We are moving from a government of "best guesses" to a state run by data. Are we ready for the responsibility?

For decades, public policy was built on intuition, experience, and the slow turn of reactive reviews. Today, that model is being replaced by the Algorithmic State, a world where real-time service logs, predictive modelling, and advanced analytics dictate how resources are spent and lives are impacted.

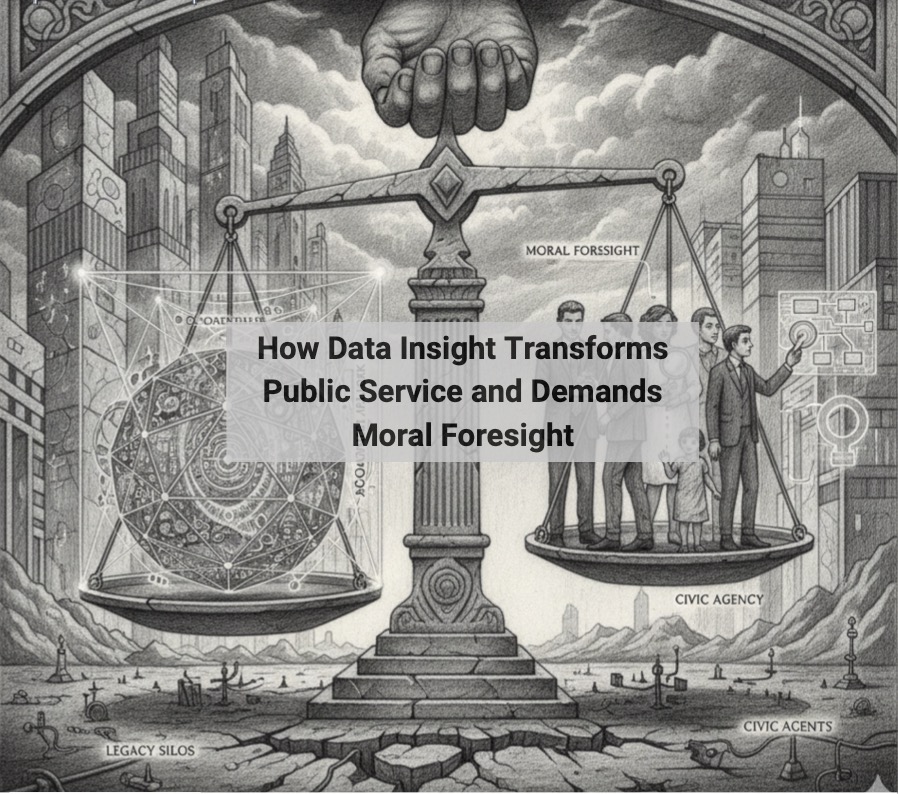

In my latest article, Governing the Algorithmic State, I explore why this shift is more than just a tech upgrade; it is a fundamental redesign of the social contract. While data offers us a path to unprecedented efficiency and proactive service, it also demands a new kind of leadership: Moral Foresight.

The question is no longer just "can we use this data?" but "should we?"

What we explore in this piece:

The Evidence Imperative: Why the move from "hunch-based" policy to rigorous data frameworks is non-negotiable for modern transparency.

Beyond Efficiency: The danger of optimising systems while losing sight of the citizens they serve.

The Ethical Architecture: How to build "Moral Foresight" into the very code of our public institutions.

The transition to an algorithmic state isn't inevitable; it's a choice. We must ensure that as our systems become more "intelligent," they don't become less human.

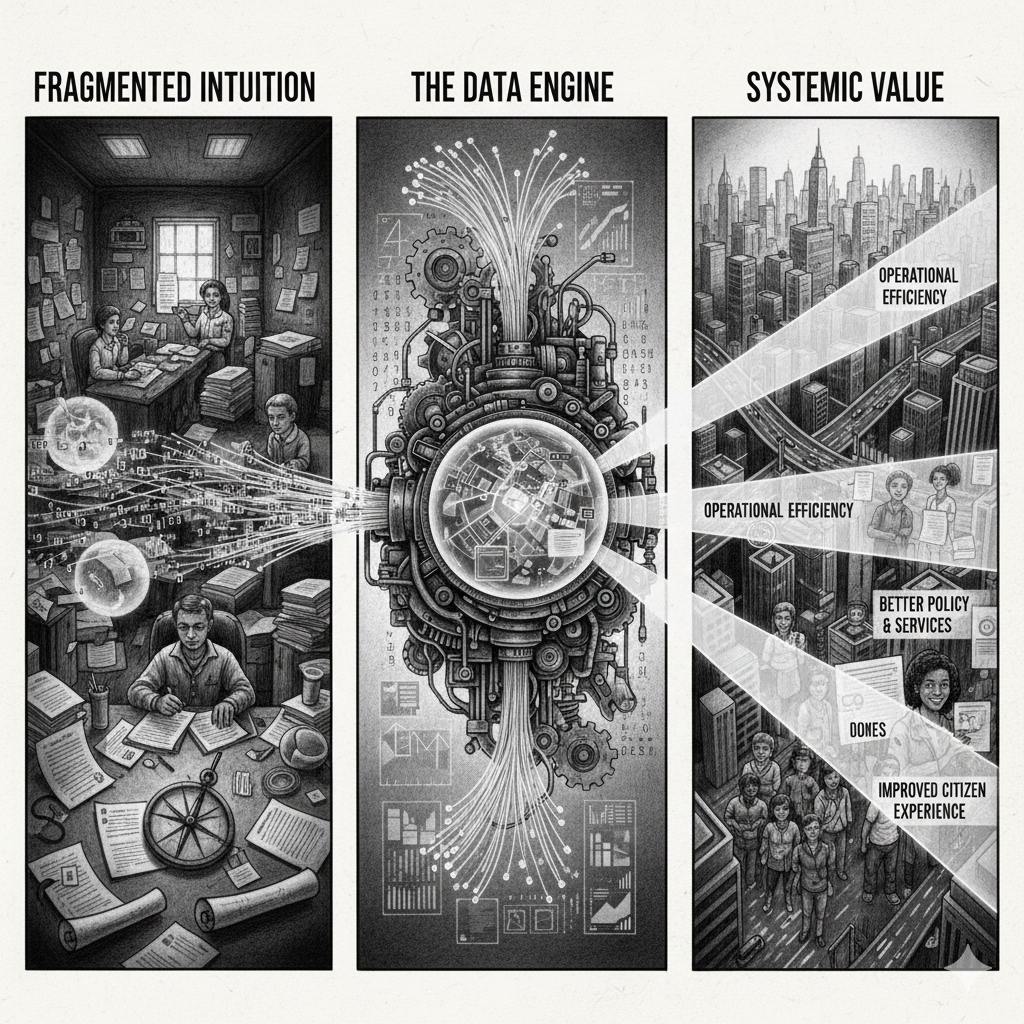

Embracing the Evidence Imperative for Systemic Change

The adoption of data-driven decision-making marks a fundamental shift in the UK public sector, moving beyond mere technological modernisation. Historically, public services relied heavily on intuition, experience and reactive policy review. However, today’s pervasive access to vast data – from real-time service logs to environmental metrics – empowers governments to harness advanced analytics and predictive modelling. This transformation enables organisations to shift from reactive governance to proactive engagement, optimising resources precisely and responding dynamically to citizens’ needs. The decisive move from intuition to rigorous evidence-based frameworks is crucial for a public sector defined by transparency, efficiency and modern responsiveness.

This article delves into the pivotal role data insights play in transforming public services in the UK. We’ll explore the shift in value creation, examine cutting-edge applications and ultimately address the crucial architectural (Data Mesh) and moral (Foresight) challenges that must be met to build a resilient, trustworthy and effective ‘Algorithmic State’.

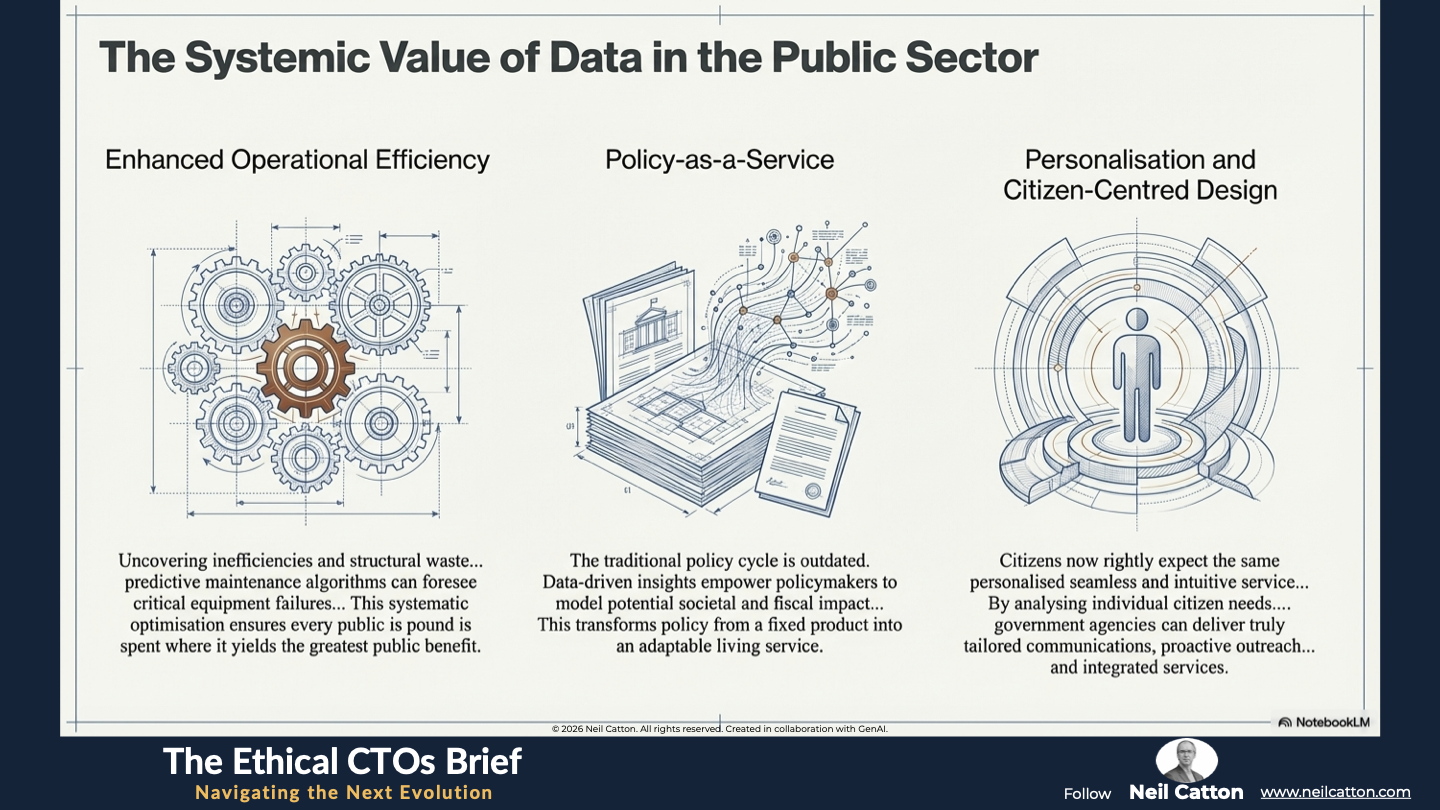

The Systemic Value of Data in the Public Sector

In today’s digital age, data is undeniably the most valuable asset for driving informed and effective decision-making throughout the public sector. The strategic ability to harness, integrate, analyse, and act upon this data empowers public institutions to deliver services that are not only faster but also structurally more transparent and ultimately more responsive to the public. By embedding data analysis into their operating model, the public sector can pinpoint intricate socio-economic trends, model the consequences of resource reallocation, and accurately assess the long-term impact of policy interventions.

Enhanced Operational Efficiency and Resource Optimisation

Data insights empower public sector organisations to delve into their processes, uncovering inefficiencies and structural waste. By analysing workflow data, they can accurately identify administrative bottlenecks, ultimately speeding up citizen service delivery. Beyond bureaucracy, predictive maintenance algorithms can foresee critical equipment failures in areas like transport, networks, energy, utilities, and critical infrastructure. This proactive approach enables planned repairs rather than costly reactive fixes. This systematic optimisation ensures every public pound is spent where it yields the greatest public benefit.

Policy-as-a-Service and Continuous Evaluation

The traditional policy cycle – long formulation, short implementation and slow retrospective review – is outdated. Data-driven insights empower policymakers to model the potential societal and fiscal impact of various policies beforehand, often through sophisticated simulations and predictive analytics. Real-time data collection and continuous feedback loops then enable constant evaluation of policies already underway. This rapid, objective adjustment, grounded in hard evidence rather than anecdotal or politically charged feedback, transforms policy from a fixed product into an adaptable, living service.

Personalisation and Citizen-Centred Design

Citizens now rightly expect the same personalised, seamless and intuitive service from the public sector as they receive from leading tech firms. Data-driven approaches are key to achieving this. By analysing individual citizen needs, preferences, and historical interactions across departments, government agencies can deliver truly tailored communications, proactive outreach like targeted public health messages, and integrated services. This ensures public services are designed around the citizen’s needs and life journey rather than outdated internal departmental structures.

Advanced Applications and Governance Tools

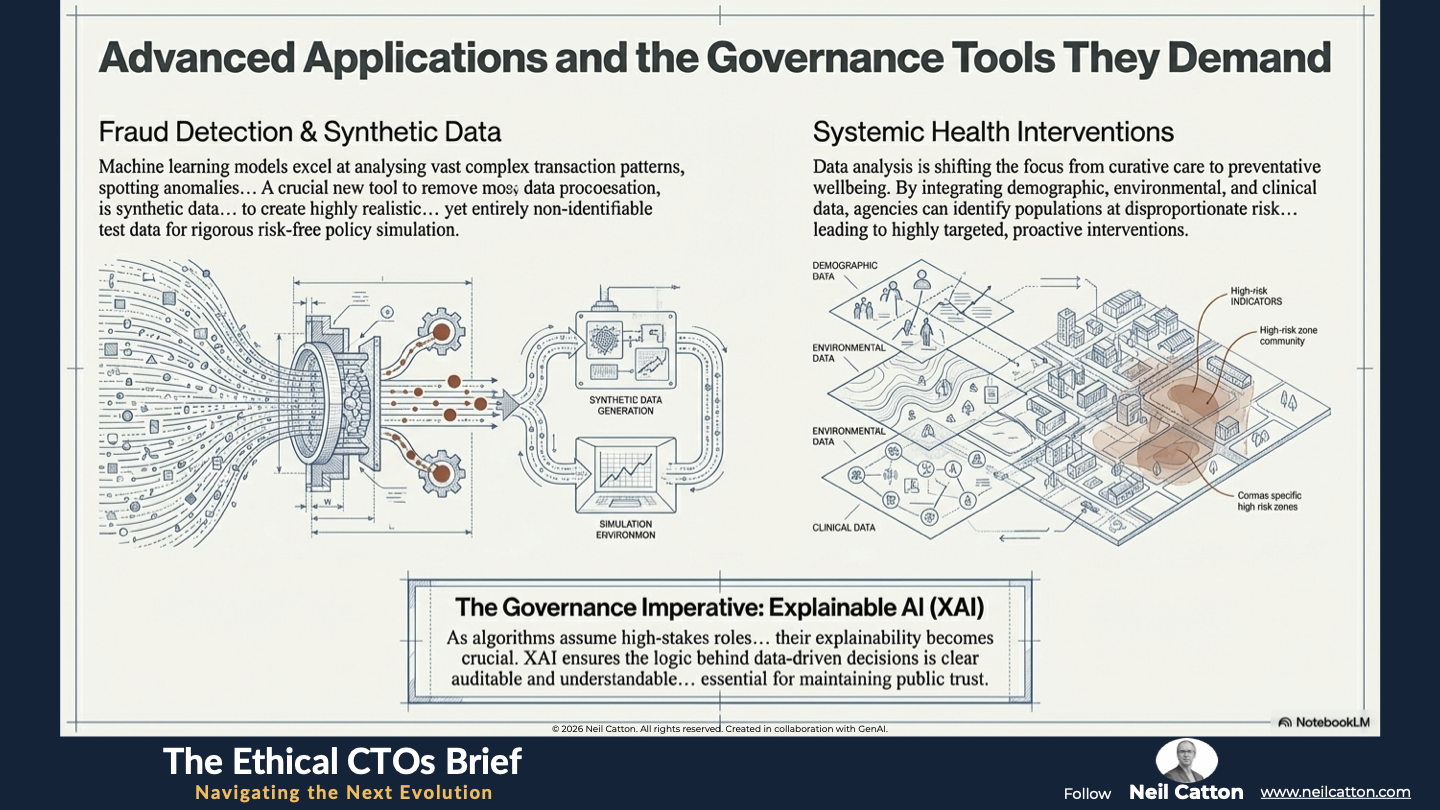

Building on the efficiencies discussed earlier, the next phase of data utilisation transcends mere process improvement. It now addresses the most critical areas of public service, including tackling systemic fraud and waste, and ensuring life-saving health interventions are precisely targeted.

This section delves into the transformative applications which not only unlock substantial societal value but also fundamentally redefine the ethical and technical accountability needed. When algorithms influence citizens’ lives, their sophistication must be matched by equally sophisticated governance. This has led to the development of essential tools like Synthetic Data and Explainable AI (XAI).

Fraud Detection, Error Reduction, and Synthetic Data

The scale of fraud and waste in the public sector is immense. Machine learning models excel at analysing complex transaction patterns, spotting anomalies and flagging suspicious activities more effectively and quickly than manual audits. A crucial new tool in this arsenal is synthetic data: generative AI is used to create highly realistic, statistically representative, yet entirely non-identifiable test data. This synthetic data allows rigorous, risk-free policy simulation and system testing without exposing sensitive information. Agencies can safely test fraud detection systems and policy changes against realistic scenarios.
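To make the idea concrete, here is a minimal sketch of testing an anomaly flag against synthetic data. Everything in it is an assumption for illustration: the claim amounts are simple random draws standing in for a generative model, and the detector is a basic median-based outlier rule rather than a production machine learning system.

```python
import random
import statistics


def make_synthetic_claims(n, seed=0):
    """Create n synthetic claim amounts; no real citizen records are used."""
    rng = random.Random(seed)
    # Assumption: ordinary claims cluster around 200 with a modest spread.
    return [round(rng.gauss(200.0, 30.0), 2) for _ in range(n)]


def flag_anomalies(amounts, threshold=3.5):
    """Return indices whose modified z-score (median and MAD based) exceeds threshold."""
    median = statistics.median(amounts)
    mad = statistics.median([abs(a - median) for a in amounts])
    if mad == 0:
        return []  # no spread at all, so nothing can be called unusual
    return [
        i for i, a in enumerate(amounts)
        if 0.6745 * abs(a - median) / mad > threshold
    ]


claims = make_synthetic_claims(500)
claims.append(5000.0)  # plant one implausibly large claim at index 500
flagged = flag_anomalies(claims)
print("indices flagged for review:", flagged)
```

Because the planted claim is known in advance, the test shows whether the detector catches it, and no sensitive record is ever touched.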

Systemic Health and Personalised Interventions

In public health, data analysis is shifting the focus from curative care to preventative wellbeing. By integrating demographic, environmental, and clinical data, health agencies can accurately map and identify populations at disproportionate risk for specific diseases, health issues, or social disadvantages. This leads to highly targeted, proactive interventions, such as personalised wellness programmes, early warning systems for localised disease outbreaks, and more effective mental health support allocation. This moves the entire system away from reactive treatment and towards preventative, data-informed care.

The Imperative for Explainable AI (XAI)

As data models (algorithms) assume high-stakes roles like determining welfare payments, assessing re-offending risk, and allocating school placements, their explainability becomes crucial. This is the realm of Explainable AI (XAI). XAI ensures the logic behind data-driven decisions is clear, auditable and understandable to both affected citizens and responsible public servants. It goes beyond simply achieving the ‘right answer’ to ensuring transparency and fairness in decision-making, which is essential for maintaining public trust.
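One simple way to picture an explainable decision is a score that can be broken down factor by factor. The sketch below assumes a hypothetical additive eligibility score; the factor names, weights, baseline and threshold are invented for illustration and do not describe any real welfare system.

```python
from dataclasses import dataclass

# Hypothetical weights, baseline and threshold, chosen only for illustration.
WEIGHTS = {"income_thousands": -0.5, "dependants": 1.5, "months_unemployed": 0.25}
BASELINE = 5.0
THRESHOLD = 2.0


@dataclass
class Explanation:
    score: float
    decision: str
    contributions: dict  # factor name -> how much it moved the score


def explain_decision(applicant):
    """Score an applicant and record each factor's contribution to the result."""
    contributions = {name: WEIGHTS[name] * applicant[name] for name in WEIGHTS}
    score = BASELINE + sum(contributions.values())
    decision = "eligible" if score >= THRESHOLD else "not eligible"
    return Explanation(score, decision, contributions)


result = explain_decision(
    {"income_thousands": 12, "dependants": 2, "months_unemployed": 4}
)
print(result.decision, result.score)
for factor, effect in result.contributions.items():
    print(f"  {factor}: {effect:+.2f}")
```

The point of the design is that both the caseworker and the citizen can see which factors pushed the outcome and by how much, rather than receiving a bare verdict.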

Architectural and Structural Challenges

While data’s transformative potential is undeniable, the UK public sector grapples with deep-rooted challenges that actively hinder its realisation. These obstacles aren’t merely technical glitches; they’re profound structural and political hurdles stemming from decades of siloed working and legacy IT systems. This section delves into the critical architectural and human challenges. These include the crisis of poor data quality and integration necessitating a radical architectural solution like the Data Mesh, and the pervasive skills gap hindering effective data utilisation. Addressing these systemic faults is essential for building a functional and trustworthy Algorithmic State.

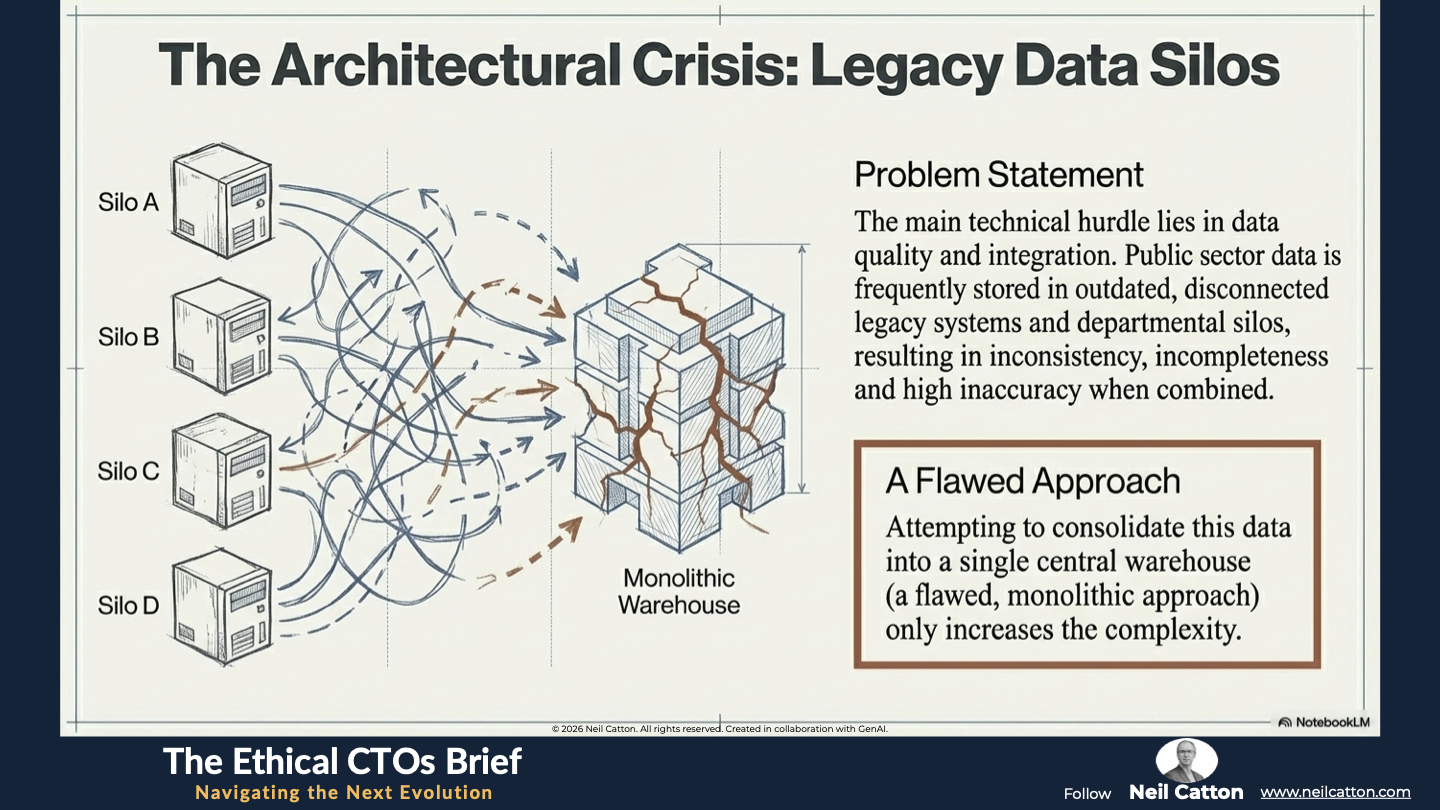

The Legacy Data Silo Crisis and the Data Mesh Solution

The main technical hurdle lies in data quality and integration. Public sector data is frequently stored in outdated, disconnected legacy systems and departmental silos, resulting in inconsistency, incompleteness and high inaccuracy when combined. Attempting to consolidate this data into a single central warehouse (a flawed, monolithic approach) only increases the complexity. The modern strategic solution is the Data Mesh model.

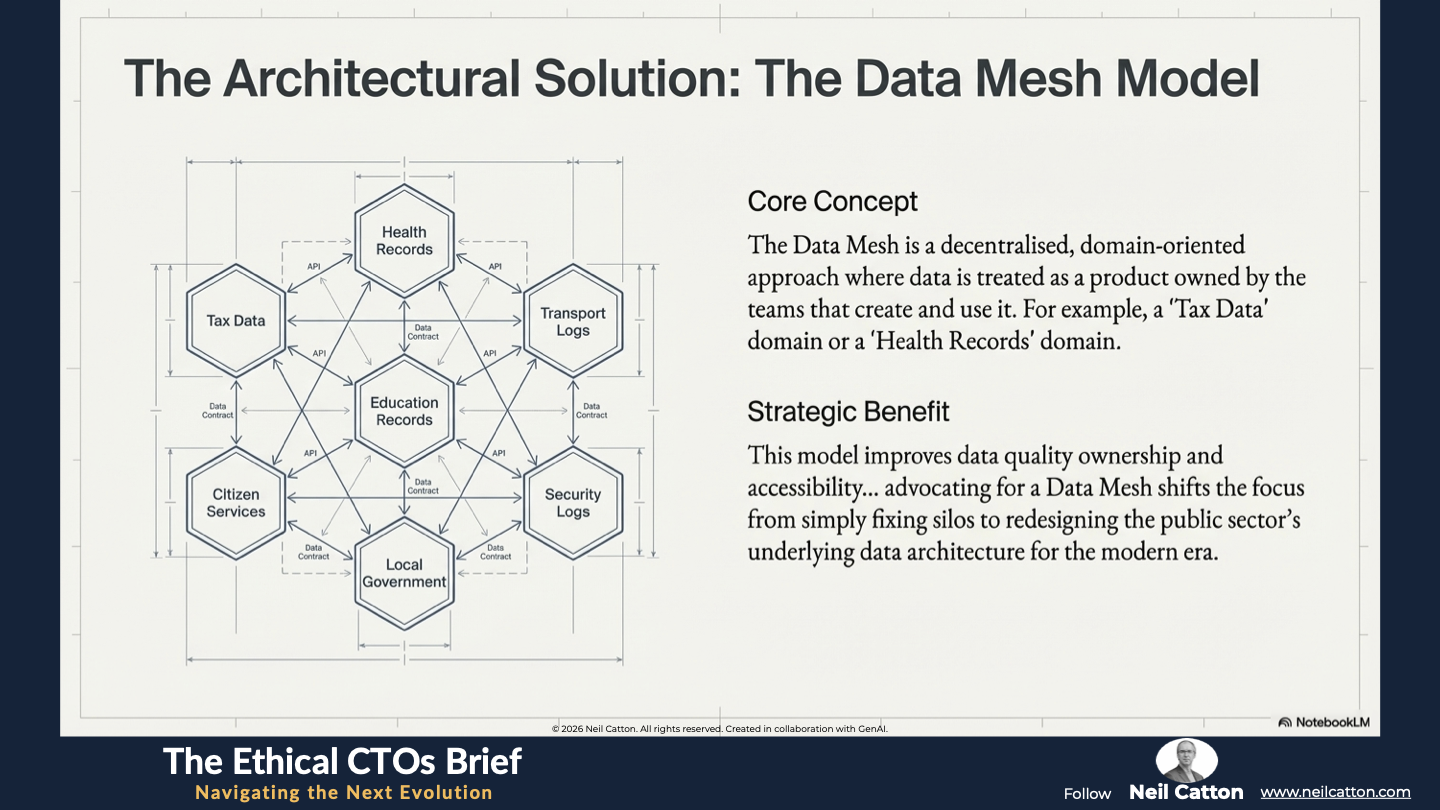

The Data Mesh is a decentralised, domain-oriented approach where data is treated as a product owned by the teams that create and use it, for example a “Tax Data” domain or a “Health Records” domain. This model improves data quality, ownership and accessibility while managing the inherent complexity of a large public sector. Advocating for a Data Mesh shifts the focus from simply fixing silos to redesigning the public sector’s underlying data architecture for the modern era.
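As a rough sketch of "data as a product", the example below models a domain that names its owner, publishes a schema and rejects records that break it. The class, field names and sample values are hypothetical; a real Data Mesh would add discovery, access control and governance that are not shown here.

```python
from dataclasses import dataclass, field


@dataclass
class DataProduct:
    domain: str   # e.g. "Tax Data"
    owner: str    # the team accountable for quality
    schema: dict  # field name -> expected Python type
    records: list = field(default_factory=list)

    def publish(self, record):
        """Accept a record only if it satisfies the domain's published schema."""
        missing = [name for name in self.schema if name not in record]
        if missing:
            raise ValueError(f"missing fields: {missing}")
        wrong = [
            name for name, expected in self.schema.items()
            if not isinstance(record[name], expected)
        ]
        if wrong:
            raise ValueError(f"wrong types: {wrong}")
        self.records.append(record)


# Hypothetical domain and owner, for illustration only.
tax_data = DataProduct(
    domain="Tax Data",
    owner="tax-domain-team",
    schema={"taxpayer_ref": str, "tax_year": int, "amount_due": float},
)
tax_data.publish({"taxpayer_ref": "T-001", "tax_year": 2024, "amount_due": 1250.0})
```

Quality is enforced at the point of publication by the owning domain, so consumers elsewhere in government can rely on the schema instead of cleaning the data again downstream.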

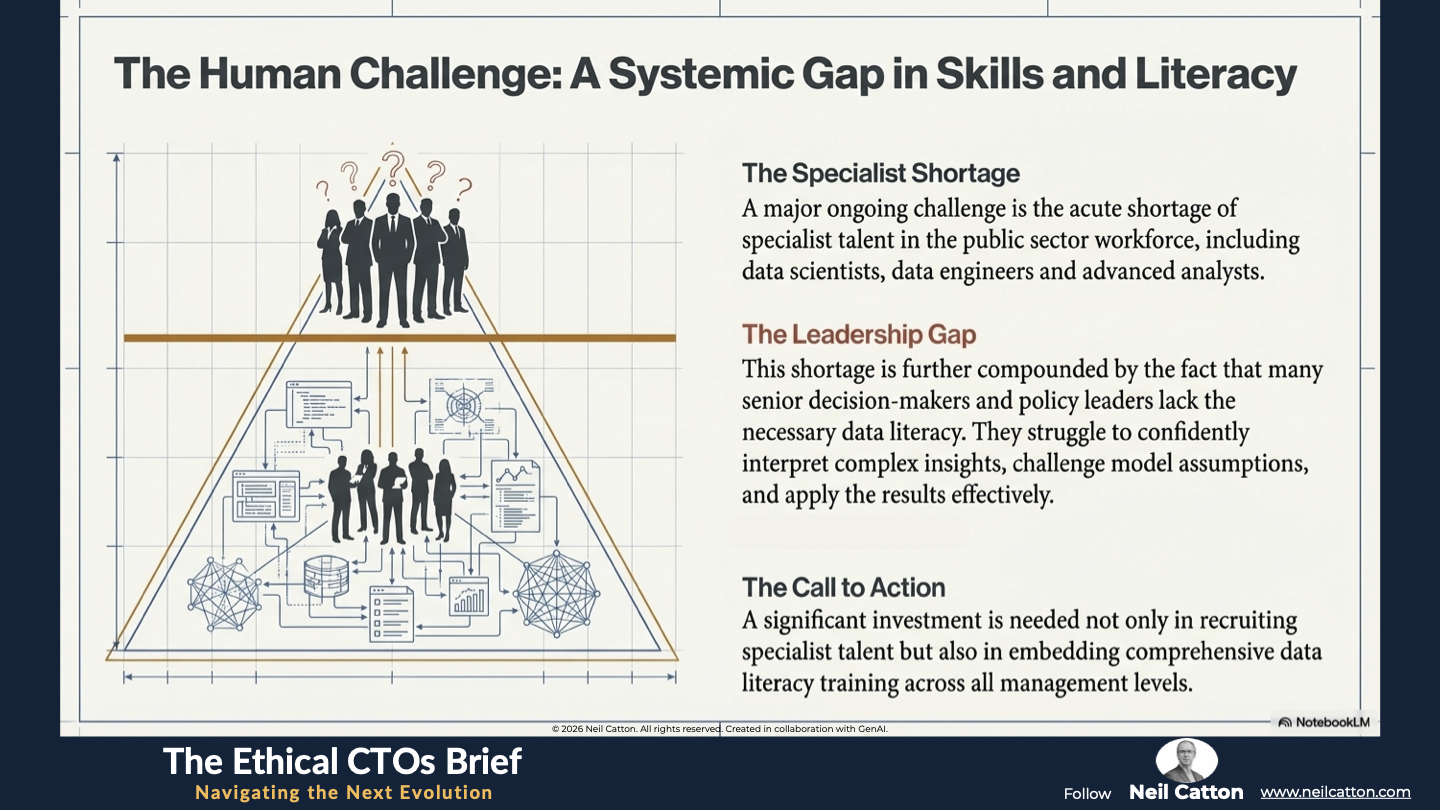

The Skills, Capacity, and Data Literacy Gap

A major ongoing challenge is the acute shortage of specialist talent in the public sector workforce, including data scientists, data engineers and advanced analysts. This shortage is further compounded by the fact that many senior decision-makers and policy leaders lack the necessary data literacy. They struggle to confidently interpret complex insights, challenge model assumptions, and apply the results effectively. Therefore, a significant investment is needed not only in recruiting specialist talent but also in embedding comprehensive data literacy training across all management levels to foster an evidence-led culture.

Moral Foresight and Governance

Having explored the powerful applications of data and the architectural challenges it presents, we reach the most crucial aspect: the moral and political framework for its deployment. These challenges aren’t technical but ethical, concerning fairness and control. This section advocates for a shift from reactive legal compliance to a proactive approach of Moral Foresight. We’ll examine why transparency in decision-making is essential, the risk of embedding historical bias in algorithms and the urgent need to address the deep-rooted political friction hindering cross-government data sharing.

From Compliance to Moral Foresight

While robust adherence to regulatory frameworks like GDPR is crucial for ensuring compliance and privacy at the point of use, true thought leadership goes beyond this. It demands a deeper consideration of the long-term societal impact of algorithmic decisions. Data-driven systems trained on historical data inherently risk reinforcing or even amplifying existing societal biases. This could lead to systemic discrimination in areas like resource allocation, policing and access to services. Therefore, a framework of Moral Foresight is essential.

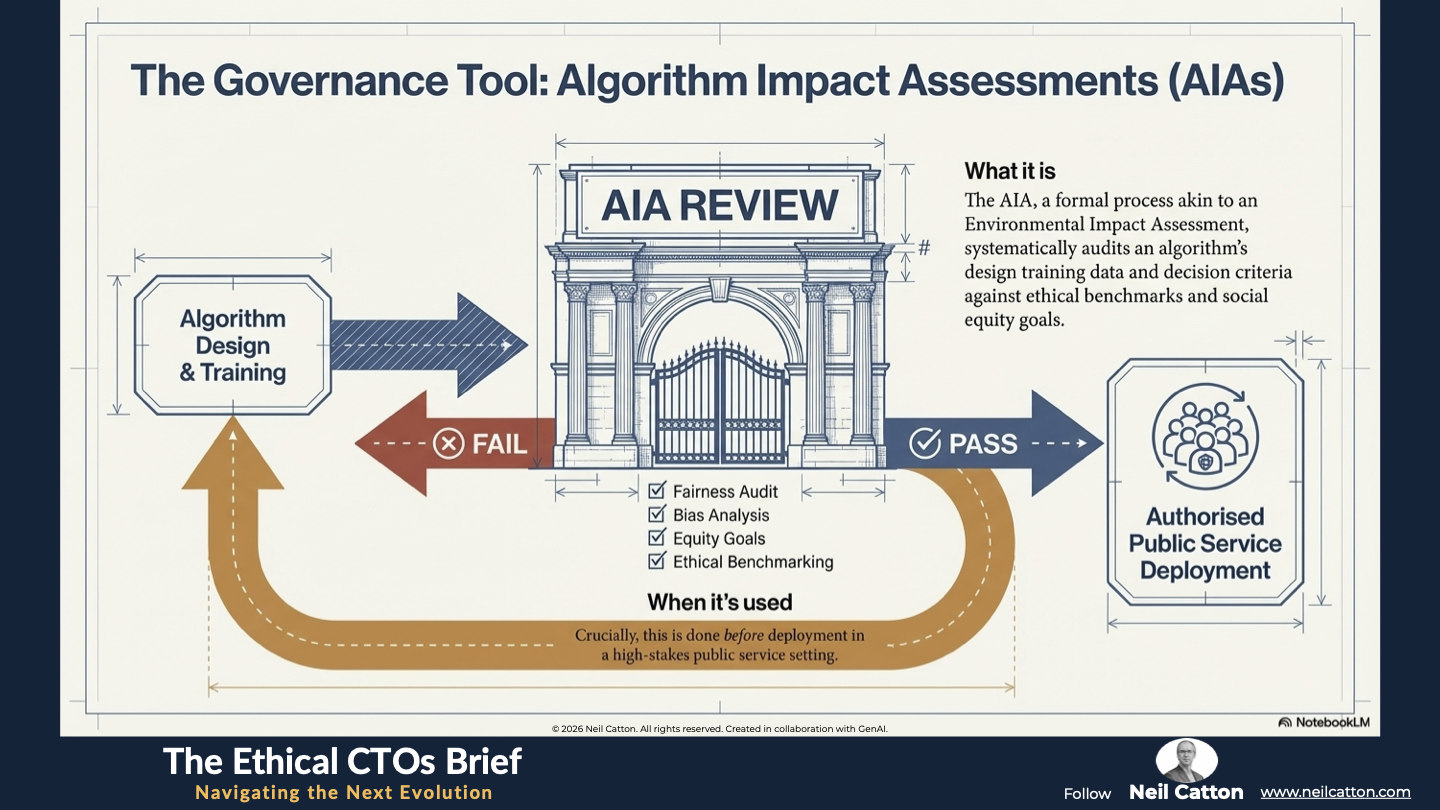

Moral foresight involves evaluating the long-term consequences of an algorithm’s deployment. It goes beyond legality, considering fairness, justice and equity across generations. This necessitates the use of Algorithm Impact Assessments (AIAs) as a governance tool.

The AIA, a formal process akin to an Environmental Impact Assessment, systematically audits an algorithm’s design, training data and decision criteria against ethical benchmarks and social equity goals before deployment in a high-stakes public service setting.
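One check an AIA might include is a comparison of approval rates across groups. The sketch below is an assumption about what such a check could look like, not a prescribed AIA method: the group labels and decisions are made up, and the 0.8 review threshold is an illustrative value, not a UK legal standard.

```python
from collections import defaultdict

REVIEW_THRESHOLD = 0.8  # illustrative assumption, not a legal standard


def selection_rates(decisions):
    """decisions: iterable of (group, approved) pairs; returns approval rate per group."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, was_approved in decisions:
        totals[group] += 1
        if was_approved:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}


def disparity_ratio(rates):
    """Lowest group approval rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody approved in any group, so no disparity to report
    return min(rates.values()) / highest


# Made-up audit sample: group A approved 8 of 10, group B approved 4 of 10.
sample = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 4 + [("B", False)] * 6
)
rates = selection_rates(sample)
ratio = disparity_ratio(rates)
needs_review = ratio < REVIEW_THRESHOLD
print(rates, ratio, "review needed" if needs_review else "within threshold")
```

A low ratio does not prove discrimination on its own, but it gives reviewers a concrete, repeatable signal to investigate before the algorithm goes live.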

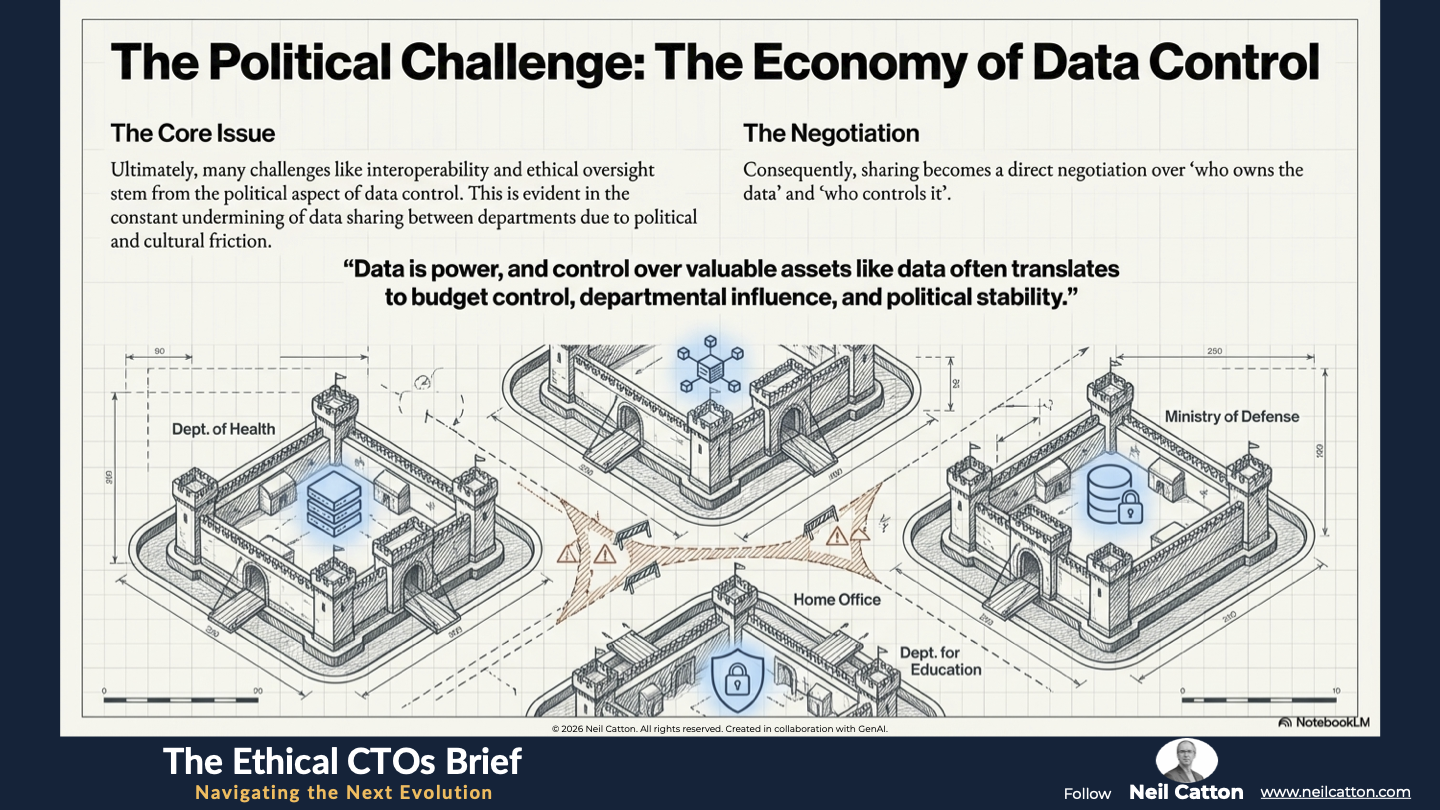

The Political Economy of Data Control

Ultimately, many challenges like interoperability and ethical oversight stem from the political aspect of data control. This is evident in the constant undermining of data sharing between departments due to political and cultural friction. Data is power, and control over valuable assets like data often translates to budget control, departmental influence, and political stability. Consequently, sharing becomes a direct negotiation over “who owns the data” and “who controls it”.

The solution lies not in a better IT system but in a ministerial mandate for interoperability. Fundamentally, the interoperability problem is solved with a signature and a clear directive from the highest political level, not a server upgrade. Data sharing must be a mandated cross-government performance objective, so that accountability rests with executive leadership rather than with technical teams left to negotiate among themselves. This removes the main source of political friction and fosters cooperation for the public good.

A Final Word

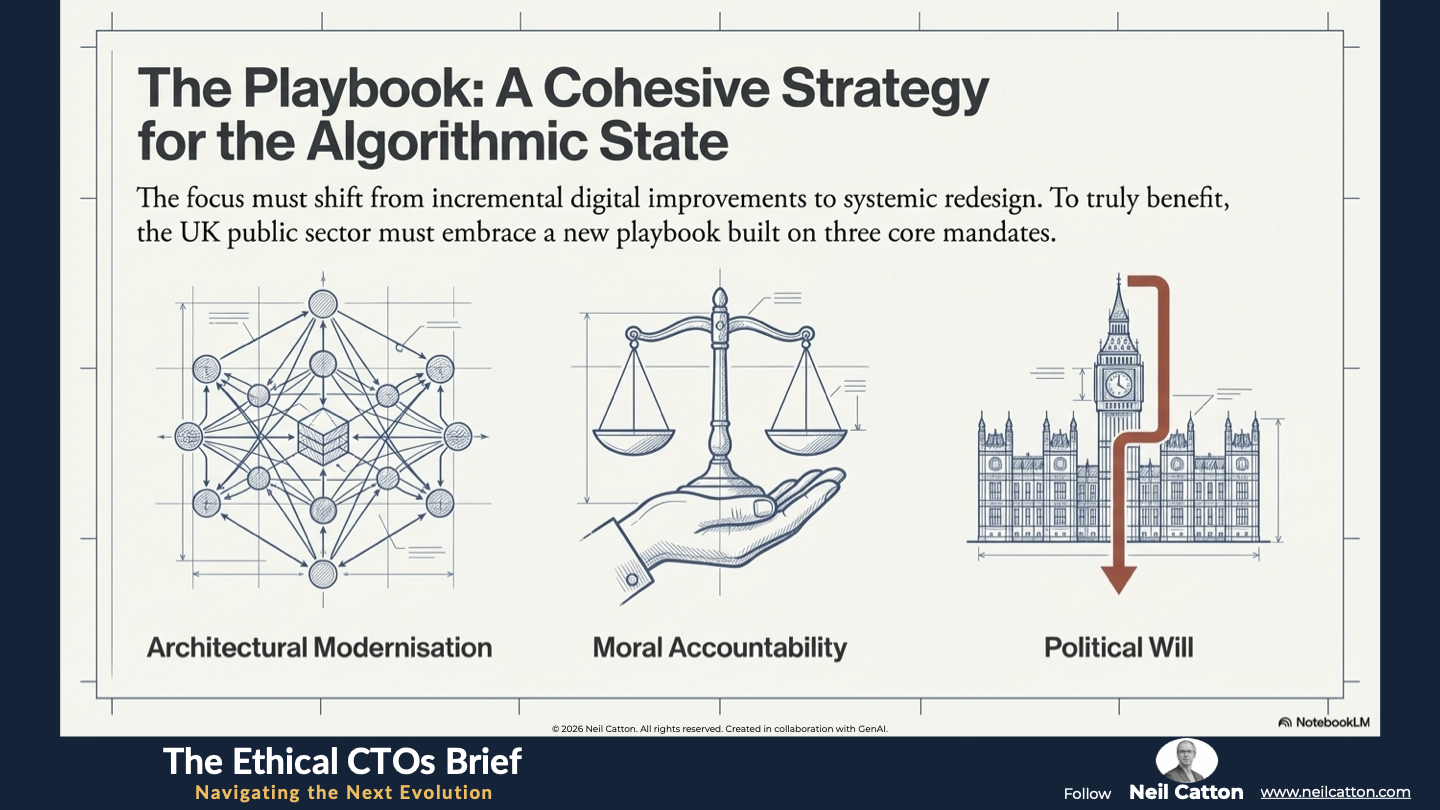

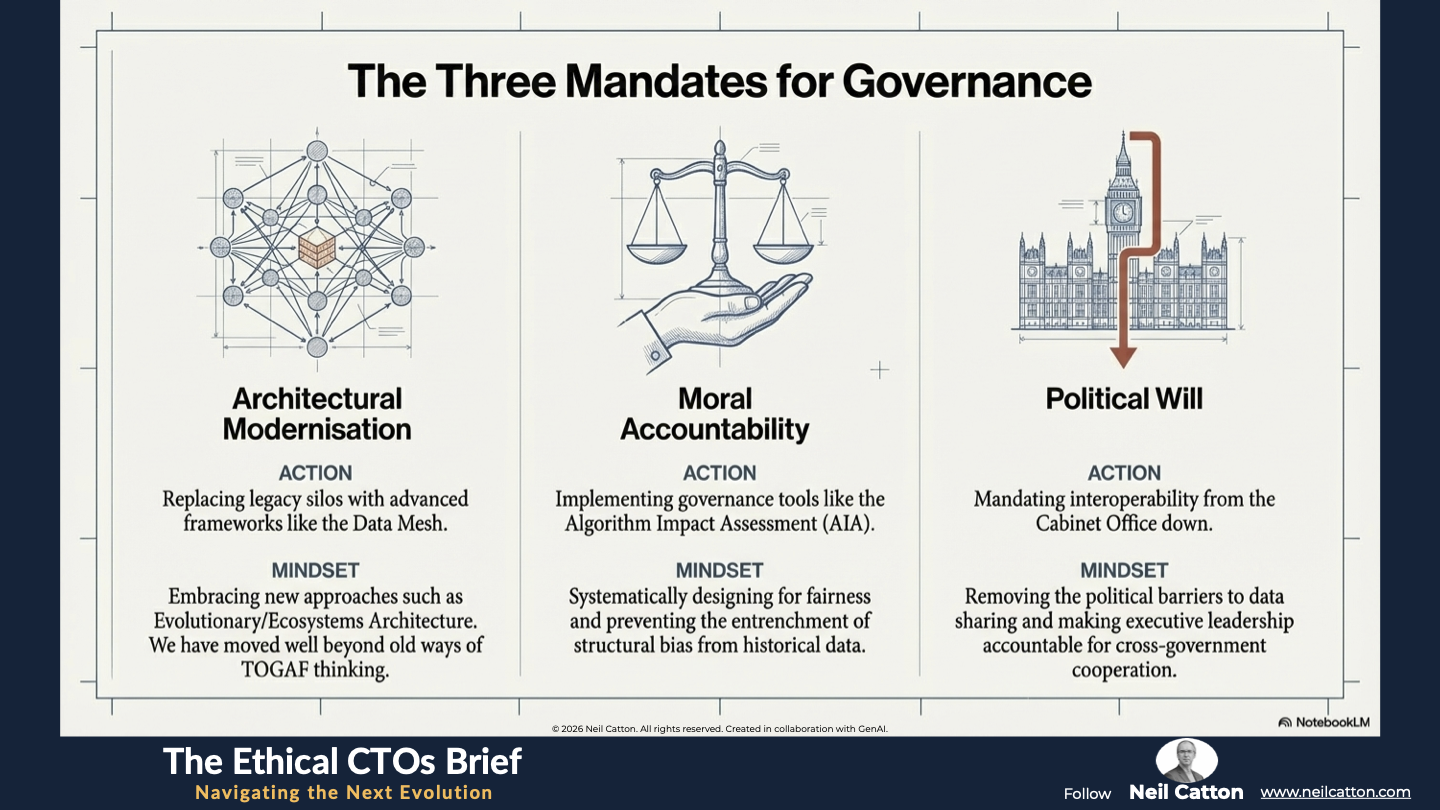

Data-driven insights are crucial for transforming the UK public sector. They enable more effective, efficient and fundamentally citizen-centred services. However, achieving a fully realised Algorithmic State faces significant structural, skills and moral challenges. The focus should shift from incremental digital improvements to systemic redesign. For the UK public sector to truly benefit, it must embrace:

- Architectural Modernisation: Replacing legacy silos with advanced frameworks like the Data Mesh and embracing new architecture approaches such as Evolutionary/Ecosystems Architecture. We have moved well beyond old ways of TOGAF thinking.

- Moral Accountability: Implementing governance tools like the Algorithm Impact Assessment to ensure fairness and prevent structural bias.

- Political Will: Mandating interoperability from the Cabinet Office down, removing the political barrier to data sharing.

Government agencies can effectively meet citizen needs by embracing these challenges through a unique blend of systems thinking, moral foresight, and practical governance insight. This approach fosters trust, transparency and sustainable innovation, which become the cornerstones of modern public service. The era of simply discussing data is over; the time to govern the resulting Algorithmic State has arrived.

Key Takeaways: From Best Guesses to Verifiable Data

The Evidence Imperative: Replacing historical intuition with rigorous, real-time data frameworks.

Operational Precision: Using predictive maintenance and workflow analysis to optimise every public pound.

Policy-as-a-Service: Moving from retrospective reviews to continuous, data-informed feedback loops.

Proactive Wellness: Shifting public health from reactive treatment to preventative, targeted intervention.

Strategic Insights: Beyond Efficiency: The Ethical Mandate

Explainable AI (XAI): Ensuring that algorithmic decisions (like welfare or school placement) are transparent and auditable.

The Data Mesh Solution: Decentralising data ownership to solve the legacy silo crisis without monolithic warehouses.

Synthetic Data: Using GenAI to create non-identifiable test data for safe policy simulation.

Algorithm Impact Assessments (AIA): Auditing long-term societal consequences to prevent the reinforcement of historical bias.

Video Summary: Intelligence Without Losing Humanity

The Redesign of the Social Contract: Why the move to data-driven policy is a choice, not an inevitability.

Moral Foresight vs. Compliance: Why checking boxes is no longer enough to manage systemic risk.

The Political Barrier: Why interoperability requires a ministerial signature, not just a server upgrade.

Future-Proofing the State: Embracing ecosystems architecture to thrive in the era of AI and Quantum.

The shift to an algorithmic state promises unprecedented efficiency, but efficiency is not a synonym for equity. Who is left behind in this new digital OS? We must confront the human cost in The Algorithmic Abyss.

The Ethical CTO: Arc 2 Index

- The Speed of Change: Governing the Tempo

- Where Policy Fails: The Governance Gap

- The Strategic Bridge: Closing the Gap

- Breaking the Structural Barriers: Data Silos

- The new OS of Society: Governing the Algorithmic State

- The human cost of exclusion: The Algorithmic Abyss

- Designing for Civic Agency: The Ethical Architect

- Trust in the age of AI: Policing at an Inflection Point

- The zenith of converged security: Designing the Future-Ready force