We’ve spent years "nudging" people. It’s time we started empowering them.

In the world of public service and digital strategy, we’ve become very good at the "nudge", those small, psychological prompts designed to get people to pay taxes on time or recycle more. But there’s a thin line between helpful guidance and quiet manipulation. As we lean harder into AI and behavioural science, we have to ask: are we building systems that respect people, or just systems that manage them?

In my latest article, The Ethical Architect, I argue that we need to move past simple Gamification and Nudge Theory. We shouldn't just be looking for "compliance"; we should be architecting for Civic Agency.

This piece covers:

Beyond the Trick: Why behavioural tools are failing when they aren't backed by a moral framework.

The Autonomy Trap: How "dark patterns" in design erode trust between the state and the citizen.

Designing for Equity: How to build a "Choice Architecture" that prioritises transparency and genuine human benefit over mere efficiency.

The goal isn't just to make systems work better; it's to make them work for the people they serve. It’s time to move from being designers of behaviour to architects of integrity.

The Crisis of Civic Engagement

The UK public sector faces a growing challenge: civic disengagement, passive compliance and stretched resources. Traditional communication and service delivery models are failing. We’re at a critical point where the citizen-state relationship needs a fundamental redesign. Behavioural science, particularly Gamification and Nudge Theory, offers tools for more than minor improvements; they can lead to systemic repair.

These powerful, psychology-backed strategies are often seen as simple tricks to manipulate people into doing things like recycling more or paying taxes on time. However, to use them effectively, especially in public life, we need to go beyond simple descriptions and adopt a governance imperative. This article shifts the focus from the tools themselves to the ethical and systemic governance of their deployment. It emphasises the need to ensure these strategies build genuine civic agency rather than erode it. For public sector leaders, the challenge is clear: use these tools to create interactive, responsive, and citizen-centred services but only within a strong moral framework.

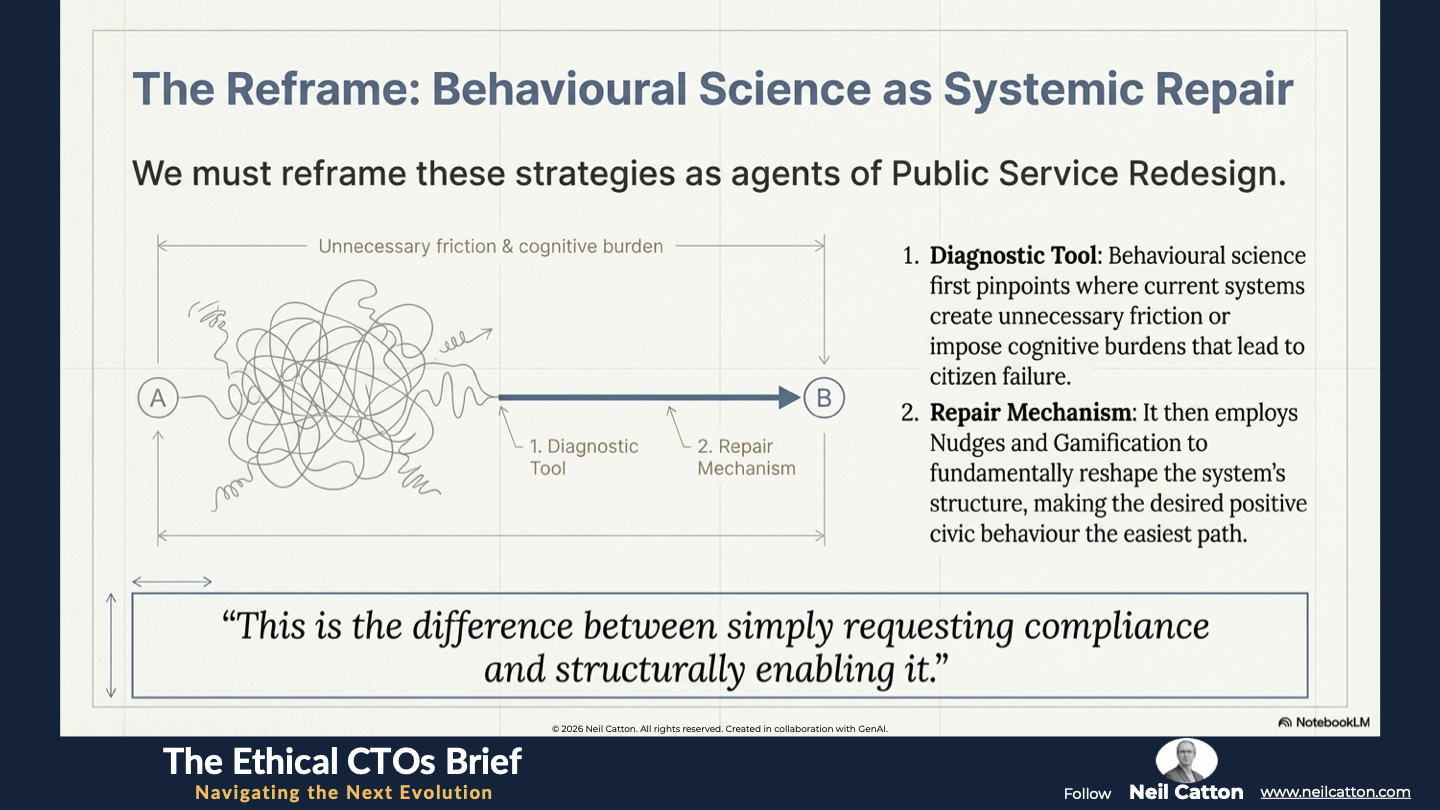

Behavioural Science as Systemic Repair

To fully appreciate the potential and inherent risks of these strategies, we must reframe them as agents of Public Service Redesign rather than isolated marketing tactics. Behavioural science acts as a diagnostic tool, pinpointing where current systems create unnecessary friction or impose cognitive burdens leading to citizen failure. The repair mechanism then employs Nudges and Gamification to fundamentally reshape the system’s structure, making the desired positive civic behaviour the easiest path. This is the difference between simply requesting compliance and structurally enabling it.

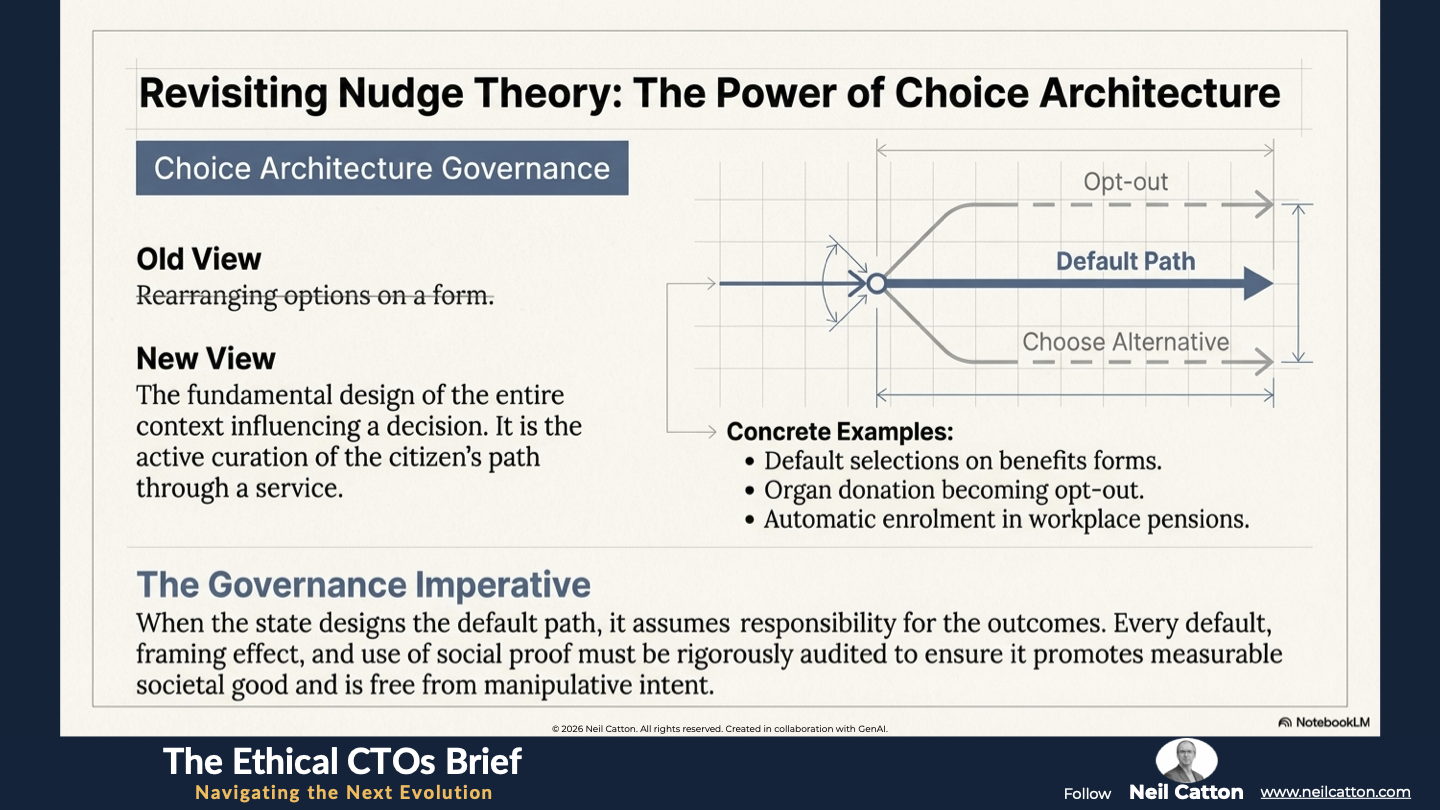

Choice Architecture Governance

Nudge theory proposes that subtle changes to the “choice architecture” can predictably influence behaviour without restricting freedom of choice or altering economic incentives. This goes beyond simply rearranging options; it’s a fundamental aspect of system design that shapes the path a citizen takes through a service.

- Defining the Architecture: The ‘choice architecture’ encompasses the entire context and environment influencing a decision. This includes online application sequences, public bin labels, healthy food placement and default selections on benefits forms. When governments design this context, they assume responsibility for the resulting behaviour.

- The Systemic Perspective: When a public body alters a default setting on a public service, such as making organ donation opt-out or automatically enrolling eligible citizens in a workplace pension scheme, it fundamentally redesigns the interaction ecosystem. This is a shift from a passive, hands-off approach to active governmental curation. Simply setting a default – requiring only acceptance rather than active decision-making – eliminates the substantial cognitive effort needed to initiate a new behaviour.

- Requirement for Governance: This level of influence demands Choice Architecture Governance. Since the state dictates the default path, every default setting, framing effect and use of social proof like “9 out of 10 people in your area…” must undergo rigorous auditing. This audit must ensure the nudge genuinely promotes measurable societal good (a “good nudge”) and is free from manipulative or self-serving intent. The governing policy should clearly define who is being nudged, the reason, and the verifiable benefit primarily accruing to the citizen or community rather than simply boosting a government metric. This is crucial for maintaining public trust.
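As a minimal sketch of what such an audit record might look like in practice, the snippet below checks that each nudge states who is being nudged, why, and what verifiable benefit it delivers. The `NudgeRecord` class, its field names and the `audit_findings` function are hypothetical illustrations, not part of any existing framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NudgeRecord:
    """Hypothetical audit entry for one nudge; field names are illustrative."""
    name: str
    target_group: str        # who is being nudged
    rationale: str           # why the nudge exists
    citizen_benefit: str     # benefit accruing to the citizen or community
    benefit_metric: str      # how that benefit will be verified
    opt_out_available: bool  # can the citizen easily take another path?


def audit_findings(record: NudgeRecord) -> list[str]:
    """Return a list of governance gaps; an empty list means none were found."""
    findings = []
    for field_name in ("target_group", "rationale", "citizen_benefit", "benefit_metric"):
        if not getattr(record, field_name).strip():
            findings.append(f"missing {field_name}")
    if not record.opt_out_available:
        findings.append("no opt-out path")
    return findings
```

A record that cannot name a citizen-facing benefit or offers no opt-out would be flagged before deployment rather than after complaints arrive.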

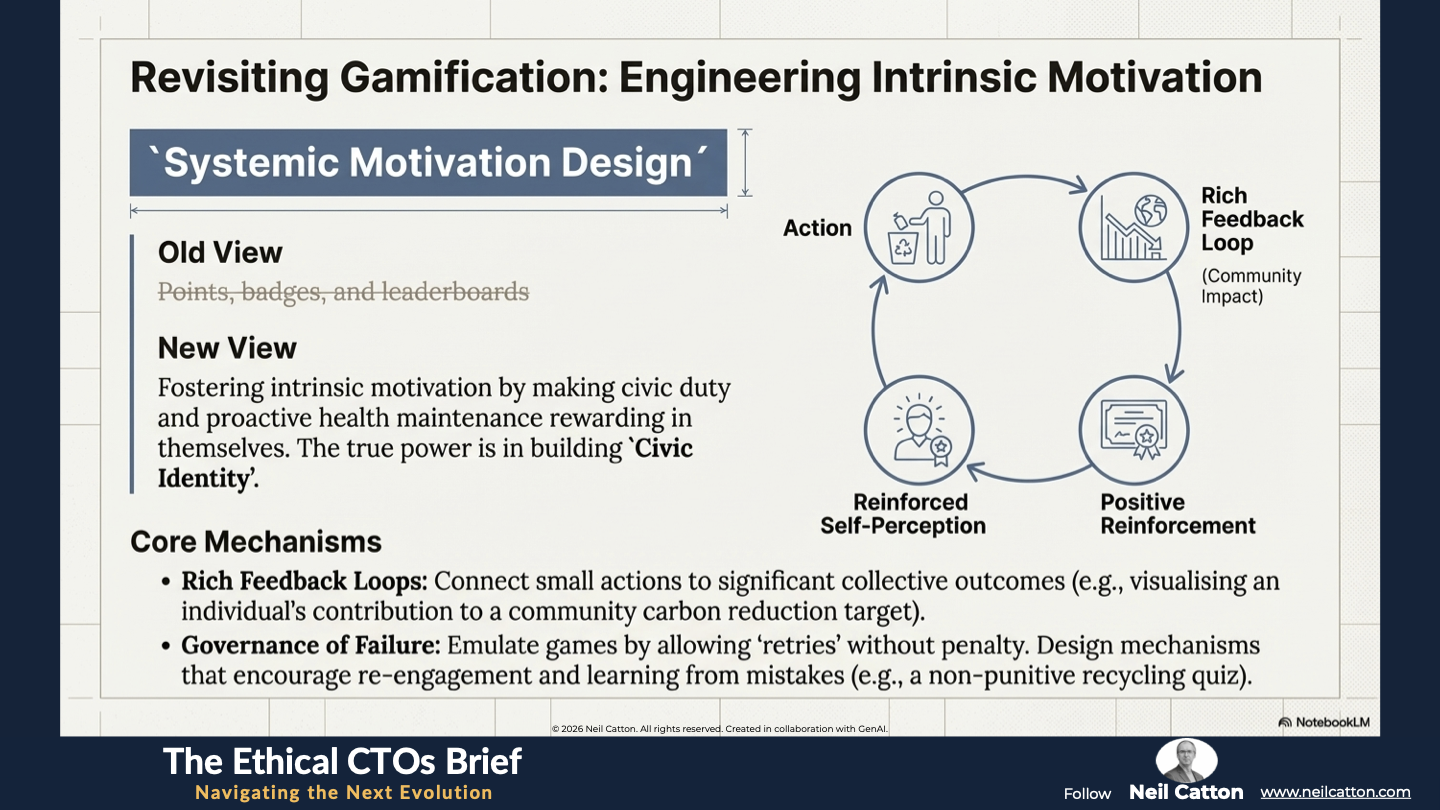

Systemic Motivation Design

Gamification involves applying familiar game elements like points, badges, progress bars, and leaderboards to non-game contexts. However, the public sector’s goal should be to foster intrinsic motivation rather than short-term compliance driven by external rewards. This means making civic duty, public service participation and proactive health maintenance rewarding in themselves.

- The Design Goal: We must design for long-term Systemic Motivation. This involves transitioning citizens from simple external rewards, like a digital badge for recycling once, to embracing the positive emotional and social feedback loop of meaningful, sustained participation. Gamification’s true power lies in building Civic Identity. By consistently rewarding behaviour, the system reinforces citizens’ self-perception, such as “I’m a responsible recycler” or “I’m an engaged community member”. This self-reinforcing loop makes the behaviour sustainable.

- Feedback Loops and Agency: Effective public sector gamification creates rich, ongoing feedback loops. For example, an app rewarding domestic energy reduction shouldn’t simply award abstract points. Instead, it should immediately visualise the citizen’s specific and measurable contribution to the community’s carbon reduction target. This connects their small action to a significant collective outcome, strengthening a sense of purpose and collective agency. The design should incorporate elements like visible progress tracking, clear milestones, and acknowledgement of effort rather than just absolute achievement. This ensures it appeals to all levels of ability and commitment.

- Governance of Failure: Unlike transactional services where failure incurs a penalty, Systemic Motivation Design must incorporate the right to fail gracefully. For example, a game allows immediate retries after a failure without severe consequences. Public services should emulate this by designing mechanisms that encourage re-engagement and learning from mistakes. Consider a “Quiz” on recycling knowledge offering rewards for correct answers and immediate non-punitive feedback for incorrect ones. This approach reinforces mastery rather than imposing bureaucratic punishment.
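The feedback loop described in the energy-reduction example could be sketched roughly as follows. The function name, the use of kWh as the unit and the message wording are all assumptions made for illustration; a real service would draw these figures from its own metering and target data.

```python
def contribution_feedback(saved_kwh: float,
                          community_saved_kwh: float,
                          community_target_kwh: float) -> str:
    """Turn one household's saving into a message about collective progress.

    All parameters are hypothetical inputs measured in kWh.
    """
    if community_target_kwh <= 0:
        raise ValueError("community_target_kwh must be positive")
    # Cap progress at 100% so an exceeded target still reads sensibly.
    progress = min(community_saved_kwh / community_target_kwh, 1.0)
    # Avoid dividing by zero before any community savings are recorded.
    share = saved_kwh / community_saved_kwh if community_saved_kwh > 0 else 0.0
    return (f"You saved {saved_kwh:.1f} kWh, {share:.1%} of your community's savings. "
            f"Together you are {progress:.0%} of the way to the target.")
```

The message reports effort and shared progress rather than a rank, which fits the emphasis on acknowledging contribution over absolute achievement.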

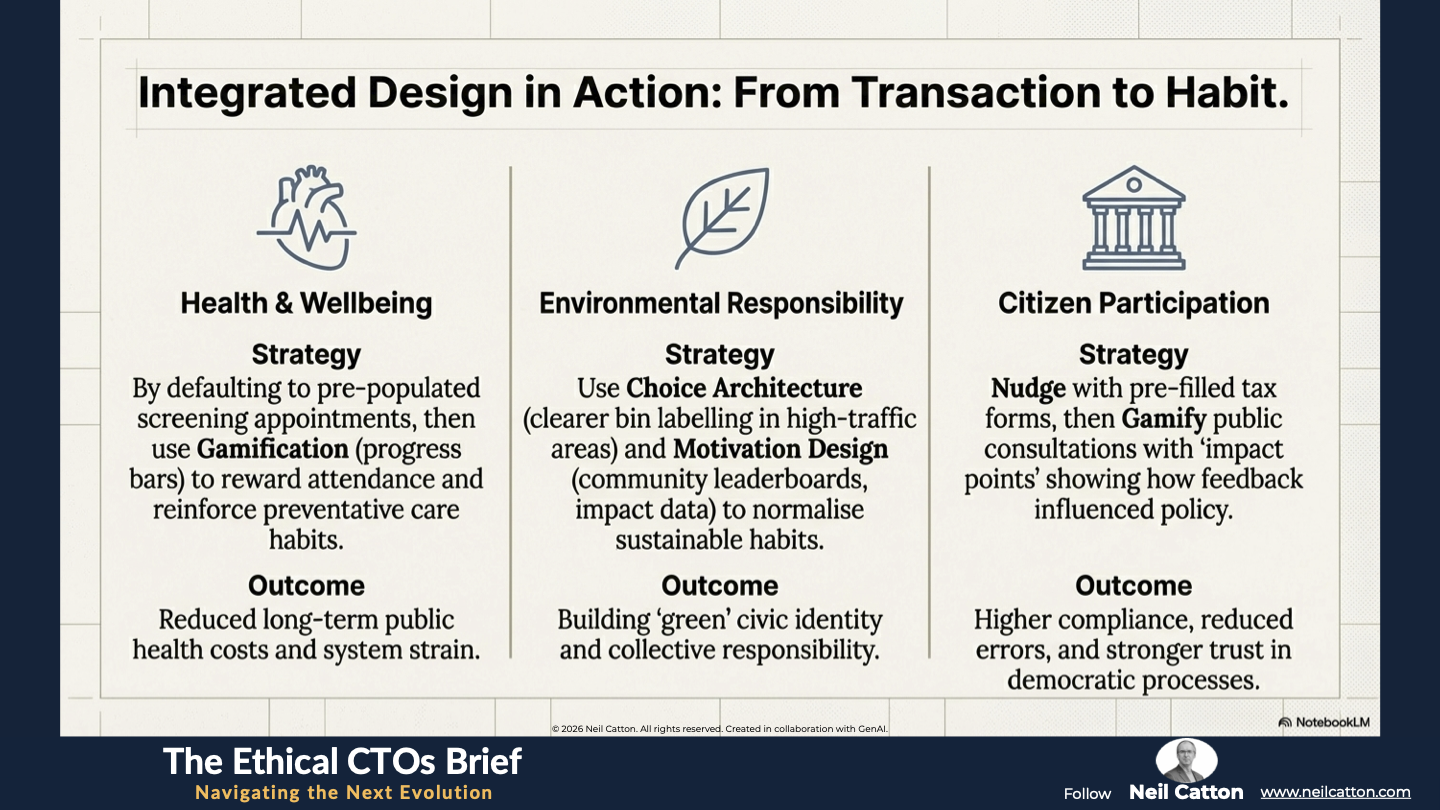

The most powerful applications emerge when Choice Architecture and Systemic Motivation Design are used in concert, moving services from transactional requirements to integrated, habitual interactions. The key to successful application in the UK public sector is focusing rigorously on measurable social benefit while ensuring that the mechanism is accessible and equitable across all demographics.

| Sector | Integrated Strategy Example | Expected Systemic Outcomes |
| --- | --- | --- |
| Health & Wellbeing | Nudge users by defaulting to booking a pre-populated screening appointment (reducing cognitive load), then use Gamification (points and progress bars) to reward timely attendance and follow-up activities, reinforcing the habit of preventative care. | Increased preventative health behaviours, leading to a demonstrable reduction in long-term public health costs and system strain. |
| Environmental Responsibility | Nudge citizens by placing recycling bins with clearer, simplified labelling in high-traffic areas (Choice Architecture), then use community leaderboards and badges for high performance (Motivation Design) coupled with tangible impact data. | Normalisation of sustainable habits; building 'green' civic identity and collective responsibility. |
| Citizen Participation | Nudge users towards pre-filled (but editable) tax or application forms (reducing administrative friction), and gamify public consultations with "impact points" or visibility on how their feedback tangibly influenced policy decisions. | Higher administrative compliance, reduced errors, and a stronger sense of political efficacy and trust in democratic processes. |

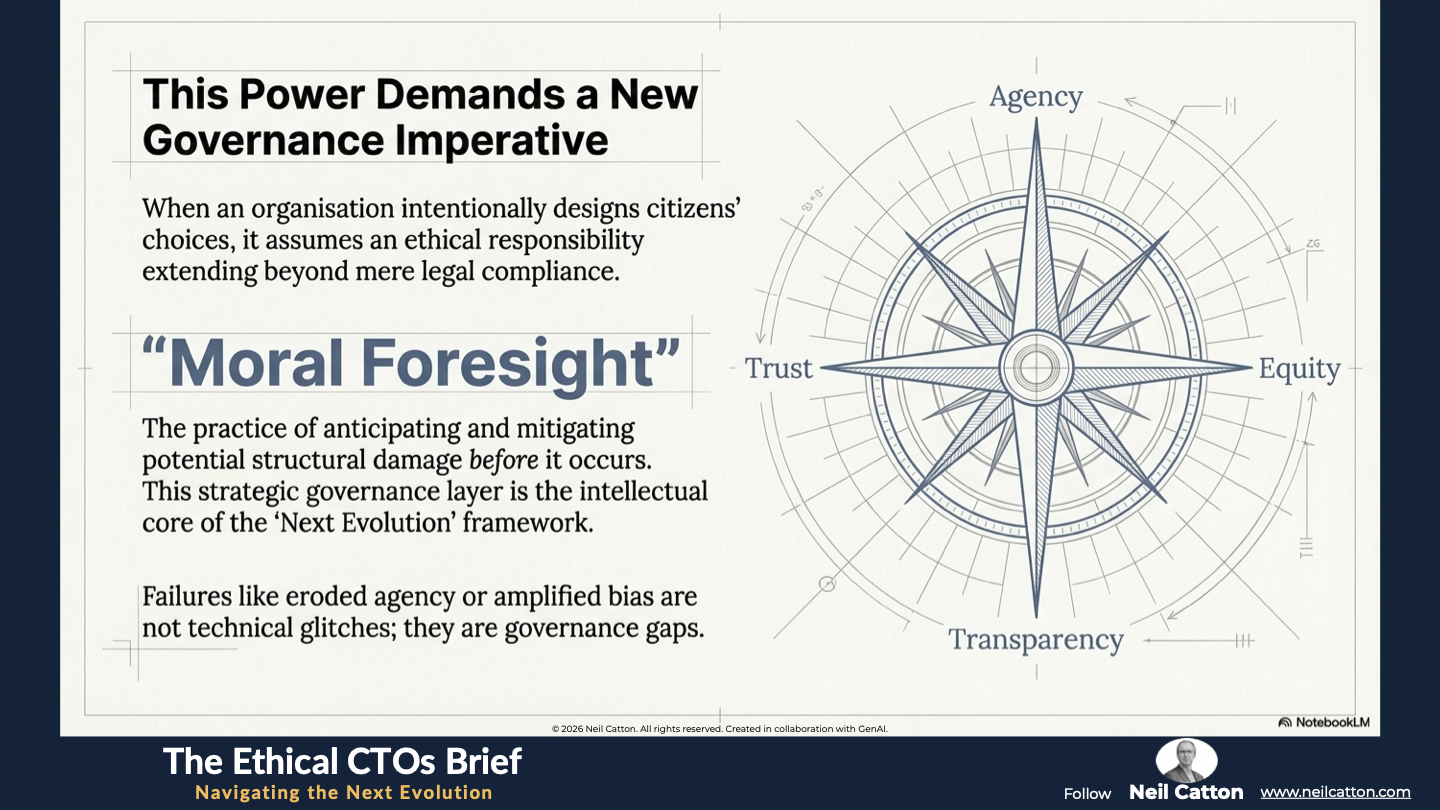

The Governance Imperative: The Ethical Framework and Moral Foresight

Transitioning from practical application to ethical management of behavioural tools demands a substantial shift in leadership mindset. When an organisation intentionally designs citizens’ choices, it assumes an ethical responsibility extending beyond mere legal compliance. This influence must be rigorously governed by moral foresight – anticipating and mitigating potential structural damage before it occurs. This strategic governance layer forms the intellectual core of the “Next Evolution” framework, ensuring innovation serves democracy rather than undermines it.

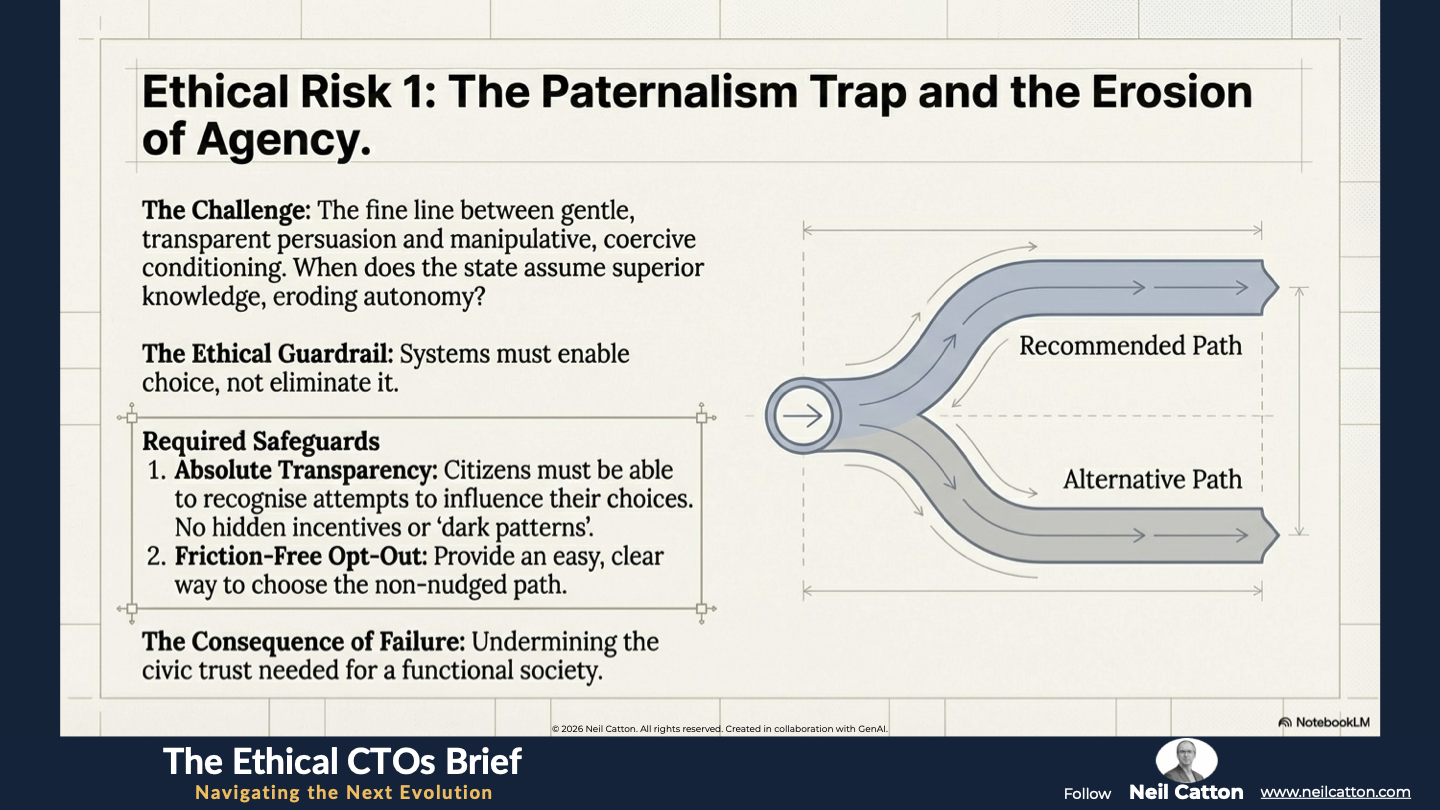

The Erosion of Agency: Navigating the Paternalism Trap

The main ethical challenge in behavioural science deployment lies in the fine line between gentle, transparent persuasion and manipulative, coercive conditioning. When the state subtly nudges citizens towards a “good” behaviour, it risks falling into the Paternalism Trap. This occurs when the state assumes superior knowledge over the individual, eroding autonomy and the ability to make independent choices. This is particularly concerning in a liberal democracy where the relationship between state and citizen is built on mutual trust and respect for individual decision-making.

- The Ethical Guardrail: Deployment must always rigorously preserve civic agency. A well-governed system guarantees absolute and easily understandable transparency about the underlying nudge mechanism. Citizens should at the very least be able to recognise attempts to influence their choices. Crucially, the system must provide an easy, friction-free way to opt out of the nudge or choose the less desirable path. If a gamified system employs hidden incentives, dark patterns in user interface design or mechanisms specifically engineered to coerce rather than inform, it’s not simply poorly designed; it’s fundamentally and ethically unsound. This undermines the very civic trust needed for a functional society. Governance must enforce the rule that systems enable choice, not eliminate it.

Algorithmic Bias in Personalisation

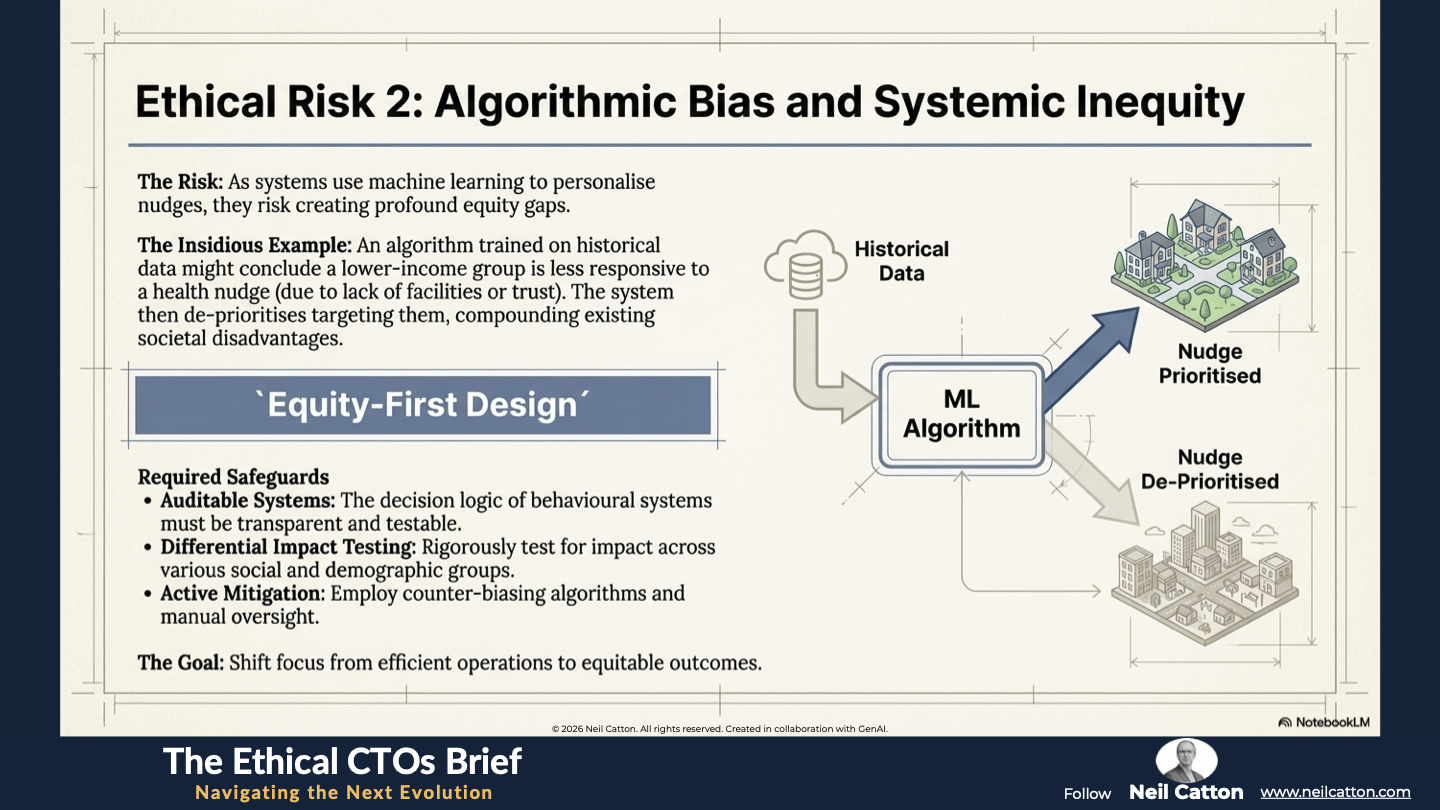

The risk of algorithmic bias increases as systems use machine learning and predictive analytics to personalise nudges based on a citizen’s past behaviour, demographics or location. Given our lives are complex and constantly changing, any algorithm would need to incorporate substantial modelling of “life-change” events to provide the necessary contextualisation. Without that context, personalisation could effectively hardwire systemic prejudice.

- The Systemic Risk: An algorithm trained on historical data might conclude that citizens in a specific demographic like a lower-income area or ethnic minority group are less likely to respond positively to a health nudge. This could stem from a lack of local facilities or historical distrust. Consequently, the system might strategically de-prioritise targeting them. This calculated risk creates an equity gap in service provision. Those who need help most are then left without intervention, further compounding existing societal disadvantages.

- Moral Foresight Mandate: Public sector leaders must establish a mandate for Equity-First Design. This involves creating fully auditable and transparent behavioural systems whose decision logic is rigorously tested not only for technical efficiency but also for their differential impact across various social and demographic groups. The governance framework must demand active mitigation strategies like counter-biasing algorithms or manual oversight of targeting rules to prevent reinforcing existing inequalities. Ultimately, the goal should be equitable outcomes rather than just efficient operations.
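One simple way to test for differential impact is to compare how often each group is actually targeted by the system. The sketch below is illustrative only: the function names are hypothetical, and the 0.8 threshold is an arbitrary assumption for the example, not a recommended or statutory standard.

```python
from collections import defaultdict


def targeting_rates(decisions):
    """Compute the share of each group that was targeted.

    decisions: iterable of (group, was_targeted) pairs (hypothetical log format).
    """
    totals = defaultdict(int)
    targeted = defaultdict(int)
    for group, was_targeted in decisions:
        totals[group] += 1
        if was_targeted:
            targeted[group] += 1
    return {group: targeted[group] / totals[group] for group in totals}


def disparity_flags(rates, min_ratio=0.8):
    """Flag groups targeted at less than min_ratio of the best-served group's rate."""
    best = max(rates.values())
    if best == 0:
        return []  # nobody was targeted, so there is no relative gap to report
    return sorted(group for group, rate in rates.items() if rate / best < min_ratio)
```

A flagged group is a prompt for manual review of the targeting rules, not proof of bias on its own; the underlying cause may be missing facilities or missing data rather than the algorithm.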

Protecting Behavioural Capital and Data Trust

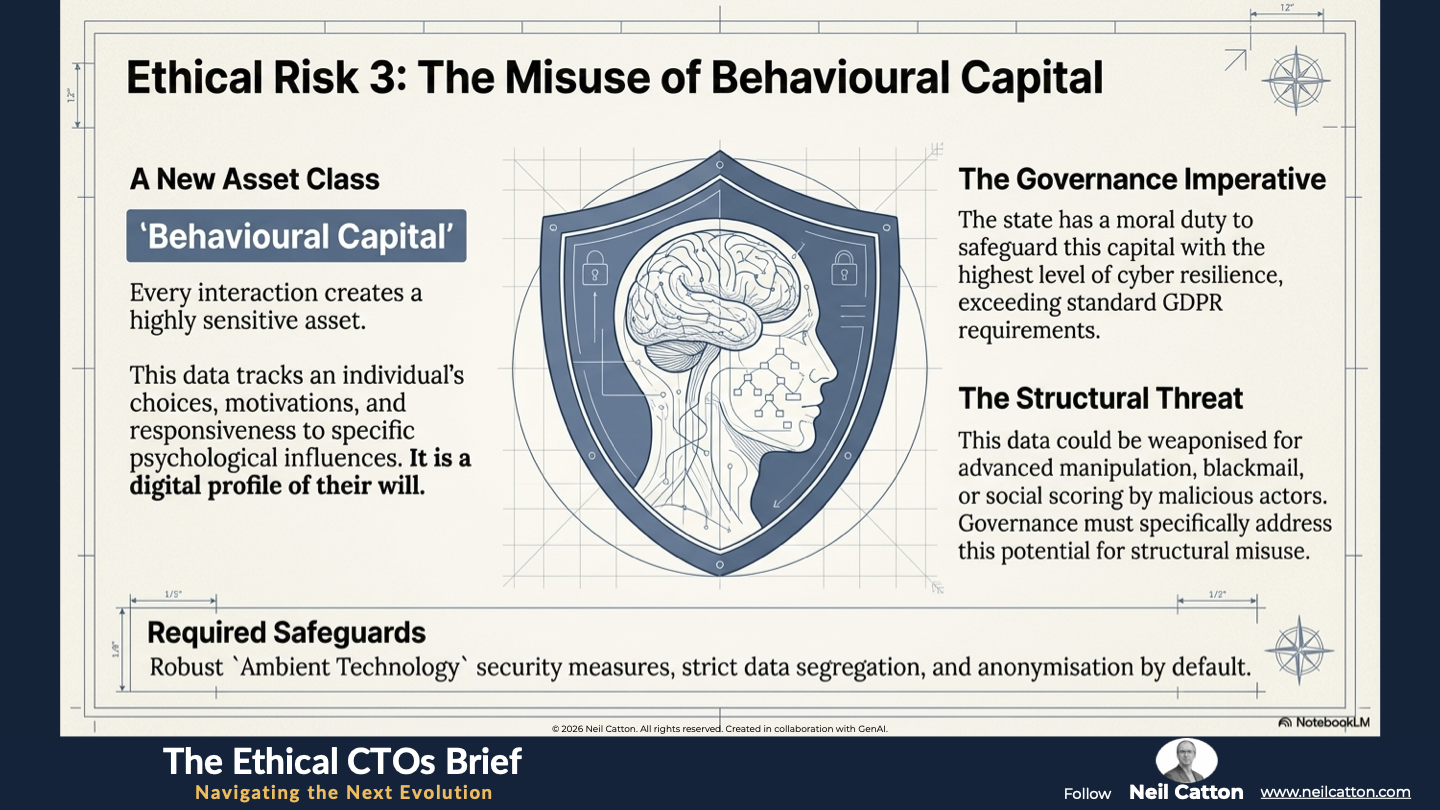

Every interaction in a gamified or nudged environment creates a highly sensitive asset: behavioural capital. This data tracks an individual’s choices, motivations, cognitive responses, and their responsiveness to specific psychological influences. It’s a digital profile of their will.

- Data Governance Imperative: The state has a moral and civic duty to safeguard this capital with the highest level of cyber resilience. Citizens are sharing their very intentions, and if it falls into the hands of malicious internal actors, cyber criminals, or hostile state-sponsored threats, this data could be used for advanced targeted manipulation, blackmail, or social scoring. Data generated from these systems must be strictly segregated, anonymised or pseudonymised where possible, and governed by frameworks exceeding standard GDPR requirements. Governance must specifically address the potential for structural misuse – how this data could be weaponised against the population. This demands robust Ambient Technology security measures deeply integrated into system architecture, protecting data not only from breaches but also from inappropriate internal application.
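As one illustration of pseudonymisation, a citizen identifier can be replaced with a keyed hash before behavioural data is stored. This is a minimal sketch using Python's standard `hmac` and `hashlib` modules; the function name `pseudonymise` and the key handling are assumptions, and a real deployment would need proper key management and rotation.

```python
import hashlib
import hmac


def pseudonymise(citizen_id: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed SHA-256 digest (HMAC).

    The same id and key always give the same pseudonym, so records can still
    be linked for analysis without storing the raw identifier.
    """
    return hmac.new(secret_key, citizen_id.encode("utf-8"), hashlib.sha256).hexdigest()
```

Anyone holding the key can recompute the mapping, so the key itself needs the same strict segregation as the data it protects.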

A Final Word

Gamification and Nudge Theory aren’t fleeting trends; they’re fundamental methods for redesigning public services to be more effective and engaging. However, their true value lies not in technical application but in the moral rigour of their governance.

To truly harness these systems’ power, public sector leaders need a holistic systems-thinking approach. The failures – eroded agency, amplified bias and risks to behavioural data – aren’t technical glitches but governance gaps. Addressing them demands the moral foresight, cyber resilience, and digital ethics essential for the new public service strategy.

The UK public sector has a chance to lead the world in this area. It can show that influential technologies can be responsibly deployed to foster genuine civic engagement and trust. This isn’t just about compliance optimisation; it’s about building the ethical, future-proof framework needed for a responsive and equitable digital state.

Key Takeaways: Architecture for Integrity

From Nudges to Agency: Moving from "manipulation for compliance" to "structures for empowerment."

Choice Architecture Governance: Auditing defaults and social proof to ensure they serve the citizen, not just a metric.

Systemic Motivation: Connecting small individual actions (like recycling) to collective community impact.

The Right to Fail Gracefully: Designing systems that encourage learning and re-engagement rather than bureaucratic punishment.

Strategic Insights: Beyond the Trick: Governance as a Safeguard

The Paternalism Trap: Ensuring that state influence never erodes individual autonomy or independent choice.

Equity-First Design: Auditing algorithms to prevent "strategic de-prioritisation" of vulnerable groups.

Safeguarding Behavioural Capital: Protecting the "digital profile of the will" with the highest level of cyber resilience.

Radical Transparency: Guaranteeing that citizens can always recognise and opt out of attempts to influence their choices.

Video Summary: Architects of Integrity, Not Designers of Behaviour

The Crisis of Engagement: Why traditional models are failing and how behavioural science acts as a "diagnostic tool" for repair.

The 19th-Century Barrier: Moving from passive curation to active, ethical governmental responsibility.

Visualising Contribution: Using real-time data to show citizens the tangible result of their civic participation.

The Next Evolution: Building a future-proof framework where influential technology fosters genuine trust.

Ethical design isn't just a theoretical exercise—it is a survival mandate for our most critical institutions. Nowhere is this more urgent than in law enforcement. See the framework applied in Policing at an Inflection Point.

The Ethical CTO: Arc 2 Index

- The Speed of Change: Governing the Tempo

- Where Policy Fails: The Governance Gap

- The Strategic Bridge: Closing the Gap

- Breaking the Structural Barriers: Data Silos

- The new OS of Society: Governing the Algorithmic State

- The human cost of exclusion: The Algorithmic Abyss

- Designing for Civic Agency: The Ethical Architect

- Trust in the age of AI: Policing at an Inflection Point

- The zenith of converged security: Designing the Future-Ready force