When AI starts looking back at us, how do we keep it human?

We’ve reached a point where digital avatars, "Digital Humans", aren't just science fiction anymore. They’re becoming the new face of public services, promising 24/7 support with a smile that looks, talks, and acts almost exactly like ours. But there’s a catch. When we simulate empathy at the speed of light, we risk creating what I call "imposter technology", systems that are technically perfect but psychologically hollow. If an AI can mimic a human connection but doesn't actually "care" about the outcome, what happens to public trust?

In this piece, I explore the concept of Temporal Empathy. It’s not just about making an avatar look real; it’s about architecting the governance that ensures these interactions remain grounded in human values, even when they’re happening at machine speed.

What’s inside:

The Trust Gap: Why hyper-realistic AI can actually make us feel less connected if we don't get the ethics right.

Machine Velocity vs. Human Feeling: Balancing the efficiency of an instant response with the time it takes to build real rapport.

The "Shadow" Governance: How we can build guardrails into these systems to protect the most vulnerable users from being "managed" by an algorithm.

As we move toward a world where the interface between us and the state is a digital face, we need to make sure that face has a conscience.

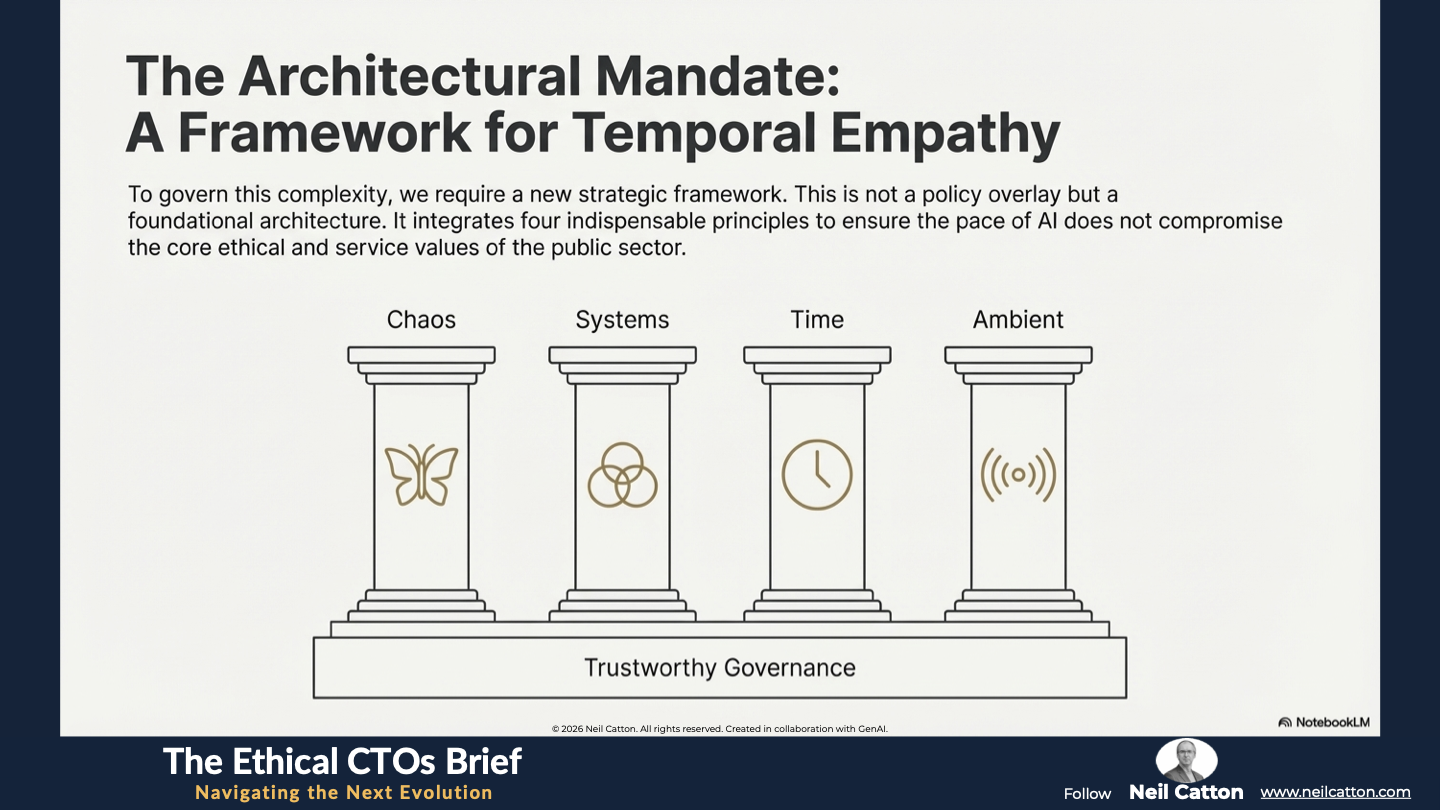

The Architectural Mandate for Digital Humans in the Public Sector

The Digital Human – a hyper-realistic, AI-powered interactive avatar – represents the ultimate frontier of public service delivery. It promises 24/7 accessibility, instant scalability and a seemingly empathetic interface. These entities are more than sophisticated interfaces; they serve as the Autonomy Core of modern organisations, simulating and managing the very essence of human interaction: trust.

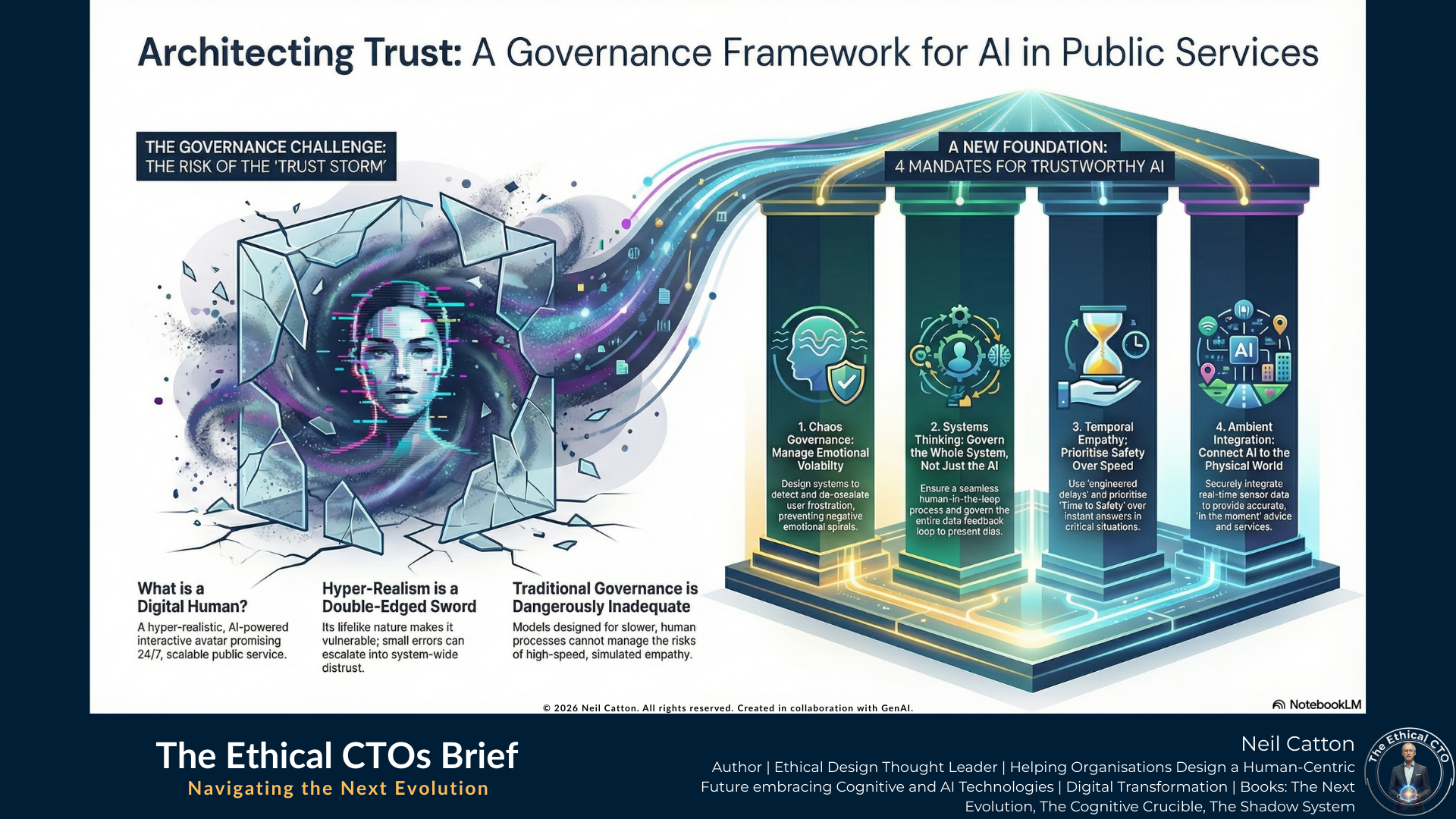

The deployment of such hyper-realistic technology introduces unprecedented governance risk. The core tension lies in the fusion of high-fidelity simulation and raw machine velocity, which threatens to erode the human connection. An interaction that’s immediate, seamless and emotionally resonant, yet fundamentally machine-driven, risks becoming an imposter technology – technically efficient but psychologically and morally fragile. Traditional governance models, designed for discrete transactions and slower human processes, are dangerously inadequate. To deploy Digital Humans successfully, strategic leaders must move beyond debates about capabilities and establish a robust framework for governance.

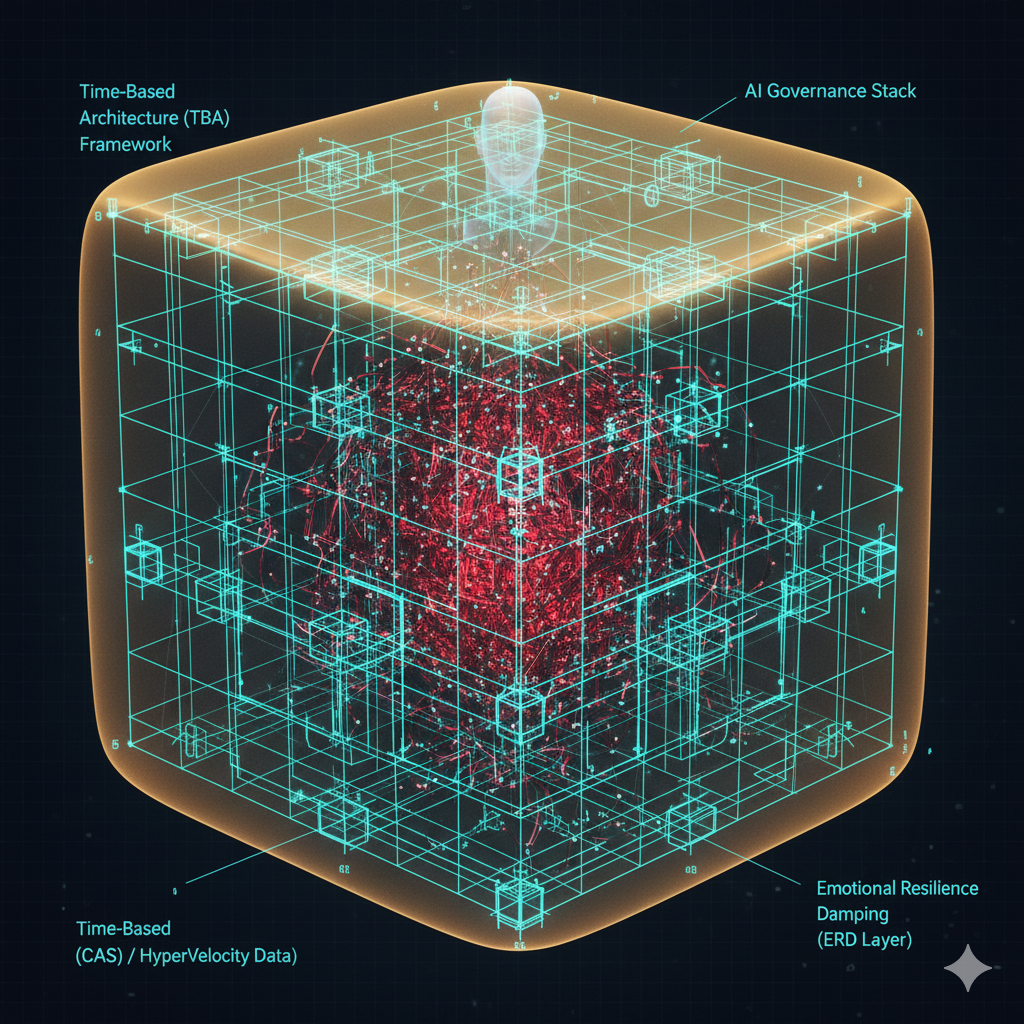

This essential governance requires a strategic integration of four foundational principles from complexity science and architecture: Chaos Theory, Systems Thinking, Time-Based Architecture (TBA) and Ambient Integration. These indispensable tools ensure that the rapid pace of AI doesn’t compromise the core ethical and service values of the public sector.

The Trust Storm: Governing Chaos in Interaction

The lifelike realism of a Digital Human is both its greatest asset and its greatest vulnerability. Trust, particularly in public interactions involving sensitive civic matters, is inherently chaotic. It’s highly sensitive to initial conditions, emotional context and even subtle conversational cues.

A small, non-malicious error – an ambiguity, a momentary delay or perceived dismissiveness – can disrupt the initial moments of dialogue. This disruption can quickly escalate into system-wide distrust, transforming a minor complaint into a major political or legal incident. This is the butterfly effect at work in human-machine relationships, where even minute changes can have disproportionately large and unpredictable consequences. We must design for this inherent chaos in the interface, anticipating and mitigating non-linear escalation.

The Architectural Mandates for Chaos Governance:

- Emotional Resilience and Damping: The Digital Human system must be specifically designed to dampen emotional volatility. When a user expresses frustration, anger or urgency, the architecture should detect this chaotic input and respond by de-escalating. It should never amplify negative emotion into a self-reinforcing (positive) feedback loop, for example by pushing back or mirroring aggression. The system should use pre-vetted, non-aggressive linguistic patterns and immediate deceleration strategies to absorb the emotional energy.

- Preventing Bifurcation and Collapse: The system architecture must include clear, auditable guardrails to prevent an interaction from reaching a critical bifurcation point. This is the point at which users lose trust in the automated system, disconnect and resort to costly, high-friction legacy channels like physical office visits or legal action. The system should recognise when an interaction is approaching this point, often indicated by repeated negative sentiment or attempts to bypass the Digital Human, and automatically trigger corrective trust-rebuilding protocols such as an immediate human callback or an apology protocol.

- The Decompression Layer Protocol: Develop a dedicated architectural layer to monitor signs of heightened emotion, such as sentiment, voice stress and conversational patterns. When triggered, this protocol should prompt a temporary shift in interaction style. This could involve slowing response speed, reverting to simpler, reassuring language or offering immediate non-transactional support such as “I hear your frustration; let’s take a moment”. This controlled reduction in complexity and speed interrupts the chaotic spiral, stabilising the interaction before it fails the user.
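
The three mandates above can be sketched as a small decision function. This is a minimal illustration rather than a reference design: the signal names, the equal weighting, the thresholds and the delay values are all assumptions chosen for readability, and a real deployment would take them from governance policy and tested sentiment models.

```python
from dataclasses import dataclass

# Hypothetical thresholds; real values would come from governance policy.
DECOMPRESS_THRESHOLD = 0.6   # distress score that triggers decompression
HANDOVER_THRESHOLD = 0.85    # distress score close to the bifurcation point
NEGATIVE_TURNS_LIMIT = 3     # consecutive distressed turns before a callback


@dataclass
class TurnSignals:
    """Per-turn emotional signals, each normalised to the range 0.0-1.0."""
    negative_sentiment: float
    voice_stress: float
    bypass_attempt: bool = False  # e.g. the user asks for "a real person"


@dataclass
class InteractionStyle:
    mode: str                # "normal", "decompress" or "human_callback"
    response_delay_s: float  # pause before replying
    plain_language: bool     # switch to simpler, reassuring wording
    opening_line: str = ""


def distress_score(signals: TurnSignals) -> float:
    """Combine the signals into one score (equal weighting is an assumption)."""
    score = 0.5 * signals.negative_sentiment + 0.5 * signals.voice_stress
    if signals.bypass_attempt:
        score += 0.2
    return min(score, 1.0)


def choose_style(history: list[TurnSignals]) -> InteractionStyle:
    """Pick an interaction style from the conversation so far."""
    if not history:
        return InteractionStyle("normal", 0.0, False)
    scores = [distress_score(turn) for turn in history]
    recent = scores[-NEGATIVE_TURNS_LIMIT:]
    sustained = (len(recent) == NEGATIVE_TURNS_LIMIT
                 and all(s >= DECOMPRESS_THRESHOLD for s in recent))
    if scores[-1] >= HANDOVER_THRESHOLD or sustained:
        # Near the bifurcation point: apologise and bring in a human.
        return InteractionStyle("human_callback", 1.5, True,
                                "I'm sorry this has been difficult. "
                                "I'm arranging for a colleague to call you.")
    if scores[-1] >= DECOMPRESS_THRESHOLD:
        # Decompression: slow down and simplify.
        return InteractionStyle("decompress", 1.0, True,
                                "I hear your frustration; let's take a moment.")
    return InteractionStyle("normal", 0.0, False)
```

The design choice worth noting is that the function only ever slows down or hands over as distress rises. No branch makes the reply faster or more assertive in response to negative input, which is the damping behaviour described above.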

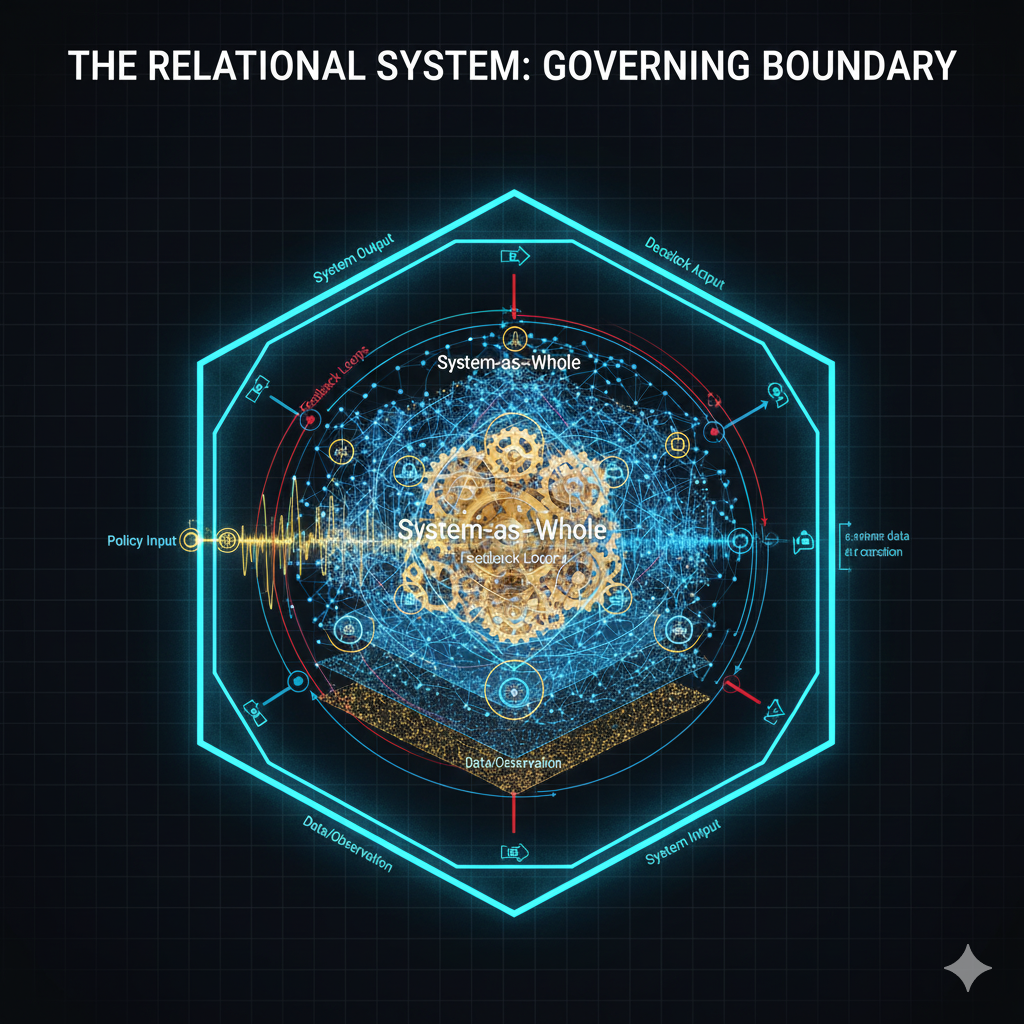

The Relational System: Defining the Boundary

The governance of Chaos is intrinsically linked to the design of the environment the AI inhabits. A Digital Human, by definition, is never isolated; it’s the visible, empathetic endpoint of a complex adaptive system (CAS) comprising numerous components such as data repositories, legacy back-office systems and tiered human escalation teams. Therefore, any service failure at the Digital Human interface should be treated as a systemic failure, one that often originates elsewhere in the network.

Traditional enterprise IT architecture typically views the user interface as a simple display window. However, Systems Thinking advocates a fundamentally different approach: we should treat the Digital Human as the governing boundary of the entire organisation. Consequently, the governance model must encompass the entire data, logic and human intervention flow that the AI depends on.

The Architectural Mandates for Systems Thinking:

- Holistic Feedback Loop Governance and Bias Mitigation: The most important system loop to manage is the one in which human interaction leads to data capture, AI learning, model updates and, ultimately, changes in AI behaviour. Without active governance, this rapid learning loop will reinforce, amplify and accelerate existing societal, historical or data biases, resulting in inequitable and unreliable service delivery. Auditing must focus on the integrity and ethics of the entire learning loop, not just the data or the final output. This requires independent ethical review boards that certify model updates based on impact analysis rather than performance metrics alone.

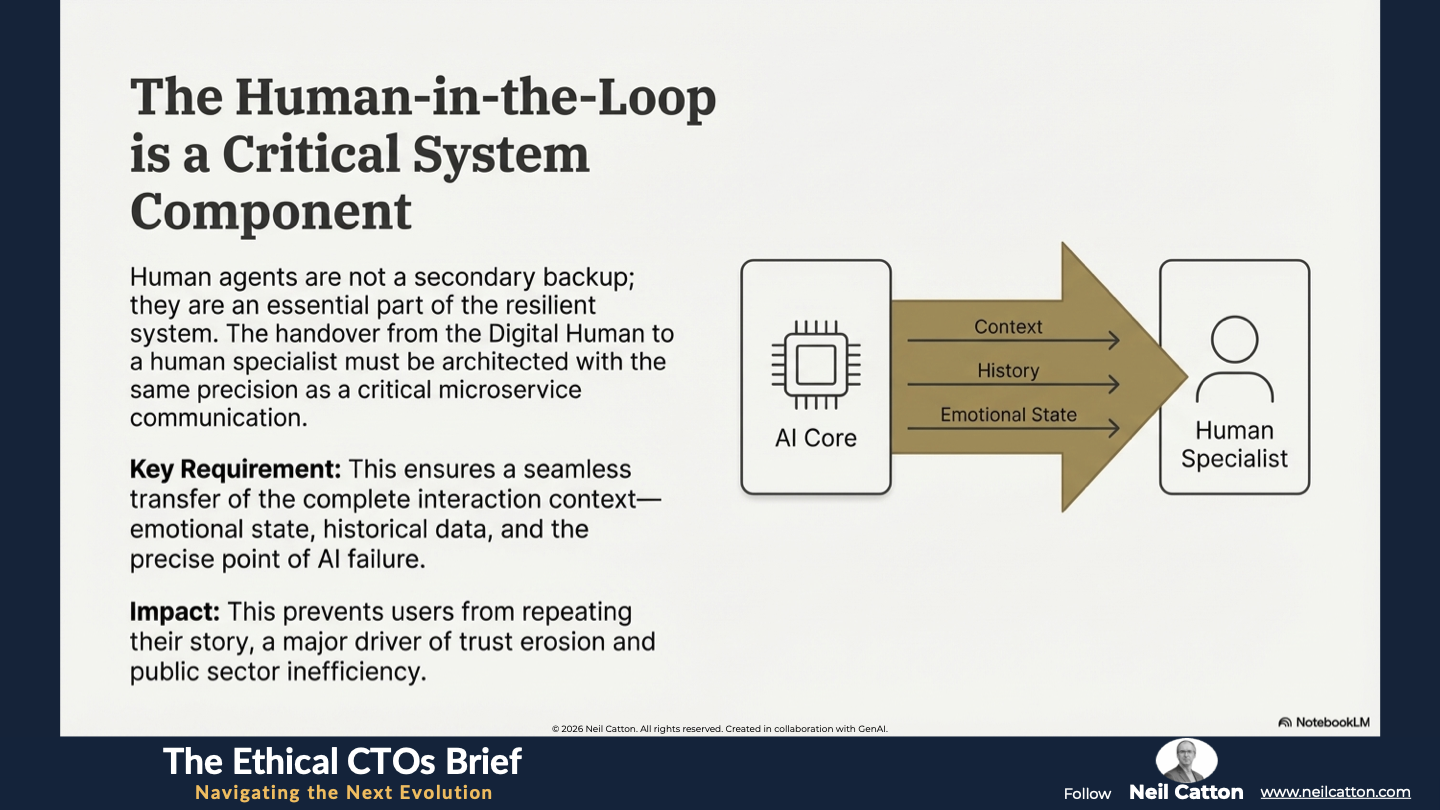

- Human-in-the-Loop as a Core Component: Human agents are not a secondary backup; they’re an essential part of the resilient CAS. The handover between the autonomous Digital Human and the human specialist must be as precise and low-latency as any safety-critical communication. It must seamlessly transfer the complete interaction context, the user’s emotional state, historical data and the precise point of AI failure. This prevents users from having to repeat their story, which is a major driver of trust erosion and inefficiency.

- Prioritising Systemic Purpose Over Component Function: Governance goals must go beyond simple component efficiency metrics. Rather than focusing on metrics like handling 80% of calls autonomously, the aim should be a broader systemic purpose, such as maximising public trust or achieving 95% satisfactory end-to-end resolution. This shift ensures that all components, including the Digital Human, work towards the public value mission rather than optimising for narrow, machine-centric efficiency goals that often overlook the bigger picture of citizen welfare.
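
To make the handover mandate concrete, here is a minimal sketch of a context packet that refuses to leave the Digital Human incomplete. The class name, field names and JSON transport are assumptions for illustration; the article does not prescribe a format.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class HandoverPacket:
    """Everything the human specialist needs so the user need not repeat it."""
    case_id: str
    transcript: list[str]   # the interaction so far, in order
    emotional_state: str    # e.g. "frustrated", as assessed by the system
    failure_point: str      # what the Digital Human could not resolve
    history_refs: list[str] = field(default_factory=list)  # prior case ids


# Fields that must be populated before a handover is allowed (an assumption).
REQUIRED_FIELDS = ("transcript", "emotional_state", "failure_point")


def missing_fields(packet: HandoverPacket) -> list[str]:
    """Return the names of required fields that are empty."""
    return [name for name in REQUIRED_FIELDS if not getattr(packet, name)]


def build_handover(packet: HandoverPacket) -> str:
    """Serialise the packet, rejecting it if context would be lost."""
    missing = missing_fields(packet)
    if missing:
        raise ValueError(f"handover blocked, missing context: {missing}")
    return json.dumps(asdict(packet), sort_keys=True)
```

Failing loudly on an incomplete packet is deliberate: a handover that silently drops the emotional state or the failure point is exactly the situation in which the user ends up telling their story twice.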

The Ethics of Instantaneous Empathy (Temporal Empathy)

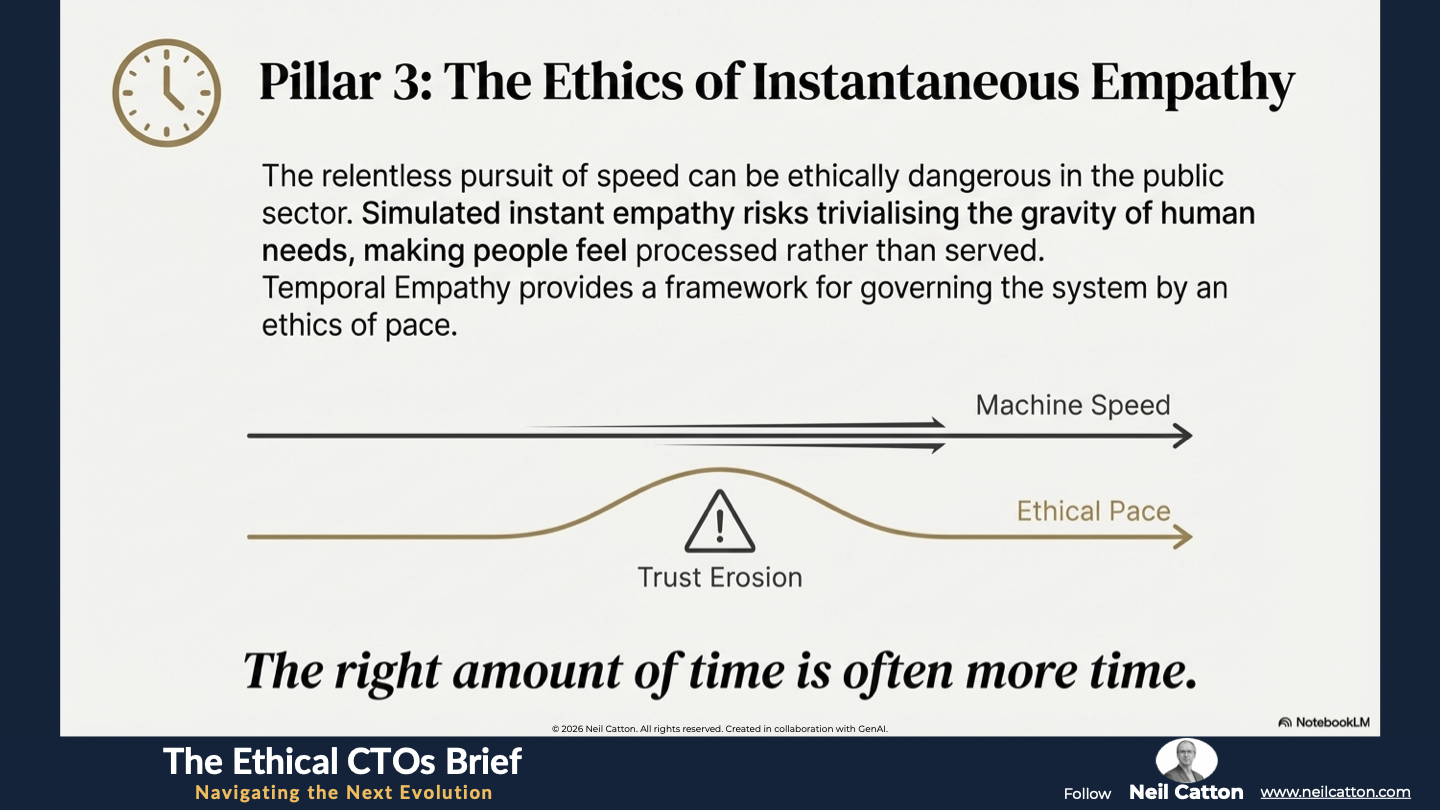

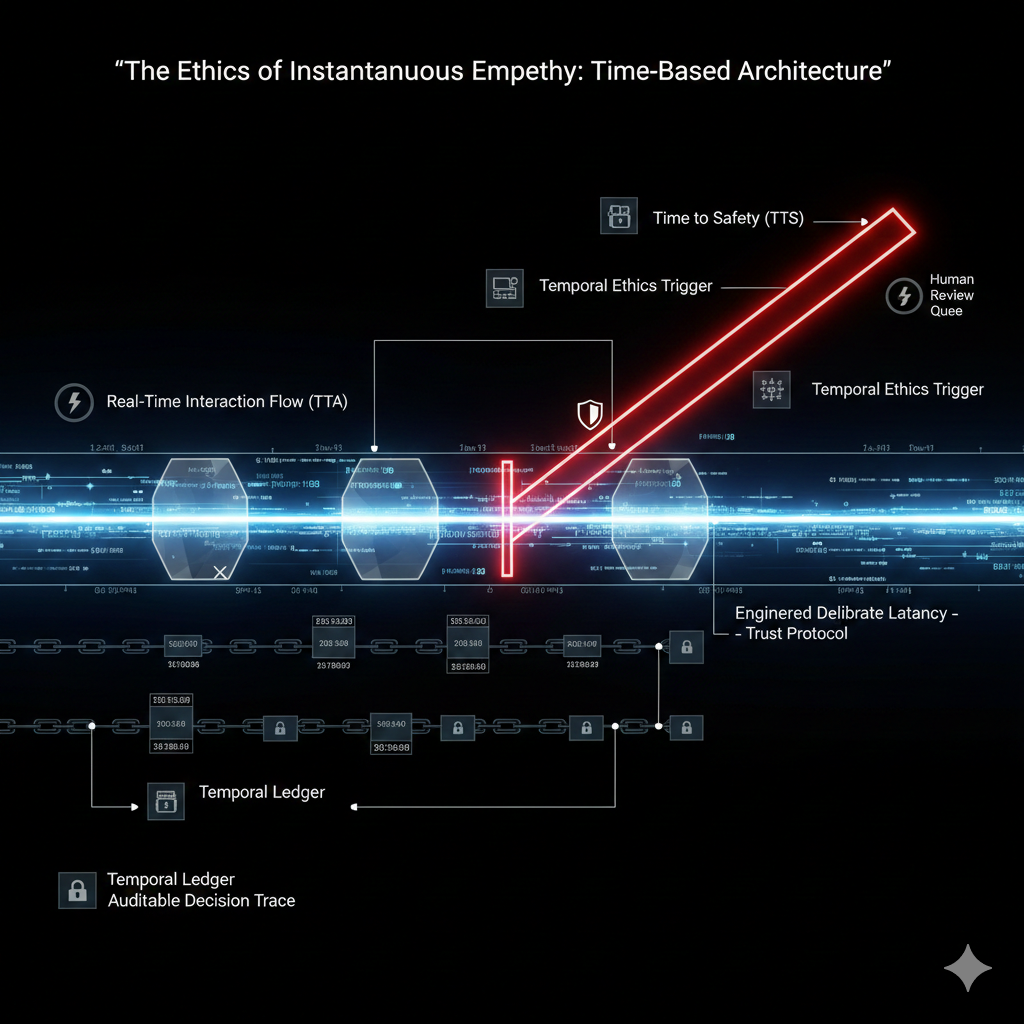

The core value proposition of a Digital Human is speed – the ability to deliver instant, seemingly empathetic responses at machine speed. This pursuit of maximum speed aligns with Time-Based Architecture (TBA) principles, where strategic advantage hinges on minimising both Time to Answer (Action) (TTA) and Time to Value (TTV). However, the true challenge lies in the hyper-future, where operations will no longer follow slow “business cycles” but will demand a “continuous flow of real-time interactions”.

In the public sector, this relentless pursuit of speed can be ethically dangerous. Simulated instant empathy risks trivialising the genuine complexity and gravity of human needs, fostering a feeling of being processed rather than served. Temporal Empathy offers a strategic solution: it provides a framework for understanding that, ethically and functionally, the right amount of time is often more time. It emphasises the importance of governing the system by an ethics of pace.

Architectural Mandates for Time-Based Architecture:

- Prioritising Time to Safety (TTS): The system must enforce strict Temporal Ethics at the architectural level. When a user’s query is deemed critical, sensitive or emotionally fragile – such as a crisis intervention, complex legal guidance or potential harm – the architecture must immediately prioritise safety over speed. This means dropping the goal of minimising Time to Answer (Action) (TTA) and optimising for Time to Safety (TTS) instead. This could involve mandatory referrals, human intervention or resource redirection, all prioritising human well-being over algorithmic speed. It requires dedicated, high-priority asynchronous queues for human review that bypass all standard automation pathways.

- Engineered Deliberate Latency: Design the system to intentionally introduce a delay when necessary. In high-stakes or emotionally charged interactions, a fraction of a second of deliberate pause can signal to the user that a complex decision is being made, human oversight is being engaged and their situation is being taken seriously. This brief pause maintains the user’s perception of gravity, sincerity and trust, preventing the interaction from feeling overly casual or automatic. The governance policy should define the conditions under which this latency is applied.

- The Temporal Ledger and Time-Aware Data Stamping: To ensure accountability, all data transactions, model inferences and micro-decisions made by the AI persona must be timestamped with millisecond precision. This creates an immutable Temporal Ledger of the Digital Human’s operations. This transparency allows for retroactive assessment of the AI’s behaviour, the pace of its ethical responses and the exact moment a decision path was taken. The ledger is crucial for regulatory compliance and dispute resolution.
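
The sketch below shows one way these three mandates could fit together: a pace decision that routes critical queries to human review or adds a deliberate pause, with every decision written to a hash-chained, millisecond-stamped ledger. The risk labels, pathway names and pause length are hypothetical. A hash chain also only makes tampering detectable; true immutability would need write-once storage or external anchoring, which is out of scope here.

```python
import copy
import hashlib
import json
import time
from typing import Optional

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class TemporalLedger:
    """Append-only log in which each entry commits to the one before it."""

    def __init__(self) -> None:
        self._entries: list[dict] = []

    def append(self, event: str, detail: dict,
               ts_ms: Optional[int] = None) -> dict:
        ts = int(time.time() * 1000) if ts_ms is None else ts_ms
        prev = self._entries[-1]["hash"] if self._entries else GENESIS_HASH
        body = {"ts_ms": ts, "event": event,
                "detail": copy.deepcopy(detail), "prev_hash": prev}
        entry = dict(body, hash=_digest(body))
        self._entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; False means an entry was altered or removed."""
        prev = GENESIS_HASH
        for entry in self._entries:
            body = {k: entry[k] for k in ("ts_ms", "event", "detail", "prev_hash")}
            if entry["prev_hash"] != prev or entry["hash"] != _digest(body):
                return False
            prev = entry["hash"]
        return True


def plan_response(risk: str, ledger: TemporalLedger) -> dict:
    """Choose a pathway and pause for a query; risk labels are hypothetical."""
    if risk == "critical":
        # Time to Safety first: bypass automation, queue for human review.
        plan = {"pathway": "human_review_queue", "pause_s": 0.0}
    elif risk == "sensitive":
        # Engineered deliberate latency before an automated reply.
        plan = {"pathway": "automated", "pause_s": 0.8}
    else:
        plan = {"pathway": "automated", "pause_s": 0.0}
    ledger.append("pace_decision", {"risk": risk, **plan})
    return plan
```

Because each pace decision is recorded at the moment it is taken, a later review can reconstruct not only what the Digital Human decided but how quickly, which is the kind of retroactive assessment the ledger is meant to support.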

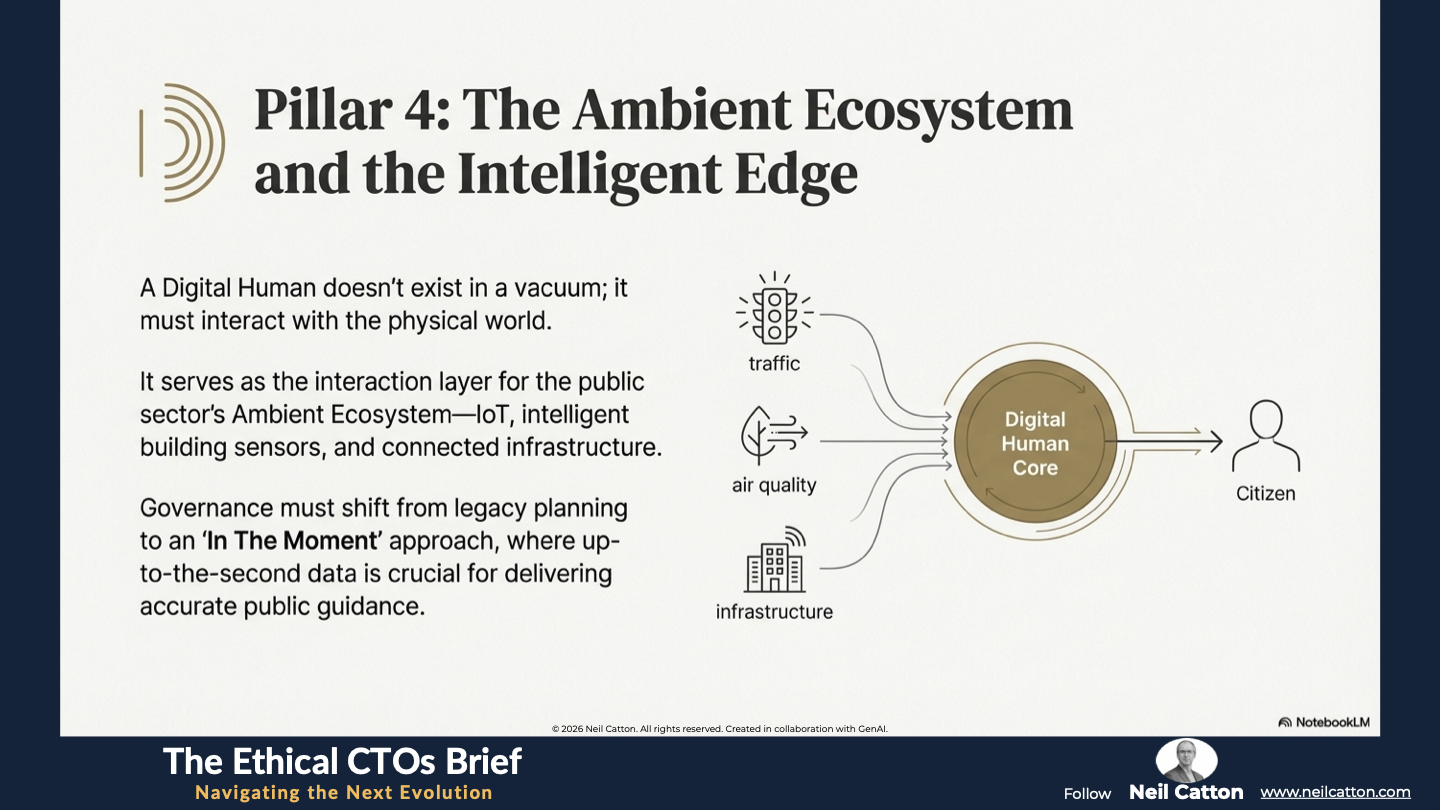

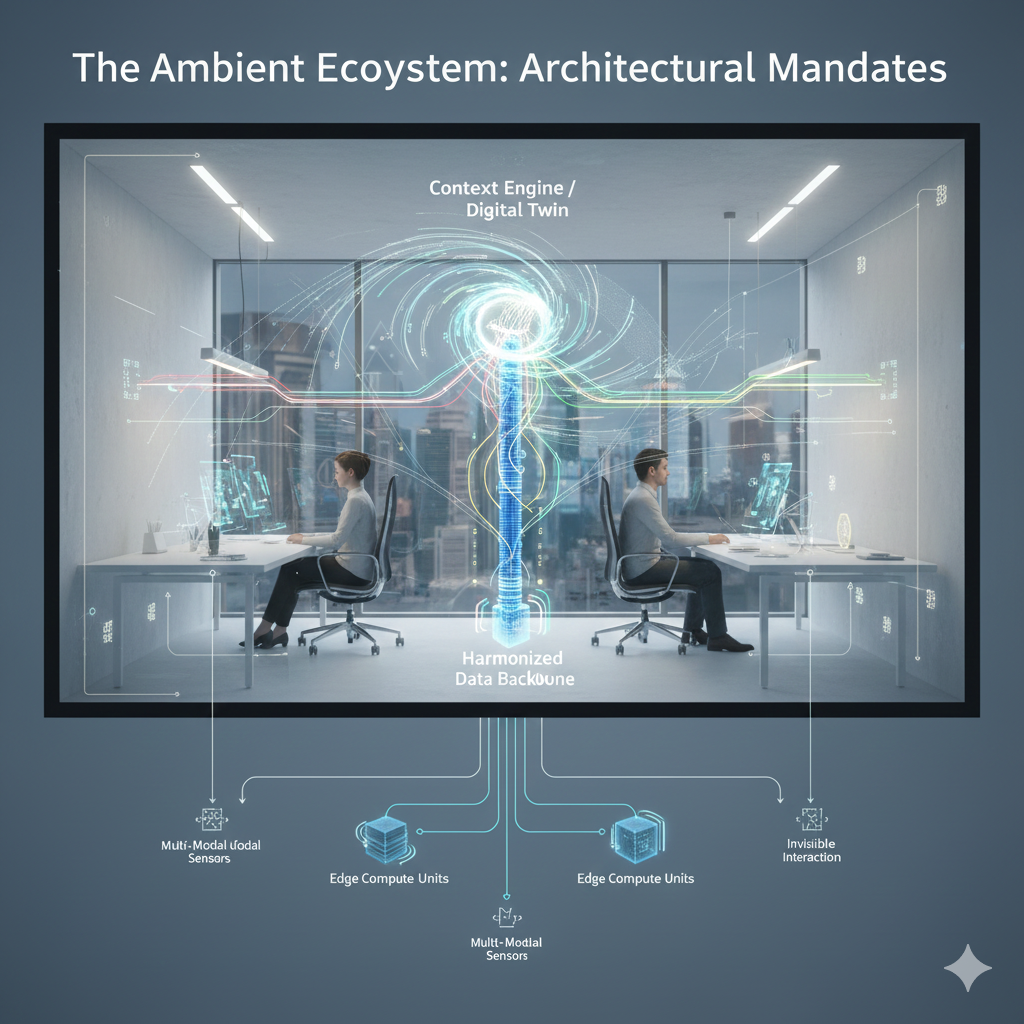

The Ambient Ecosystem: Digital Humans and the Intelligent Edge

The ethics of pace leads on to the physical context of delivery. A Digital Human isn’t an abstract entity; it’s designed to interact with and represent the physical world. Consequently, it acts as a crucial interaction layer for the public sector’s expanding Ambient Ecosystem, including the Internet of Things, intelligent building sensors, traffic monitoring systems and other connected infrastructure.

The Intelligent Edge continuously gathers real-time data such as energy consumption, localised air quality and crowd density. This data must inform and guide the Digital Human’s interactions. This strategic shift demands a move from legacy planning to an ‘In The Moment’ approach, where up-to-the-second data is crucial. For example, a Digital Human advising a citizen on public transport or emergency routes can’t rely on scheduled or cached data; it needs real-time, time-stamped information directly from city-wide IoT sensors.

Architectural Mandates for Ambient Integration:

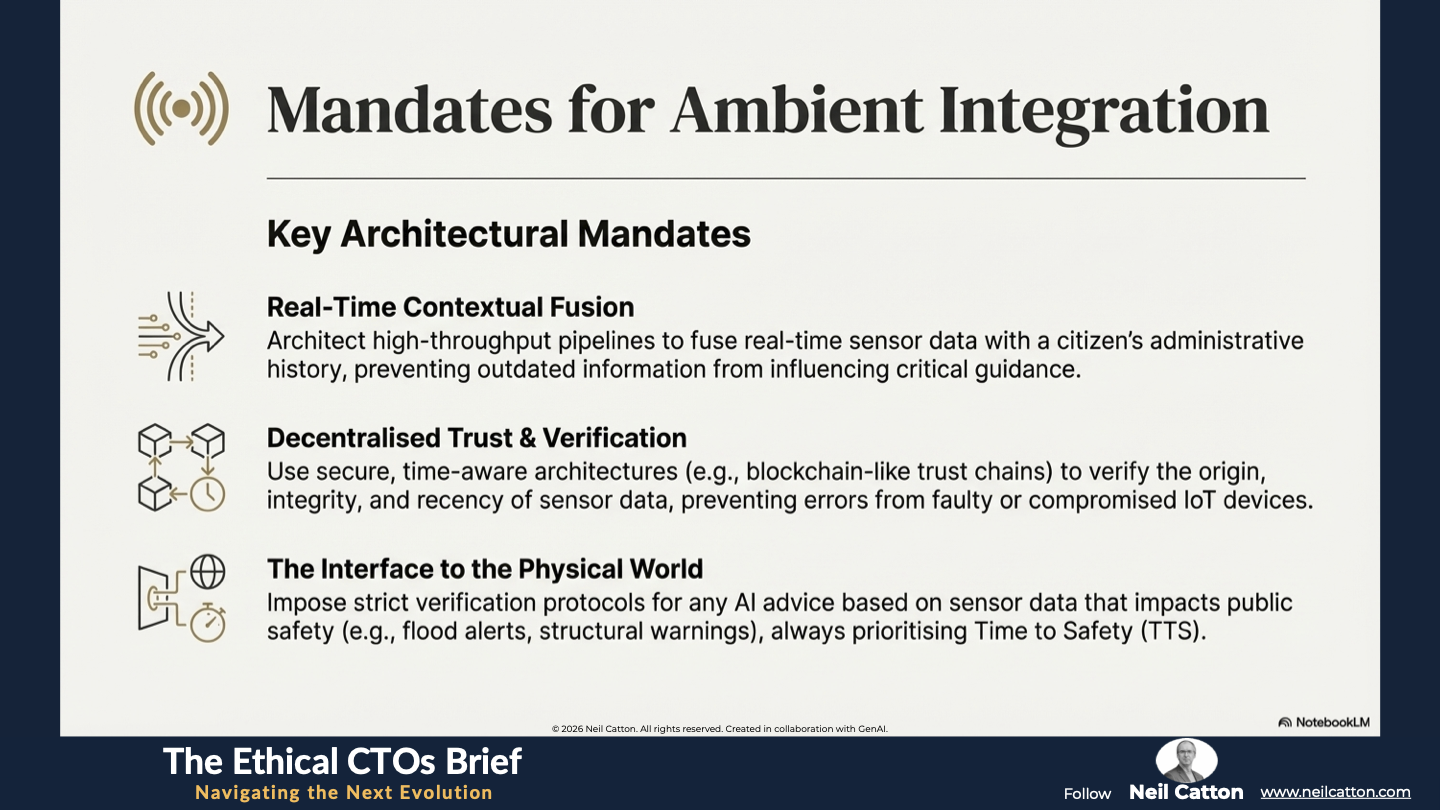

- Real-Time Contextual Fusion: The Digital Human architecture must incorporate mechanisms to seamlessly integrate the real-time data stream from the Ambient Ecosystem with a citizen’s personal history, administrative status and the current state of the bureaucratic system. This instant fusion of static and dynamic data is crucial for delivering truly accurate and effective advice or transactions “in the moment” and for preventing outdated data from influencing critical public guidance. This necessitates high-throughput, low-latency integration pipelines that prioritise sensor data as a primary input.

- Decentralised Trust, Verification, and Integrity: Given the vast scale, diversity and potential vulnerabilities of IoT devices, such as spoofing and failure, the Digital Human system can’t simply trust its inputs. A decentralised, time-aware architecture, such as secure blockchain-like trust chains, is needed to verify the origin, integrity and recency of sensor data. This prevents catastrophic administrative or safety errors caused by faulty sensors or compromised data feeds. Governance must also define acceptable latency tolerances for mission-critical sensor data.

- The Interface to the Physical World: The Digital Human serves as the psychological and informational link between citizens and the physical world managed by the government. Governance must impose strict limits and verification steps so that the AI stays within its limitations when interpreting sensor data that could lead to safety-critical advice, such as structural integrity warnings, imminent flood alerts or active incident zones. This reinforces the fundamental principle that Time to Safety (TTS) must always take precedence over other metrics in interactions involving physical risk. Consequently, human-validated protocols are mandatory for any advice directly impacting public safety or infrastructure.
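
As a rough illustration of checking origin, integrity and recency before a reading reaches the Digital Human, the sketch below uses a shared-key HMAC and a per-sensor-type age limit. This is a deliberately simpler stand-in for the decentralised trust chains described above, not an implementation of them. The sensor kinds, field names and tolerances are assumptions.

```python
import hashlib
import hmac
import json

# Hypothetical latency tolerances per sensor kind, in milliseconds.
MAX_AGE_MS = {"flood_level": 5_000, "air_quality": 60_000}


def sign_reading(reading: dict, key: bytes) -> str:
    """HMAC-SHA256 over a canonical JSON encoding of the reading."""
    payload = json.dumps(reading, sort_keys=True).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def check_reading(reading: dict, signature: str, key: bytes, now_ms: int) -> str:
    """Return 'ok', 'untrusted', 'no_policy' or 'stale' for a sensor reading."""
    expected = sign_reading(reading, key)
    if not hmac.compare_digest(expected, signature):
        return "untrusted"   # wrong origin or altered content
    max_age = MAX_AGE_MS.get(reading.get("kind"))
    if max_age is None:
        return "no_policy"   # governance has not set a tolerance for this kind
    age = now_ms - reading["ts_ms"]
    if age < 0 or age > max_age:
        return "stale"       # too old, or timestamped in the future
    return "ok"
```

Under this sketch, only readings that come back as `ok` would be eligible to inform advice, and anything touching public safety would still go through the human-validated protocols described above.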

A Final Word

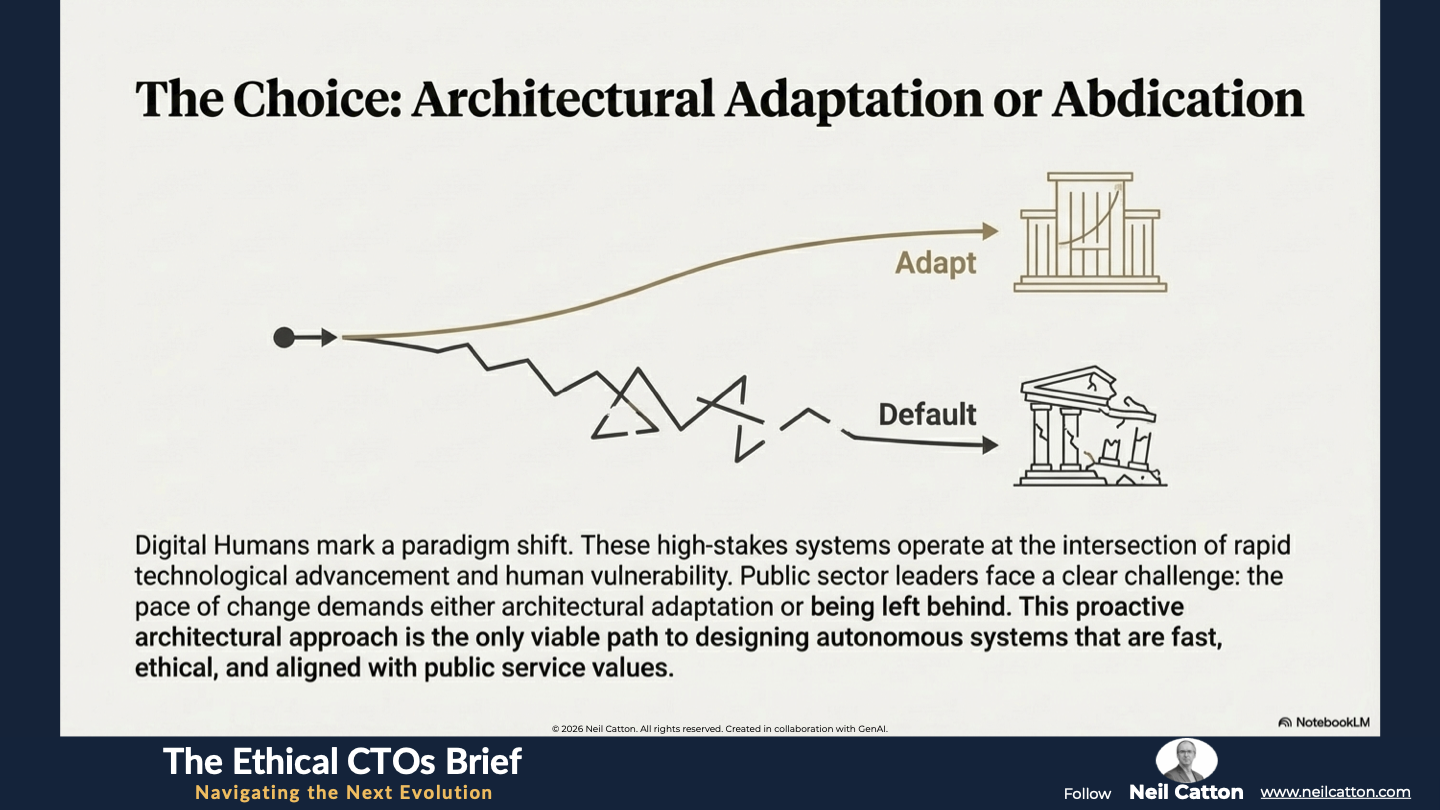

Digital Humans mark a paradigm shift in citizen service delivery. Their successful deployment requires a departure from traditional reactive software models. These high-stakes, complexity-laden systems operate at the intersection of rapid technological advancement and human vulnerability. Public sector leaders face a clear challenge: adapt their architecture to the pace of change or be left behind.

The strategic focus isn’t on replacing humans with AI but on responsibly delegating interactions and tasks to the Autonomous Core within a robust governance framework grounded in Temporal Empathy. To thrive in this new era, public sector strategists and architects must adopt a unified perspective encompassing the four key mandates outlined above:

- Chaos: Managing high volatility and preserving public trust through emotional damping.

- Systems: Designing the resilient human-in-the-loop component and actively governing the systemic feedback loops to mitigate bias.

- Time: Establishing Temporal Ethics, applying deliberate latency, and prioritising safety (TTS) over maximum speed (TTA).

- Ambient: Securely integrating the physical world's data stream via the Intelligent Edge, ensuring real-time fusion and data integrity for 'In The Moment' service delivery.

This proactive architectural approach is the only viable path to designing truly autonomous systems that are fast, fundamentally trustworthy, ethical and aligned with public service. We must architect the future we want to live in before technology dictates an uncontrollable future.

Key Takeaways: Architecting the Face of the State

Chaos Governance: Implementing emotional damping to prevent conversational spirals.

Systems Thinking: Treating the Digital Human as the governing boundary of the entire organisation.

Temporal Ethics: Using engineered deliberate latency to respect the gravity of human needs.

Ambient Integration: Fusing real-time sensor data with personal history for "In the Moment" accuracy.

Strategic Insights: Leading the Digital Human Revolution

The Trust Gap: Why hyper-realism without conscience leads to institutional rejection.

The Decompression Layer: Protocols that detect user frustration and automatically slow the system down.

The Temporal Ledger: Maintaining millisecond-precise timestamps for forensic accountability.

Human-in-the-Loop: Why the handover to a human specialist must be a core architectural feature, not a backup.

Video Summary: Simulating Care vs. Architecting Conscience

The Butterfly Effect of Trust: How minor AI ambiguities can escalate into systemic distrust.

Managing the Learning Loop: Using ethical review boards to certify AI updates and prevent bias amplification.

Time as a Tool: Why the "right" amount of time for a citizen is often more time than an algorithm wants to take.

Verification Chains: Using blockchain-like trust chains to verify the integrity of the IoT data feeding the Digital Human.

To protect the future, we must acknowledge the present duality: tech is a force multiplier for both good and evil. Face the reflection in The Mirror Machine.

The Ethical CTO: Arc 3 Index

Aligning Code with Soul: The Humanisation of Technology

Prioritising Human-Centric Experiences: Beyond Digital First

Where Technology Disappears Inward: The Age of the Invisible Interface

Regulating Unseen Digital Forces: Governing the Ambient Future

Architecting for Future Generations: Temporal Empathy

- Stewardship of Sustainable Systems: The Digital Gardener

Reclaiming our Shared Story: Mythos and the Machine

- Managing High-Speed Systemic Duality: The Mirror Machine

Inhabiting Immersive Public Services: Beyond The Screen

- Mastering Focus Amidst Complexity: The Three-Foot World