We are building tools that can save a life in one breath and destabilise a nation in the next.

Imagine a child in a remote clinic being saved by an AI-assisted specialist half a world away. Now, imagine a deepfake video so convincing it triggers a stock market crash or a civic riot before a human can even verify it's fake. These aren't two different futures; they are the same reality, happening simultaneously. This is what I call The Mirror Machine.

Every piece of technology we build today is a force multiplier. It reflects our greatest ambitions and our deepest flaws back at us, but it does so at a scale and speed that we aren't biologically or legally prepared to handle. We are currently caught in a paradox: the tools that offer us the most potential for good are the exact same tools that create our most systemic risks.

In this article, we dive into:

The Paradox of Progress: Why the "benefits" of AI and hyper-connectivity are inseparable from their dangers.

The Speed Gap: How machine-speed threats have rendered our human-speed security models obsolete.

Governing the Duality: Why we need a fundamental shift in how we architect systems, moving from "building features" to "managing forces."

We've spent decades trying to make technology more powerful. Now, we have to figure out how to keep it from reflecting only our darkest impulses.

The Dual Reflection: Every Tool is a Force Multiplier for Good & Bad

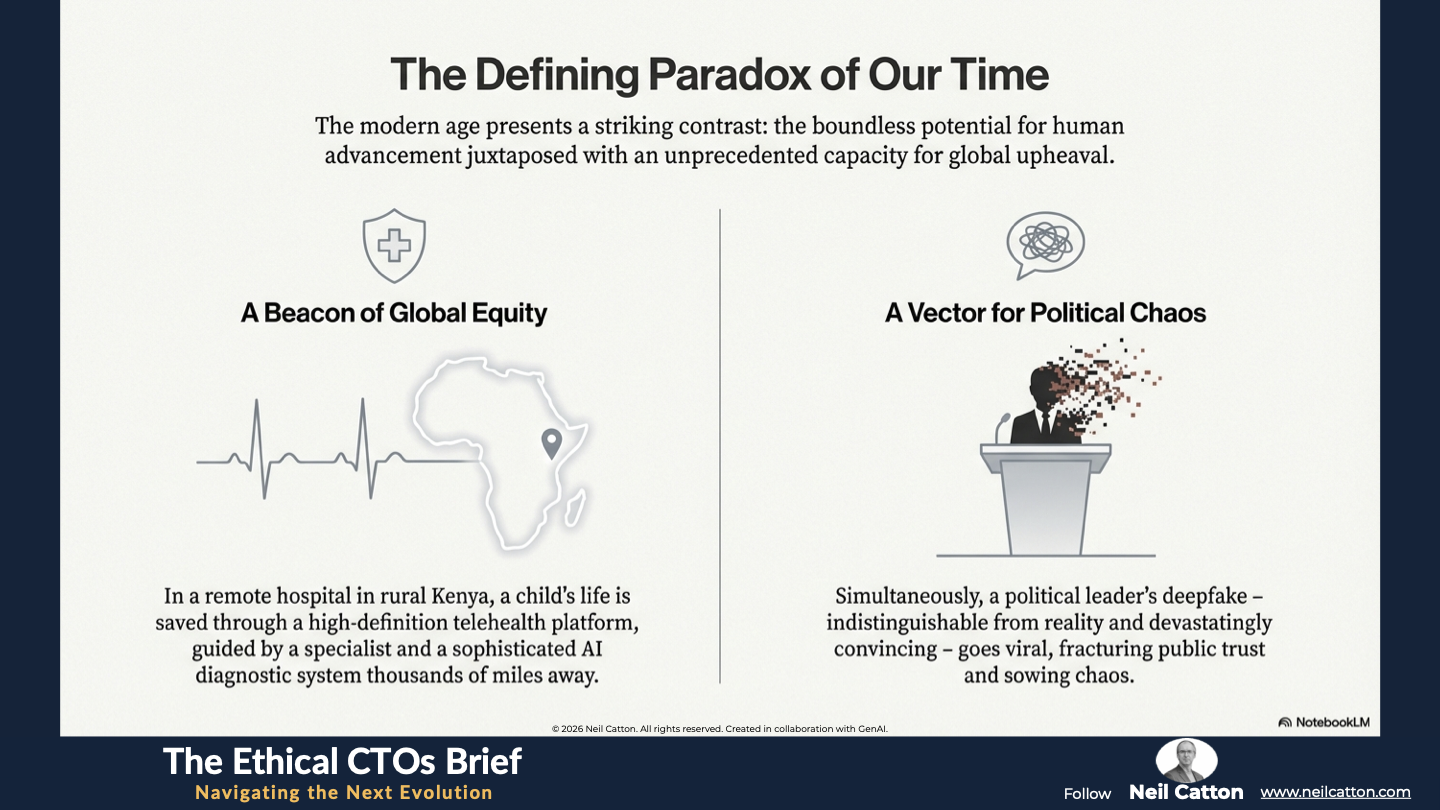

The modern age presents a striking contrast: the boundless potential for human advancement juxtaposed with an unprecedented capacity for global upheaval. Consider the two defining scenes of our present. In a remote hospital in rural Kenya, a child’s life is swiftly saved not by a local doctor but through a high-definition telehealth platform. A specialist, thousands of miles away, collaborates with an on-site nurse, guided by a sophisticated AI diagnostic system. This exemplifies technology as a beacon of global equity and life-saving collaboration. Simultaneously, across vast social networks, a deepfake of a political leader – indistinguishable from reality, devastatingly convincing, and generated at machine speed – goes viral. Coordinated bot networks amplify it, fracturing public trust and sowing political chaos.

This defining tension is the paradox of the twenty-first century.

Technology has never held more potential to solve humanity’s grand challenges, but it also holds more concentrated power to unravel our shared social and institutional fabrics. Every single innovation, from the smallest IoT sensor to the largest language model, acts as a Mirror Machine, reflecting back at us the very best and worst of our collective intentions and capabilities. Each one exponentially increases our capacity for human collaboration, creativity, and progress, and each one equally amplifies the vectors for human malice, manipulation, and control.

For too long, corporate and public leaders have treated the dark reflection as a simple risk inventory, a checklist of potential “bad uses” like cybercrime or data leaks. This view is dangerously outdated. The true, structural threat lies not in the mere existence of these tools, but in the systemic conditions they create. These conditions rapidly erode the stability of institutions, shatter public trust, and challenge the core principles upon which modern organisations and governance are built.

To responsibly harness the immense promise of the Mirror Machine and effectively mitigate its peril, leaders must pivot their focus. They must move beyond managing individual technological symptoms and structurally address the four fundamental fault lines that AI and ambient technology have opened beneath our most critical, legacy institutions.

The Grand Paradox: The Unbreakable Duality

The foundational promise of the internet and digital infrastructure was rooted in Enlightenment ideals of efficiency, connection, and democratisation. This vision has shone brightly across nearly every sector: accelerating scientific breakthroughs and global research collaboration, delivering world-class education and sophisticated preventative healthcare to previously underserved populations, and empowering human ingenuity. This bright reflection is undeniable and essential, and it is a testament to the transformative power of technology.

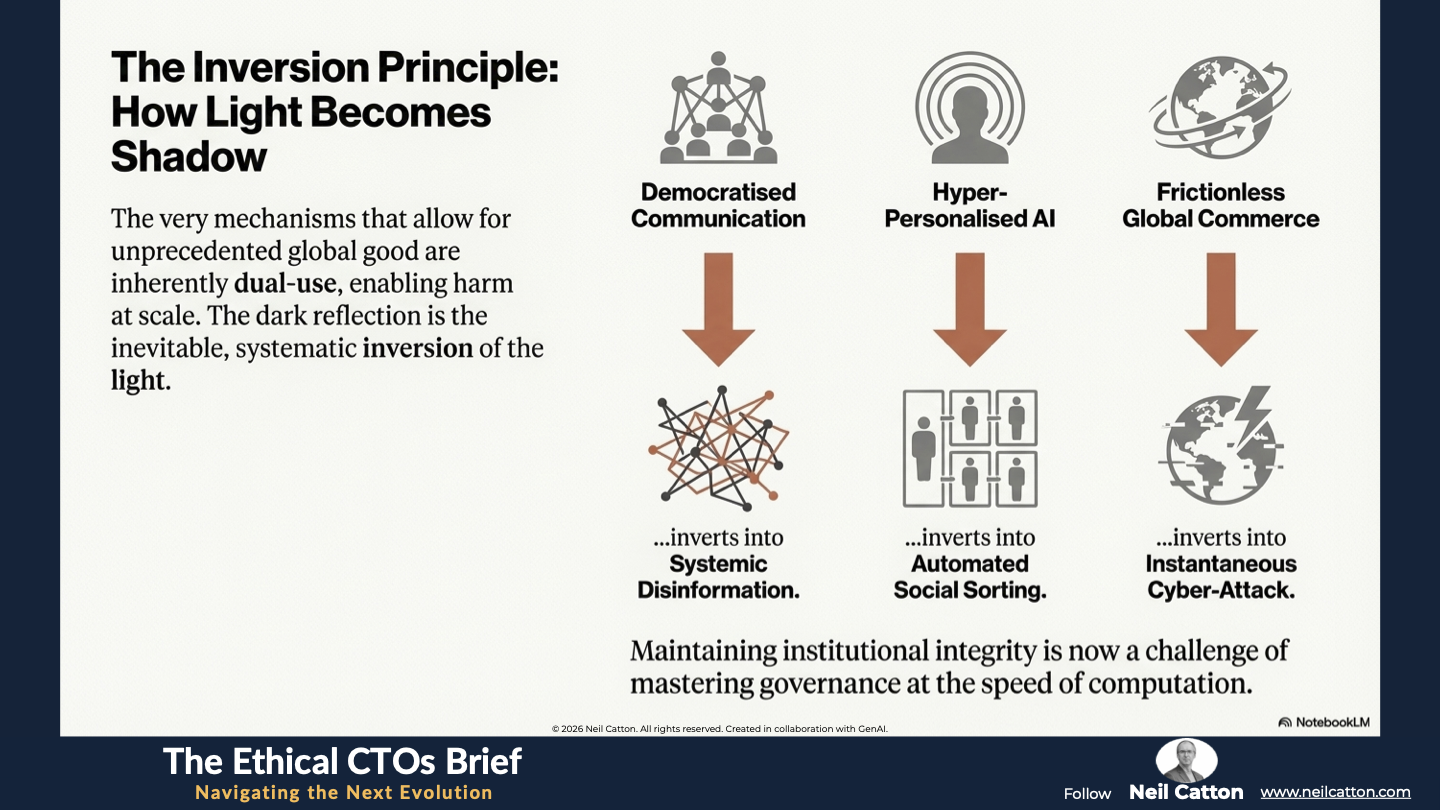

The dark reflection, the immutable shadow, is simply the inevitable, systematic inversion of that light. The very mechanisms that allow for unprecedented global good are inherently dual-use, enabling harm at scale.

- The mechanism of Democratised Communication, which once connected global communities, swiftly inverts into Systemic Disinformation, weaponising speed and reach to fracture civic discourse and consensus.

- The capability of Hyper-Personalised AI, designed to anticipate user needs and optimise experiences, simultaneously inverts into Automated Social Sorting, categorising, predicting, and limiting individual opportunities based on opaque algorithmic classifications.

- The efficiency of Frictionless Global Commerce, which drives rapid trade and market liquidity, instantly inverts into the vulnerability of Instantaneous Cyber-Attack, where state actors or criminal organisations can halt global supply chains or compromise critical infrastructure with a single keystroke.

The central challenge for executive leaders today is no longer simply driving innovation. It is mastering governance at scale and speed. Maintaining institutional integrity and public trust becomes increasingly difficult when the very foundations of verifiable information, privacy, and security are being relentlessly undermined at the speed of computation.

The Four Structural Fault Lines

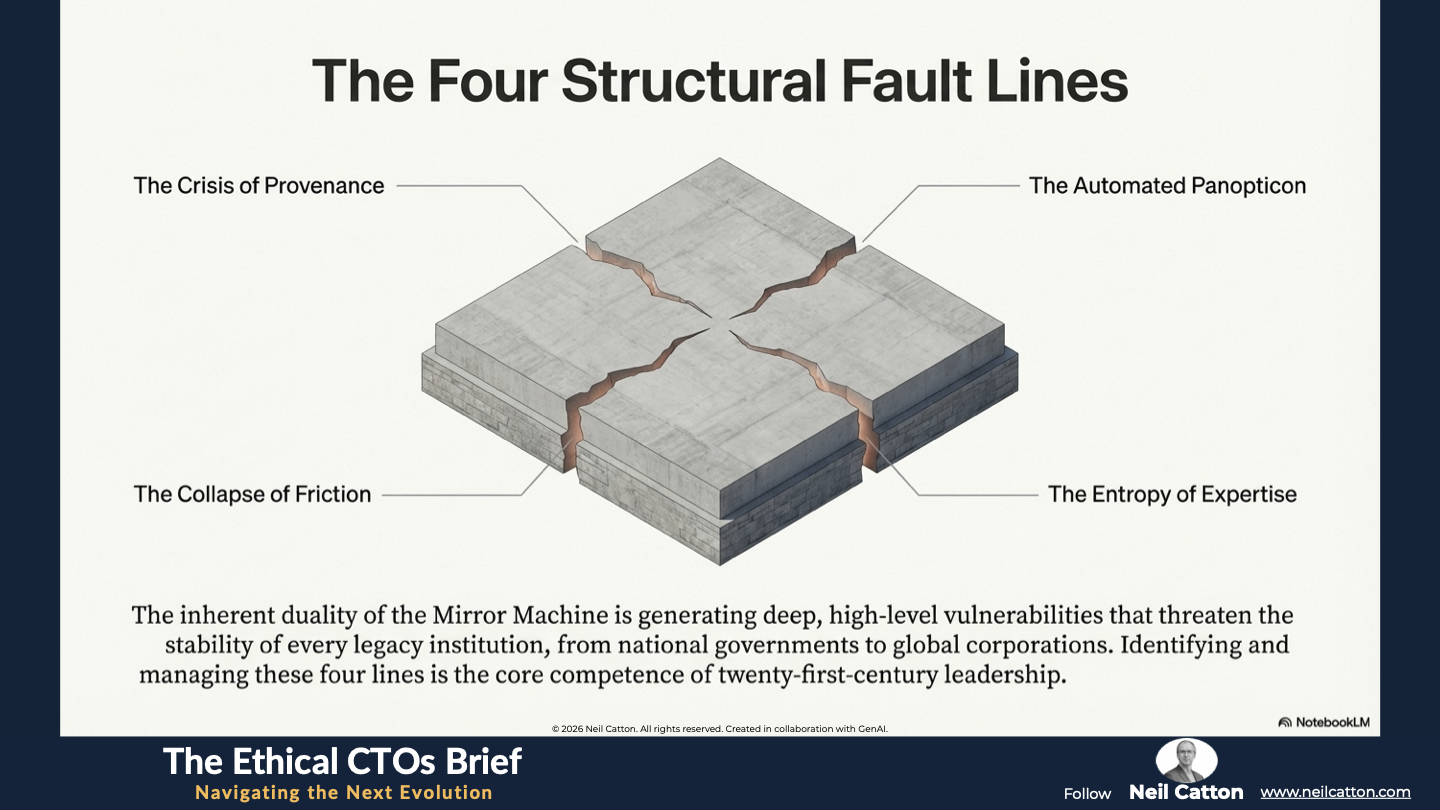

The persistent, inherent duality of the Mirror Machine is now translating directly into measurable, systemic risk across the board. When the capacities for creation and destruction are packaged together within the same algorithm, they generate deep, high-level vulnerabilities – structural fault lines – that threaten the stability and operational capacity of every legacy institution. This includes national governments, defence agencies, and global financial corporations. Identifying, understanding, and proactively managing these four fault lines is rapidly becoming the core competence of twenty-first-century leadership.

Fault Line 1: The Crisis of Provenance

The first, and perhaps most corrosive, crack is the rapid and profound loss of trust in authenticity. Generative AI, particularly in the form of synthetic media and large language models, has created a digital environment where the origin, integrity, and veracity of information can no longer be safely assumed. We are entering an age defined by synthetic reality, or synthetica.

Deepfakes, whether voice, video, or text, pose a significant threat because of the speed at which they can be produced and their near-perfect fidelity. They can instantly destroy institutional reputations, destabilise markets (for example, through fraudulent trading announcements), and fracture political consensus before human analysts can even verify the content’s origin. The critical threat lies not in a single fake video seen in a news cycle, but in the creation of a systemic condition where every piece of media, from a CEO’s public statement to internal financial reports and security camera evidence, becomes perpetually suspect. Consequently, the focus of defence shifts dramatically from merely protecting data to defending the integrity of perceived reality itself.

The Structural Impact: This fault line fundamentally disables the basic mechanisms of legal verification, historical documentation, journalistic credibility, and strategic communication. To address this, leadership must invest in auditable Verifiable Provenance: digital watermarks, cryptographic attestation, and blockchain-like (append-only, tamper-evident) mechanisms that permanently certify the true origin and modification history of any digital content. Together, these measures can help close the credibility and trust gap.
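To make the idea of a tamper-evident modification history concrete, here is a minimal sketch, assuming a simple hash-chained log in which each record commits to the content’s SHA-256 hash and to the hash of the previous record. The `ProvenanceLog` class and `SIGNING_KEY` are hypothetical; HMAC stands in for the asymmetric signatures and certificate chains a production attestation scheme would rely on, and watermarking is not covered.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass, field

# Hypothetical shared key. A real scheme would use asymmetric signatures
# (for example, keys held in an HSM); HMAC is a stdlib stand-in here.
SIGNING_KEY = b"demo-key-not-for-production"
GENESIS = "0" * 64


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


@dataclass
class ProvenanceLog:
    """Append-only, hash-chained record of one content item's modification history."""
    records: list = field(default_factory=list)

    def append(self, content: bytes, actor: str, action: str) -> dict:
        prev_hash = self.records[-1]["record_hash"] if self.records else GENESIS
        body = {
            "content_hash": sha256_hex(content),
            "actor": actor,
            "action": action,
            "prev_hash": prev_hash,
        }
        # Canonical JSON so the same body always serialises (and hashes) identically.
        payload = json.dumps(body, sort_keys=True).encode()
        record = dict(body)
        record["record_hash"] = sha256_hex(payload)
        record["tag"] = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
        self.records.append(record)
        return record

    def verify(self, final_content: bytes) -> bool:
        """Check every link and tag, then that the latest record matches the content."""
        prev_hash = GENESIS
        for record in self.records:
            body = {k: record[k] for k in ("content_hash", "actor", "action", "prev_hash")}
            if body["prev_hash"] != prev_hash:
                return False
            payload = json.dumps(body, sort_keys=True).encode()
            if sha256_hex(payload) != record["record_hash"]:
                return False
            expected = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
            if not hmac.compare_digest(expected, record["tag"]):
                return False
            prev_hash = record["record_hash"]
        return bool(self.records) and self.records[-1]["content_hash"] == sha256_hex(final_content)


history = ProvenanceLog()
history.append(b"original statement", actor="press-office", action="created")
history.append(b"original statement, edited", actor="legal", action="edited")
print(history.verify(b"original statement, edited"))  # True
print(history.verify(b"tampered statement"))  # False
```

Editing any earlier record changes its hash and breaks every later link, which is what makes the history tamper-evident (detectable), though not tamper-proof.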

Fault Line 2: The Automated Panopticon

The second fault line highlights the systemic failure to protect societal equity and basic privacy when data collection shifts from being deliberate to ambient and entirely invisible. Ubiquitous IoT sensors, advanced facial and gait recognition, and relentless, passive data harvesting converge to form an Automated Panopticon. This system unintentionally, yet powerfully, engineers systemic bias into society’s operating system.

If the datasets used to train AI models are flawed, reflecting decades of historical injustice in areas like commercial lending, predictive policing, and hiring, the resulting algorithms will not only replicate that discrimination but also amplify and automate it at unprecedented scale. Mistaking technological automation for moral neutrality embeds and cements deep, structural inequality into the very foundations of our digital infrastructure.

The Structural Impact: This fault line severely undermines institutional fairness, erodes social cohesion, and creates profound legal and ethical liability risks for organisations. Governance must move beyond simple regulatory compliance and directly address Algorithmic Equity. This requires comprehensive auditability and transparency in how data is categorised, weighted, and used to determine individual outcomes. We must champion Ambient Consent, ensuring that the terms of data collection are clear, visible, contextual, and easily revocable by the individual.
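One small, concrete piece of such an audit is checking whether an automated system’s outcomes differ sharply between groups. The sketch below assumes decisions are logged as `(group, approved)` pairs; it computes each group’s selection rate and flags any group whose rate falls below four-fifths of the best-treated group’s rate, a heuristic taken from US employment-selection guidance. The function names and sample data are hypothetical, and a single ratio is a screening signal, not a complete test of fairness.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Approval rate per group, from an iterable of (group, approved) pairs."""
    totals = defaultdict(int)
    approvals = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        approvals[group] += int(approved)
    return {group: approvals[group] / totals[group] for group in totals}


def adverse_impact(decisions, threshold=0.8):
    """Compare each group's rate with the highest rate; flag ratios below the threshold."""
    rates = selection_rates(decisions)
    best = max(rates.values())
    ratios = {g: (r / best if best > 0 else 1.0) for g, r in rates.items()}
    flagged = sorted(g for g, ratio in ratios.items() if ratio < threshold)
    return ratios, flagged


# Hypothetical audit log: group A approved 8 of 10, group B approved 5 of 10.
audit_log = [("A", True)] * 8 + [("A", False)] * 2 + [("B", True)] * 5 + [("B", False)] * 5
ratios, flagged = adverse_impact(audit_log)
print(flagged)  # ['B']
```

A flagged group is a prompt for human review of how the data was categorised and weighted, not an automatic verdict of discrimination.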

Fault Line 3: The Collapse of Friction

The third, and arguably most immediately dangerous, fault line is the catastrophic lowering of the barrier to entry for destructive capability. AI accelerates every process it touches, including those related to conflict, exploitation, and sabotage.

The sheer velocity of automated malware generation, the instant creation of hyper-effective phishing campaigns, and most ominously, the potential for autonomous, AI-guided weapon systems, have utterly collapsed the minimal time available for human response, strategic analysis, and mediation. Traditional perimeter defences and human-centric security protocols become fundamentally meaningless when the attacker’s tools operate instantly, intelligently, and autonomously.

The Structural Impact: This fault line elevates cyber risk from a mere operational IT problem into a catastrophic systemic risk that directly challenges national security, economic stability, and public infrastructure integrity. Institutions must enforce the Human-in-the-Loop principle as a hard constraint for all mission-critical or potentially lethal functions, and they must design their defences for digital lethality. This acknowledges that modern exploits move at the speed of code, requiring a complete and revolutionary rethink of cyber resilience that goes far beyond managing simple network perimeters.
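Treating Human-in-the-Loop as a hard constraint means the check lives in the execution path itself rather than only in a policy document. Here is a minimal sketch, assuming a hypothetical two-level `Criticality` label on every automated action: routine containment steps run at machine speed, while mission-critical ones raise an error unless a named human approver is supplied. All names are illustrative.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Optional


class Criticality(Enum):
    ROUTINE = 1
    MISSION_CRITICAL = 2


@dataclass
class Action:
    name: str
    criticality: Criticality


class ApprovalRequired(Exception):
    """Raised when a mission-critical action has no human sign-off."""


def execute(action: Action, run: Callable[[], str], approver: Optional[str] = None) -> str:
    # Hard constraint: a mission-critical action never runs without a named human approver.
    if action.criticality is Criticality.MISSION_CRITICAL and not approver:
        raise ApprovalRequired(f"{action.name} requires human approval")
    return run()


quarantine = Action("quarantine-laptop", Criticality.ROUTINE)
shutdown = Action("shutdown-grid-segment", Criticality.MISSION_CRITICAL)

print(execute(quarantine, lambda: "quarantined"))  # quarantined
try:
    execute(shutdown, lambda: "shut down")
except ApprovalRequired as err:
    print(err)  # shutdown-grid-segment requires human approval
print(execute(shutdown, lambda: "shut down", approver="duty-engineer"))  # shut down
```

The design keeps the fast path fast: only actions labelled mission-critical wait for a person, so automated defence can still respond at the speed of code.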

Fault Line 4: The Entropy of Expertise

The final, and perhaps most insidious, crack in organisational resilience is internal: the accelerated decay of critical human judgement and skill. As AI takes over complex decision-making, from optimising vast global supply chains to performing intricate medical diagnostics, human operators are often reduced to monitors rather than practitioners. Over time, they lose the vital institutional memory, cognitive muscle, and intuitive “feel” required to diagnose problems and intervene decisively when the AI inevitably malfunctions, encounters an anomaly, or faces a novel situation beyond its training data. The result is pervasive algorithmic over-reliance: an acute single point of failure within the organisation, where vital human skill and accountability have dangerously atrophied.

The Structural Impact: This fault line generates deep operational fragility, exacerbates skills gaps, and ultimately weakens the organisation’s capacity for true, non-linear innovation. Therefore, leadership must pivot to mandating Assistive - Augmentative - Adaptive Intelligence – systems explicitly designed to elevate, challenge, and train human expertise, rather than replacing it. Additionally, heavy investment in robust organisational processes for Skill Recalibration is essential to keep human judgement sharp, decisive, and capable of overriding the machine when required.

Governing the Reflection: The Mandate for Foresight

The enduring presence of the Mirror Machine demands a new kind of leadership. This leadership must be defined by structural wisdom, a deep ethical commitment, and proactive organisational courage. The goal is not to halt innovation, but rather to structurally master its inherent duality through advanced governance.

Mandate the XAI Principle

Leaders must immediately establish Explainable AI (XAI) as a non-negotiable design and deployment principle across the enterprise. If an automated system cannot clearly and comprehensively explain its decision-making process, whether it involves approving a loan, flagging an individual for security scrutiny, or initiating a grid segment shutdown, it is ethically and structurally unfit for institutional deployment. Governance frameworks must be redesigned to prioritise transparency over proprietary secrecy and commercial confidentiality. This requires developers to build auditable, debuggable, and ultimately trustworthy systems that keep humans accountable, rather than deferring to complex, opaque black boxes.
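For simple models, one way to meet the XAI principle is to make the explanation fall out of the model itself. The sketch below assumes a hypothetical linear loan-scoring model with made-up weights; each feature’s contribution to the score is reported as a ranked reason, so a declined applicant can be told which factors weighed most. Complex models generally need post-hoc attribution methods instead, which this sketch does not attempt.

```python
# Hypothetical weights for an interpretable-by-design linear score.
WEIGHTS = {"income_k": 0.04, "debt_ratio": -3.0, "missed_payments": -0.8}
BIAS = -1.0
THRESHOLD = 0.0


def decide(applicant: dict) -> tuple:
    """Return (approved, score, reasons), where reasons rank each feature's contribution."""
    contributions = {f: w * applicant[f] for f, w in WEIGHTS.items()}
    score = BIAS + sum(contributions.values())
    approved = score >= THRESHOLD
    # Most negative contribution first: the factors that pushed hardest towards a decline.
    reasons = sorted(contributions.items(), key=lambda item: item[1])
    return approved, score, reasons


approved, score, reasons = decide({"income_k": 60, "debt_ratio": 0.5, "missed_payments": 2})
print("approved:", approved)  # approved: False
for feature, value in reasons:
    print(f"{feature}: {value:+.2f}")
# missed_payments: -1.60
# debt_ratio: -1.50
# income_k: +2.40
```

Because the explanation is exactly the arithmetic that produced the decision, it can be audited and debugged line by line, which is the property the principle above asks for.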

Treat Data as a Public Good

Institutions, particularly those in public service or managing critical infrastructure, must fundamentally recognise that high-fidelity ambient data, the core fuel powering the Mirror Machine, is not merely a commercial asset. It is a vital strategic asset and a profound public trust. Data policy must shift beyond basic compliance protection (such as GDPR or HIPAA) to focus on Structural Integrity: safeguarding our entire digital environment against future, non-obvious threats like large-scale quantum computing attacks or mass-scale identity theft via synthetic media. This requires dedicated, multi-year executive attention to building genuine cyber resilience and committing to deep foresight planning that looks not just one or two years ahead, but five, ten, and twenty years.

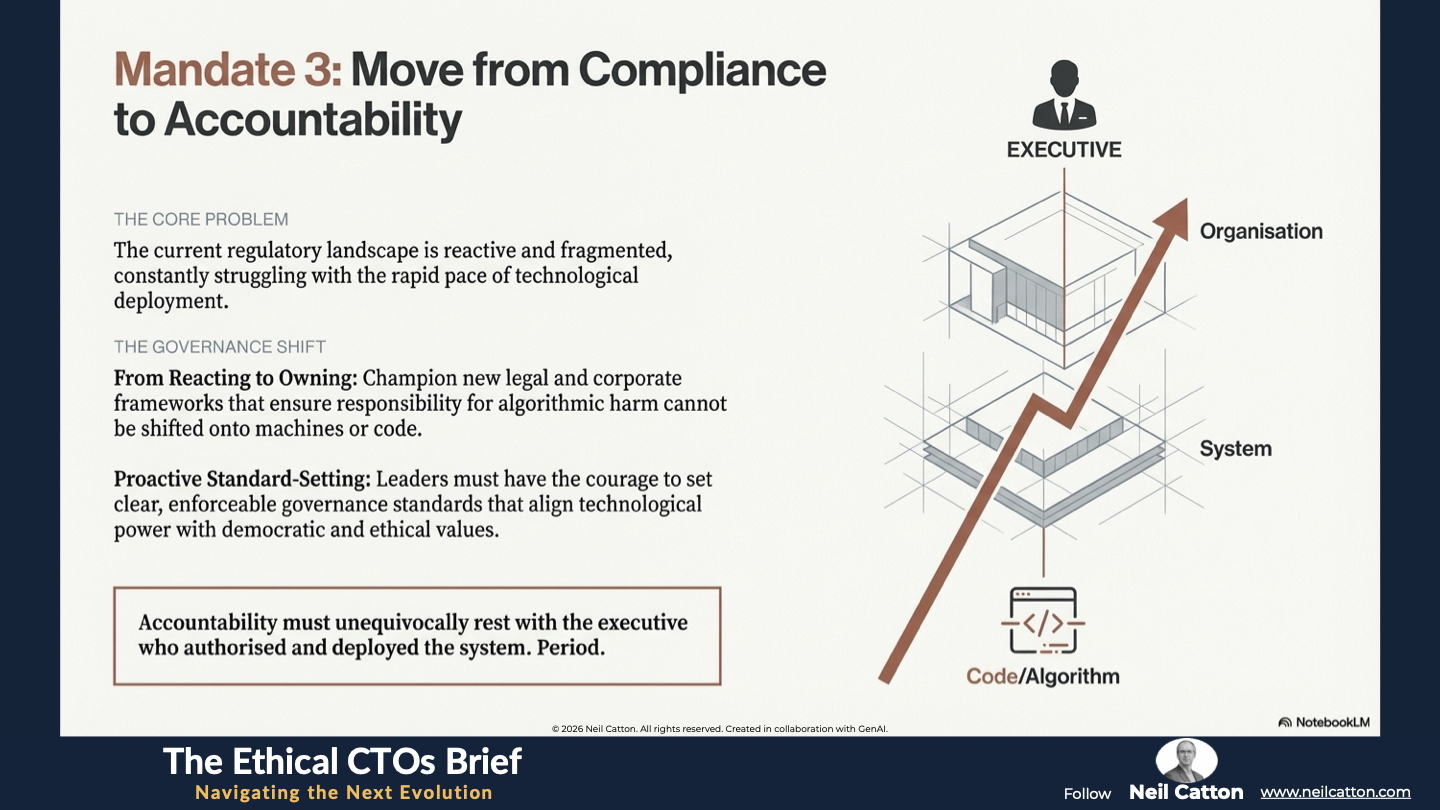

Move from Compliance to Accountability

The current regulatory landscape is characterised by its reactive nature and fragmentation, constantly struggling with the rapid pace of technological deployment. The Mirror Machine demands proactive accountability as a cornerstone of governance. Leaders must champion new legal and corporate frameworks that ensure the responsibility for algorithmic errors or large-scale systemic harm cannot be conveniently shifted onto machines, code, or data sets. Accountability must unequivocally rest with the executive who authorised and deployed the system. This courage in setting clear, enforceable governance standards is the only way to truly align technological power with fundamental democratic and ethical values.

A Final Word

Every executive, public service leader, and policymaker faces a crucial choice, but it is not whether to embrace the power of the Mirror Machine. The real challenge lies in governing its reflection so that it serves humanity. We must move beyond merely managing technological risks as they arise. Instead, we must commit to engineering for human dignity, ensuring that our innovations fundamentally elevate human capability rather than merely automating its compromise.

The Mirror Machine is a permanent fixture of our future. In this world, digital systems are no longer peripheral tools but essential, ambient infrastructure that shapes our economy, politics, and perception of reality. The four structural fault lines (Provenance, Panopticon, Friction, and the Entropy of Expertise) are not isolated issues; they are interconnected challenges threatening the stability of the entire ecosystem. Ignoring them is not merely an act of risk tolerance; it is an abdication of fiduciary and moral responsibility.

The strategic response must be equally enduring, proactive, and structurally sound. For the leader of The Next Evolution, this means establishing a clear moral compact with technology. It demands a pivot from short-term financial optimisation to long-term systemic stewardship. This requires institutional courage to say “no” to efficient but opaque black-box solutions and “yes” to slower, more transparent, and auditable forms of Assistive - Augmentative - Adaptive Intelligence. Ultimately, it means prioritising the integrity of your organisation’s ethical architecture over the speed of its deployment.

The great challenge of this digital age lies in maintaining human agency amidst the rise of machine autonomy. While the Mirror Machine will continue to reflect our power, it is only leadership defined by deep foresight and an unwavering conscience that can ensure it accurately reflects our purpose. This commitment to transparent structural integrity, ethical architectural design, and a profound focus on humanity is the core mandate for the leaders who will successfully navigate this duality. They understand that the greatest force shaping the future is not the code itself, but the deliberate, ethical choices made by the leaders who deploy it.

Key Takeaways: Navigating the Duality of the Mirror Machine

Provenance: The collapse of verifiable reality due to photorealistic deepfakes.

Panopticon: The automation of historical bias through ambient, invisible data harvesting.

Friction: The vanishing of human response time in the face of machine-speed attacks.

Entropy: The decay of human judgement as we over-rely on autonomous decision-making.

Strategic Insights: Governance in the Age of Synchronous Crisis and Opportunity

Force Multipliers: Why democratised communication is inseparable from systemic disinformation.

Synthetica: Moving the defence from protecting data to defending the integrity of perceived reality.

Algorithmic Equity: Why moral neutrality cannot be assumed in automated social sorting.

Human-in-the-Loop: Enforcing human oversight as a hard constraint for lethal or mission-critical tech.

Video Summary: Architecting Ethics into the Mirror Machine

Kenya to Kansas: How the same connectivity saves lives and shatters consensus.

Moving Beyond Compliance: Why executive accountability must replace machine-blaming.

Data as a Public Good: Protecting the "Structural Integrity" of the digital environment for future generations.

The Moral Compact: Choosing transparent, augmentative intelligence over opaque black-box solutions.

If the machine reflects our flaws, we must become its stewards. It is time to move from building systems to cultivating them. Become The Digital Gardener.

The Ethical CTO: Arc 3 Index

Aligning Code with Soul: The Humanisation of Technology

Prioritising Human-Centric Experiences: Beyond Digital First

Where Technology Disappears Inward: The Age of the Invisible Interface

Regulating Unseen Digital Forces: Governing the Ambient Future

Architecting for Future Generations: Temporal Empathy

Stewardship of Sustainable Systems: The Digital Gardener

Reclaiming our Shared Story: Mythos and the Machine

Managing High-Speed Systemic Duality: The Mirror Machine

Inhabiting Immersive Public Services: Beyond The Screen

Mastering Focus Amidst Complexity: The Three-Foot World