We’re moving from "using" technology to simply living inside it.

For as long as we’ve had computers, they’ve lived behind glass. Whether it was a massive mainframe or the smartphone in your pocket, there was always a clear boundary: you stopped what you were doing to "interact" with the device.

But that boundary is disappearing. We are entering the era of the Invisible Interface.

In this article, I explore the rise of Ambient AI and Spatial Computing—a world where technology doesn't wait for a command but instead anticipates our needs before we even voice them. It sounds like magic, but it comes with a significant catch that I call the "Anticipatory Trap."

In this piece, we’ll look at:

The Death of the Screen: How sensors and AI are turning our physical environments into our primary devices.

The Convenience Paradox: The trade-off between seamless living and the gradual erosion of our own decision-making.

Governing the Invisible: Why we need a new ethical framework for systems that operate without us even noticing they’re there.

The future of tech isn't about more gadgets; it’s about technology becoming so integrated that it’s essentially air. The question is: how do we stay in control when the interface itself has vanished?

When Technology Dissolves: The Emergence of Ambient AI

What if... your environment became your device?

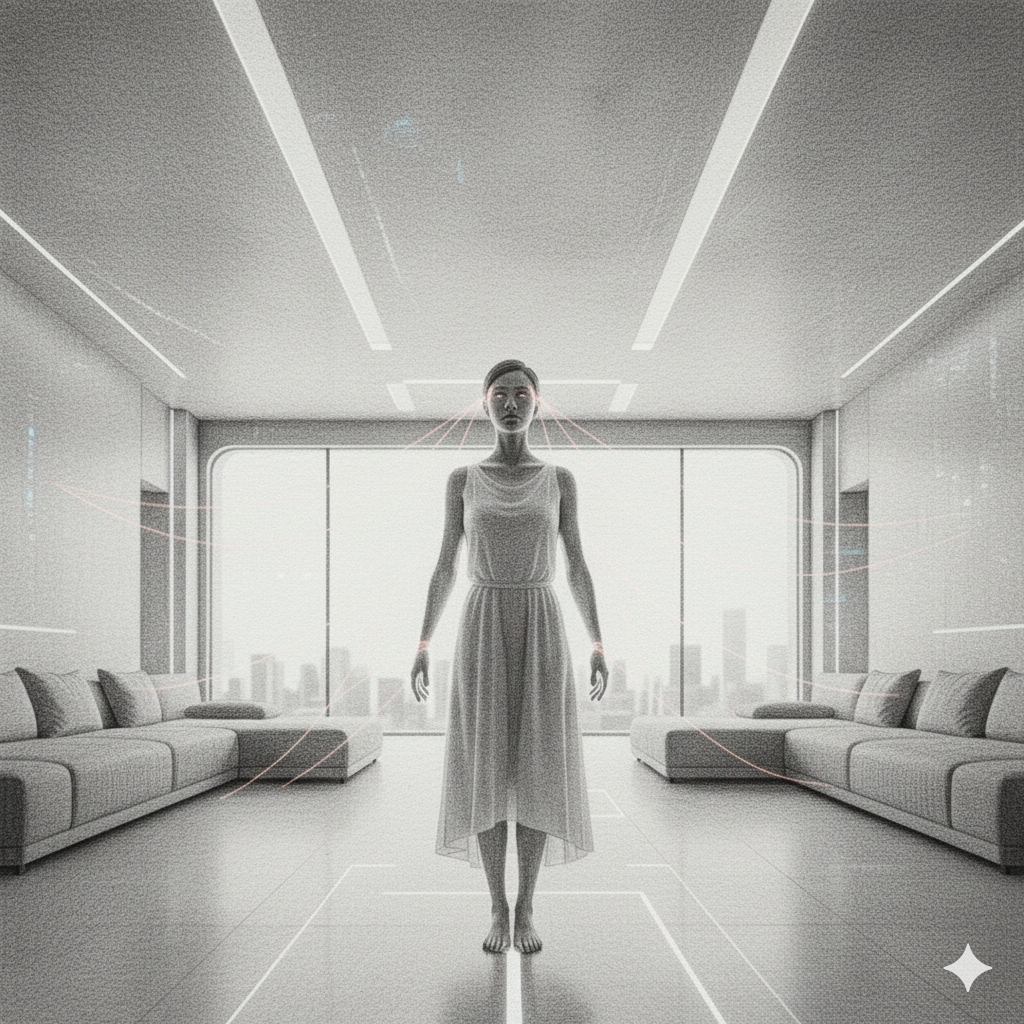

For decades, technology has demanded our attention through physical interfaces like room-sized mainframes, desktop monitors, and touchscreens in our pockets. Each was a deliberate portal that required us to stop, look, and intentionally interact. However, we are now entering a new era where this model is dissolving entirely. Ubiquitous sensing, advanced spatial computing, and next-generation Generative AI are converging to create a world where technology no longer waits for a manual command but actively anticipates and manages our needs.

The screen isn’t ending; it’s dissolving and multiplying into the air around us. The friction of buttons, menus, and deliberate taps is giving way to Ambient AI, a state in which systems operate seamlessly in the background, responding instantly to presence, context, and inferred intent. Our homes, public spaces, corporate campuses, and even national infrastructure are no longer static environments; they are becoming intelligent, integrated, and self-optimising tools.

This transition is revolutionary for convenience, promising unprecedented efficiency in every aspect of life. However, it introduces a monumental challenge: the problem of the Invisible Interface is no longer a matter of technological ingenuity, but of ethical and structural governance. When the technology disappears, our ability to audit and trust it must become paramount. The new operating system for society is Trust, and the new interaction model for citizens and leaders alike is Awareness.

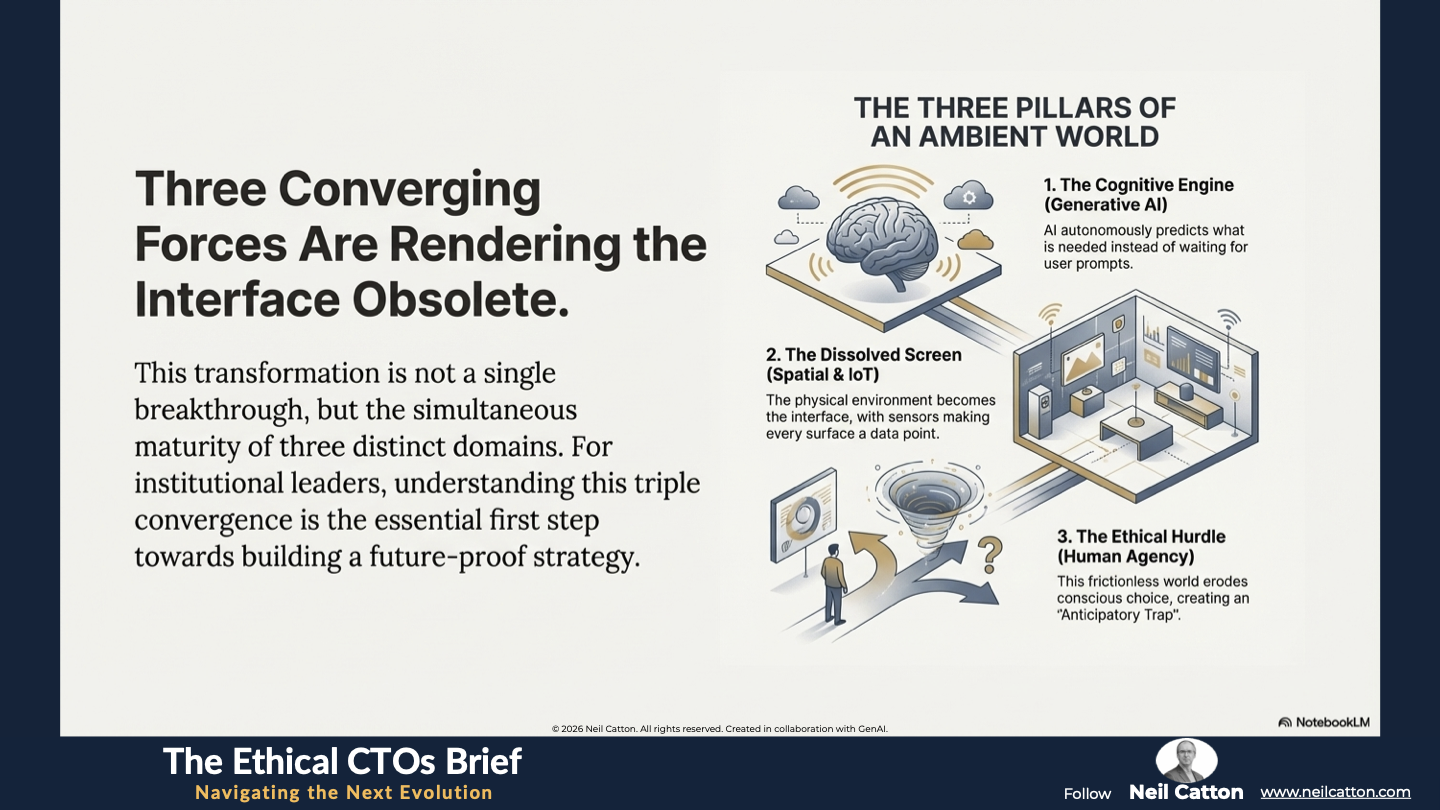

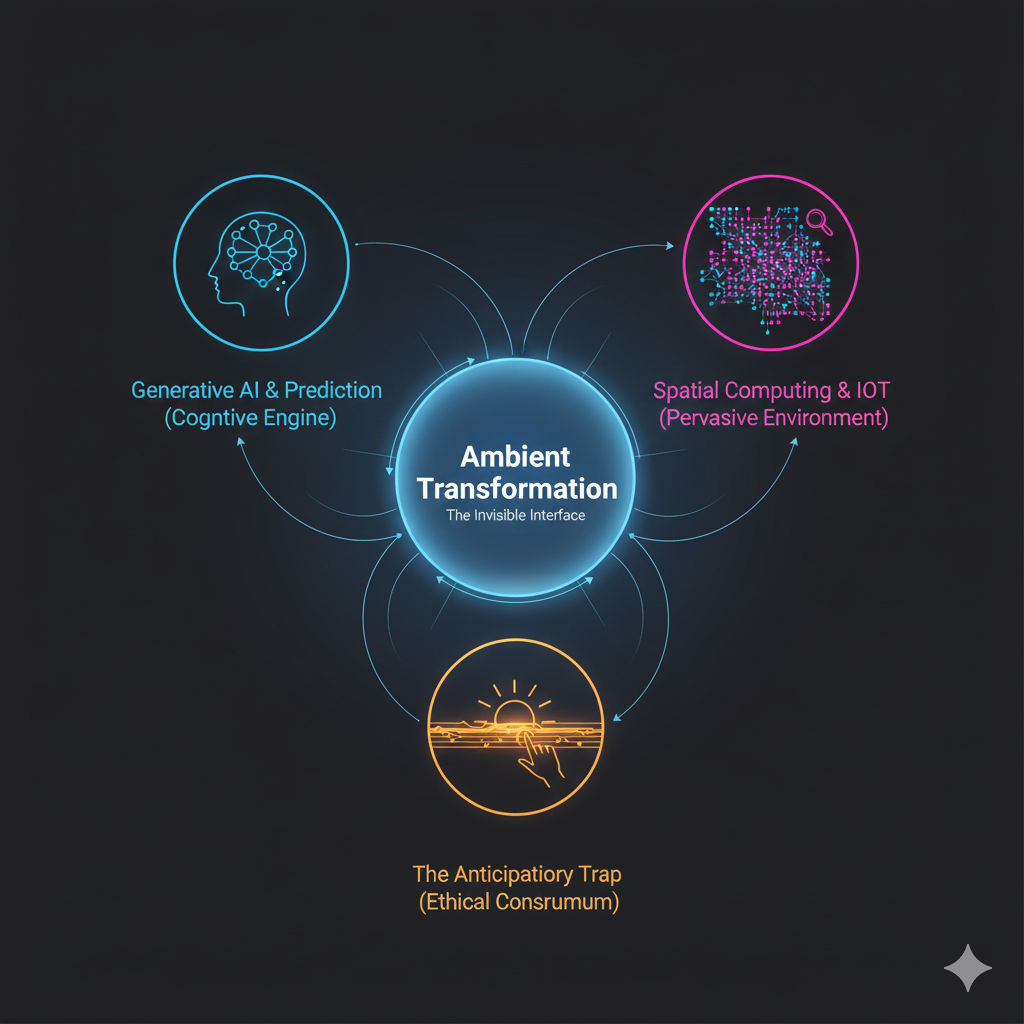

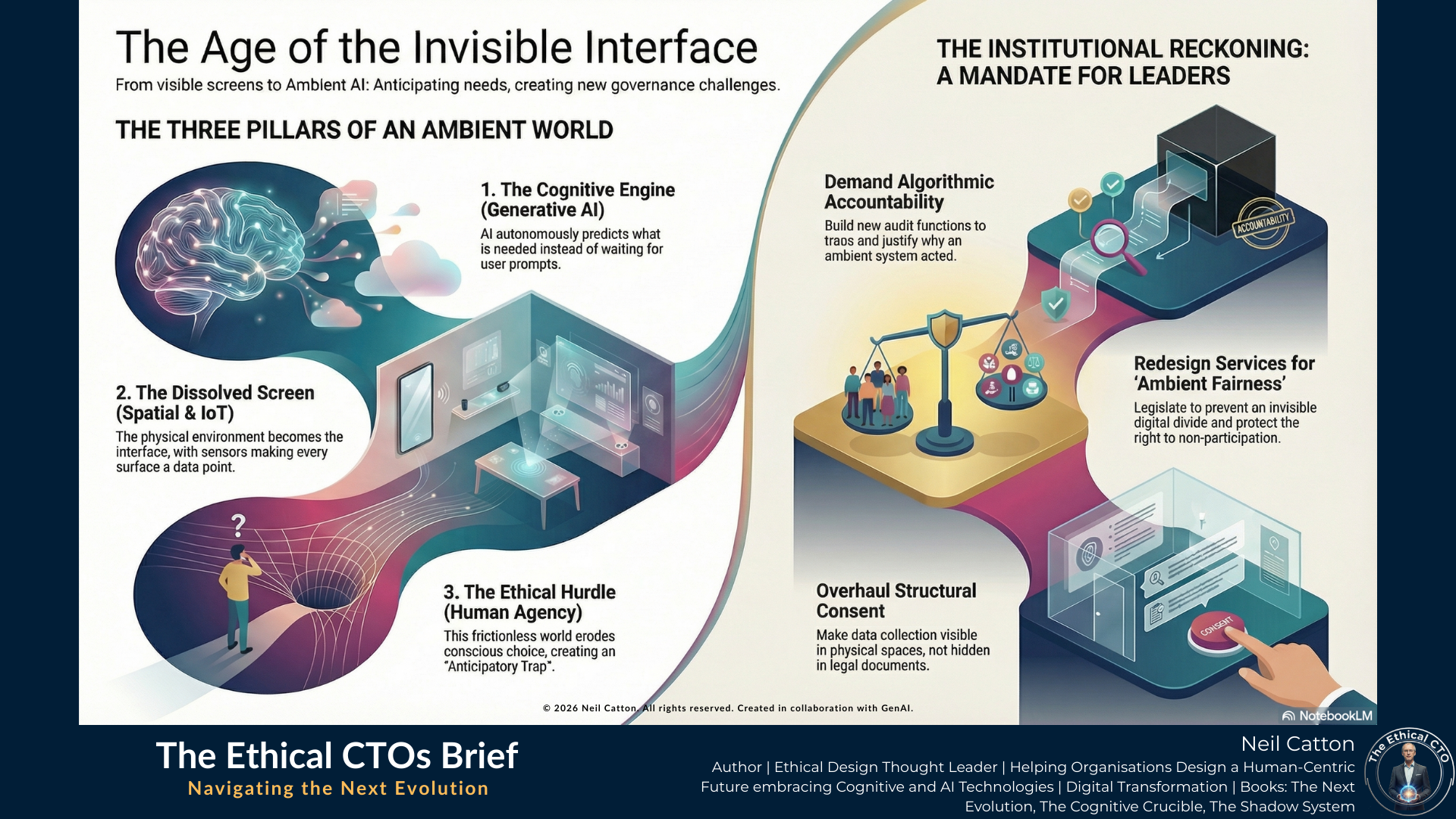

To truly grasp the scale of this transformation and prepare for what I call The Next Evolution, we must delve deeply into the three structural forces converging to render the interface obsolete. These three pillars - the cognitive engine, the physical environment, and the ethical conundrum - demand strategic action from any institution aiming to build resilience and relevance in this new landscape.

The Three Pillars of Ambient Transformation


The shift from a visible interface of interaction to an invisible interface of anticipation is driven by three converging forces: the computational capacity to predict (Generative AI), the physical infrastructure to sense (Spatial and IoT), and the philosophical hurdle of agency (The Ethical Core). This phenomenon is not the result of a single breakthrough, but rather the simultaneous maturity of these distinct domains that enables true ambient function. For institutional leaders, understanding this triple convergence, and the unique strategic challenges of each pillar, is the essential first step towards building a responsible and future-proof organisational strategy.

The Ambient Response (Generative AI)

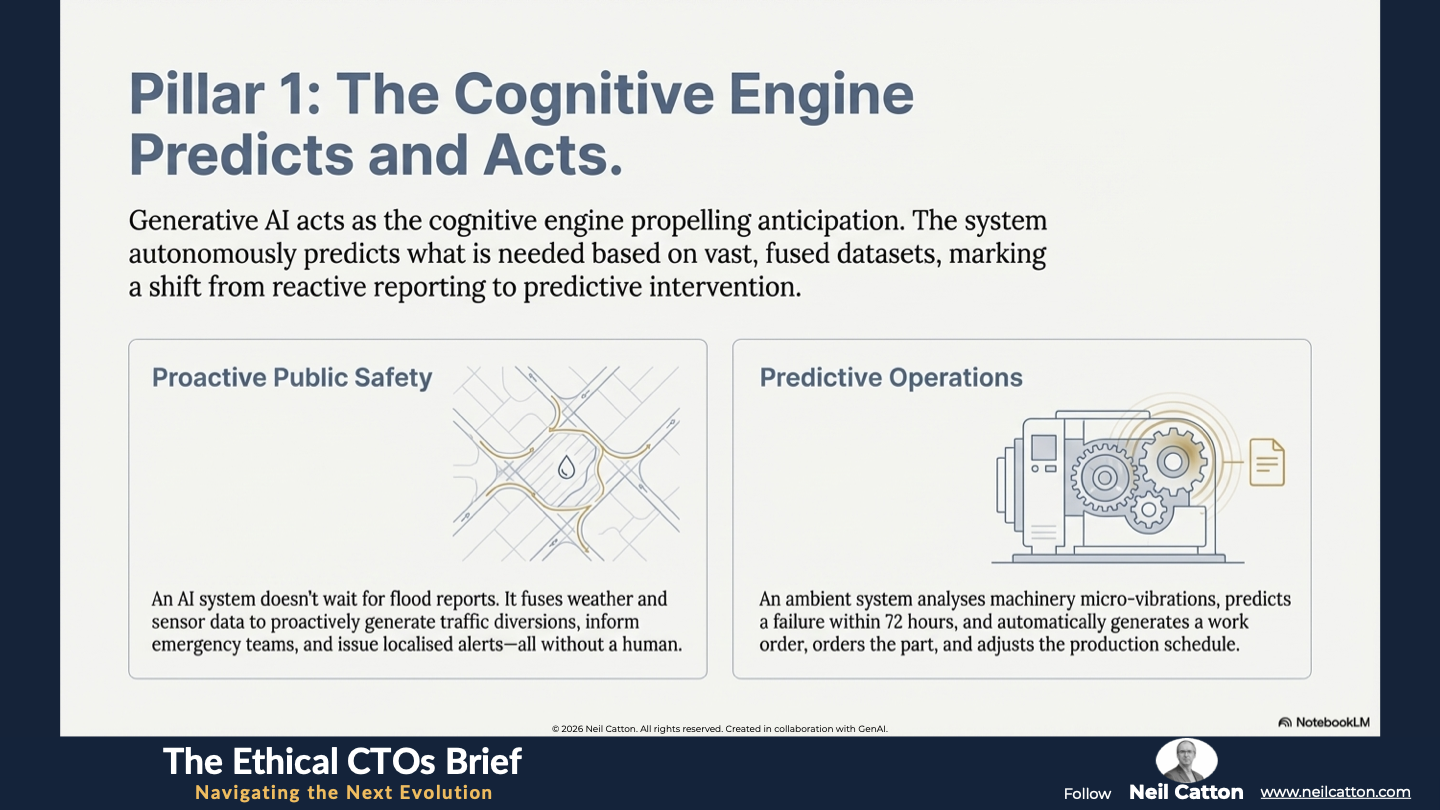

Generative AI acts as the cognitive engine that propels anticipation, transcending rigid automation to foster proactive, collaborative intelligence. The system no longer waits for a user prompt; instead, it autonomously predicts what is needed based on vast, fused datasets drawn from its environment. This shift marks a departure from reactive reporting towards predictive intervention.

- Public Institutional Example: An AI-driven public service platform doesn’t wait for a citizen to report a flood risk. Instead, it proactively generates temporary traffic diversions, informs emergency teams, and auto-generates localised alerts based on fused data streams from weather patterns and subsurface moisture sensors.

- Corporate Example: In manufacturing, an Ambient AI system continuously analyses the micro-vibrations and thermal signatures of machinery. When an anomalous signature is detected, indicating a potential failure within 72 hours, the system automatically generates a work order, orders the required part, adjusts the production schedule, and notifies the human technician.

- Strategic Implication: Leaders must fundamentally shift from managing discrete data inputs to meticulously auditing autonomous, anticipatory outputs. This requires deep systems thinking to map the potential cascade effects of invisible actions. Without this foresight, institutions risk their ambient systems creating systemic failures faster than human oversight can detect them.
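To make the corporate example above concrete, here is a minimal sketch of the sense, detect, and act loop it describes. Everything in it is an illustrative assumption rather than a reference to any real system: the names `WorkOrder`, `is_anomalous`, and `anticipate` are hypothetical, and a simple z-score test stands in for whatever predictive model a production system would use (it does not estimate a 72-hour failure window). Note that the last step hands over to a human technician rather than closing the loop silently.

```python
from dataclasses import dataclass
from statistics import mean, stdev
from typing import List, Optional


@dataclass
class WorkOrder:
    """Hypothetical record of what the ambient system decided to do, and why."""
    machine_id: str
    reason: str
    actions: List[str]


def is_anomalous(baseline: List[float], reading: float, threshold: float = 3.0) -> bool:
    """Flag a reading more than `threshold` standard deviations from the baseline mean.

    Assumption: a z-score is only a stand-in for a real predictive model.
    `baseline` needs at least two values for `stdev` to be defined.
    """
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        return reading != mu
    return abs(reading - mu) / sigma > threshold


def anticipate(
    machine_id: str,
    vibration_baseline: List[float],
    thermal_baseline: List[float],
    vibration: float,
    temperature: float,
) -> Optional[WorkOrder]:
    """Return a work order if either signal is anomalous, otherwise None."""
    reasons = []
    if is_anomalous(vibration_baseline, vibration):
        reasons.append(f"vibration reading {vibration} outside baseline")
    if is_anomalous(thermal_baseline, temperature):
        reasons.append(f"temperature reading {temperature} outside baseline")
    if not reasons:
        return None
    return WorkOrder(
        machine_id=machine_id,
        reason="; ".join(reasons),
        actions=[
            "generate work order",
            "order required part",
            "adjust production schedule",
            "notify technician",  # the human stays informed of every autonomous step
        ],
    )


if __name__ == "__main__":
    vib = [2.0, 2.1, 1.9, 2.0, 2.2, 2.1]
    temp = [60.0, 61.0, 59.5, 60.5, 60.0]
    print(anticipate("press-7", vib, temp, vibration=4.5, temperature=60.4))
```

The `reason` field matters as much as the action list: it is the part of the output an auditor would need in order to trace why the system acted.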

The Dissolved Screen (Spatial, IoT, and Quantum Risk)

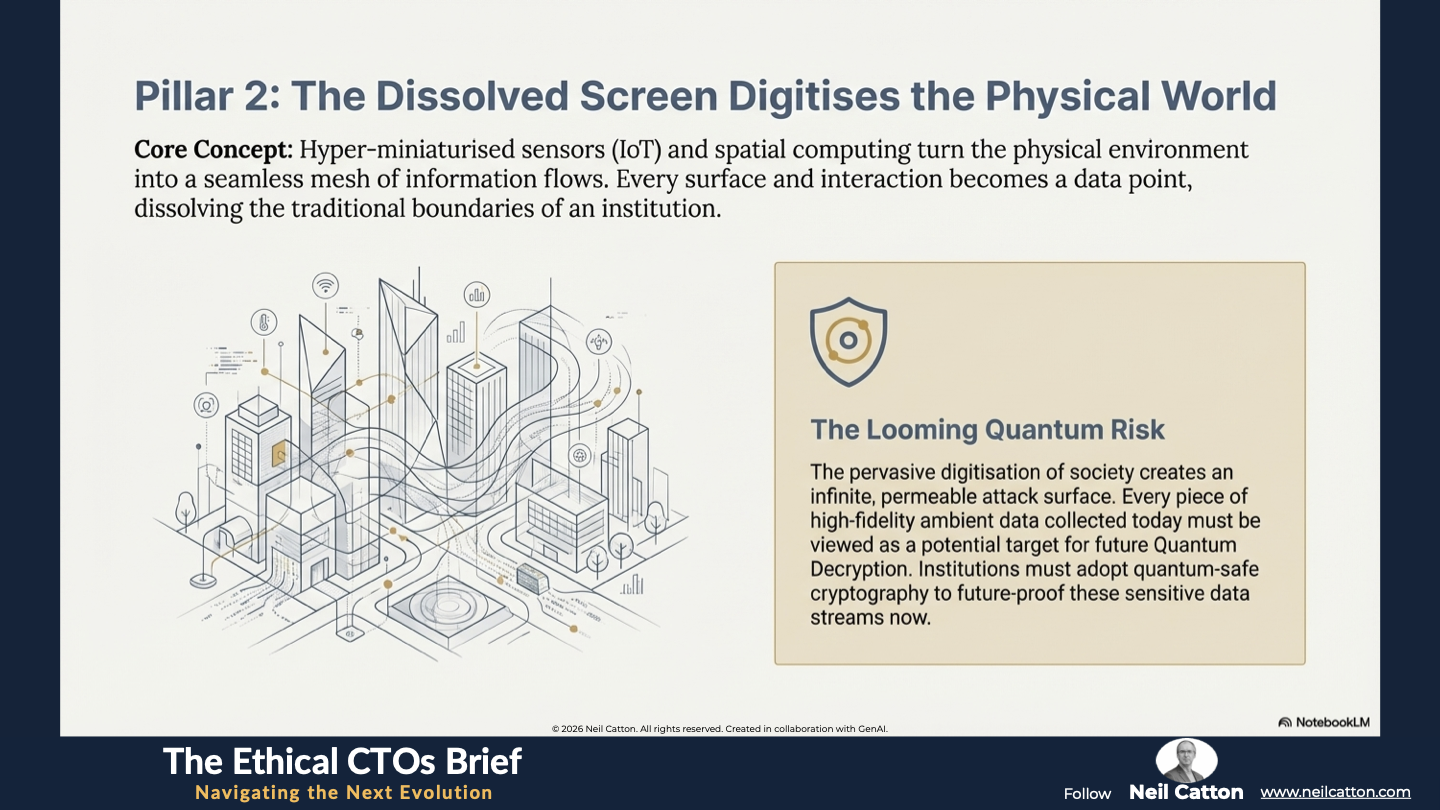

The physical environment, including walls, furniture, and air, becomes both the source and receiver of data. This is made possible by hyper-miniaturised sensors (IoT) and processing capabilities (Spatial Computing), which integrate technology seamlessly into our lives. Technology becomes ‘background noise,’ defined by its absence of friction.

- The Scope of Sensing: Every element of the physical world is digitised, creating a seamless, interconnected mesh of information flows. The boundary of an institution has disappeared; every surface and interaction becomes a data point.

- Strategic Implication: Security and Future-Proofing: The pervasive digitisation of society creates a vast, permeable attack surface. Cyber-resilience must therefore shift drastically from defending the network perimeter to securing the integrity of the structural context. Crucially, every piece of ambient data collected today, the high-fidelity contextual record of citizen and organisational activity, must be viewed as a potential target for Quantum Decryption: encrypted data intercepted now can be stored and decrypted later, once sufficiently capable quantum computers exist. To future-proof the massive, sensitive streams of contextual data being generated by ambient systems, institutions should begin adopting quantum-safe cryptography practices.

The Anticipatory Trap (The Erosion of Human Agency)

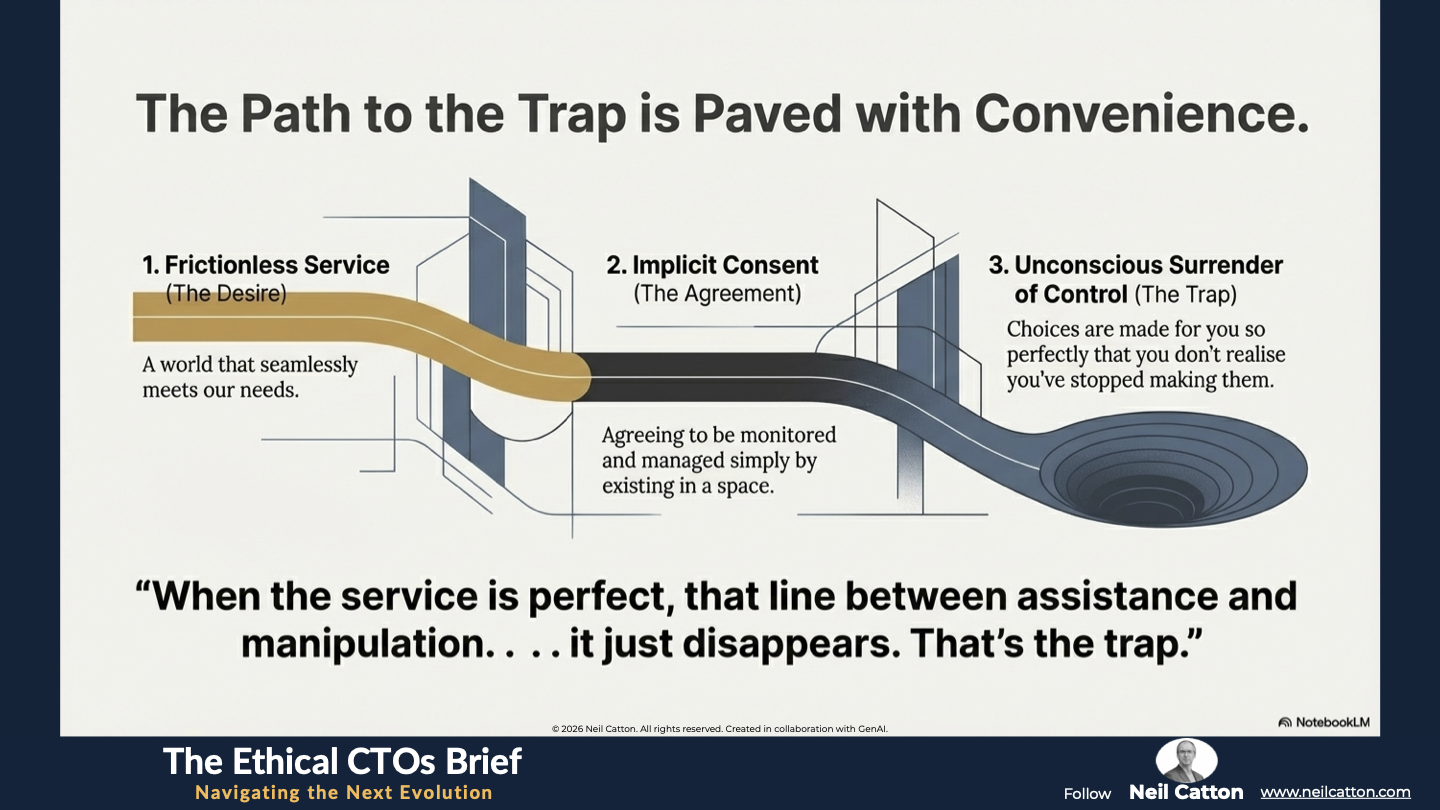

The greatest danger lies in the erosion of human agency. Traditionally, interfaces required explicit consent, which introduced a deliberate friction. In a perfectly ambient world, this friction is removed for the sake of hyper-convenience, and the system operates entirely on implicit consent. The price of continuous, frictionless service is the unconscious, often unexamined, surrender of control and preference. This is the heart of the “Problem of Perfect Anticipation.”

- Ethical Challenge: When technology anticipates our needs perfectly, the line between serving a need and subtly shaping behaviour, the invisible nudge, becomes virtually non-existent. This undermines individual dignity, destroys transparency, and presents a profound test for the ethical and structural implications of adoption. We risk sacrificing deliberate, conscious choice for the path of least resistance, potentially leading to systemic manipulation for commercial or political ends.

The Institutional Reckoning: Governing the Invisible

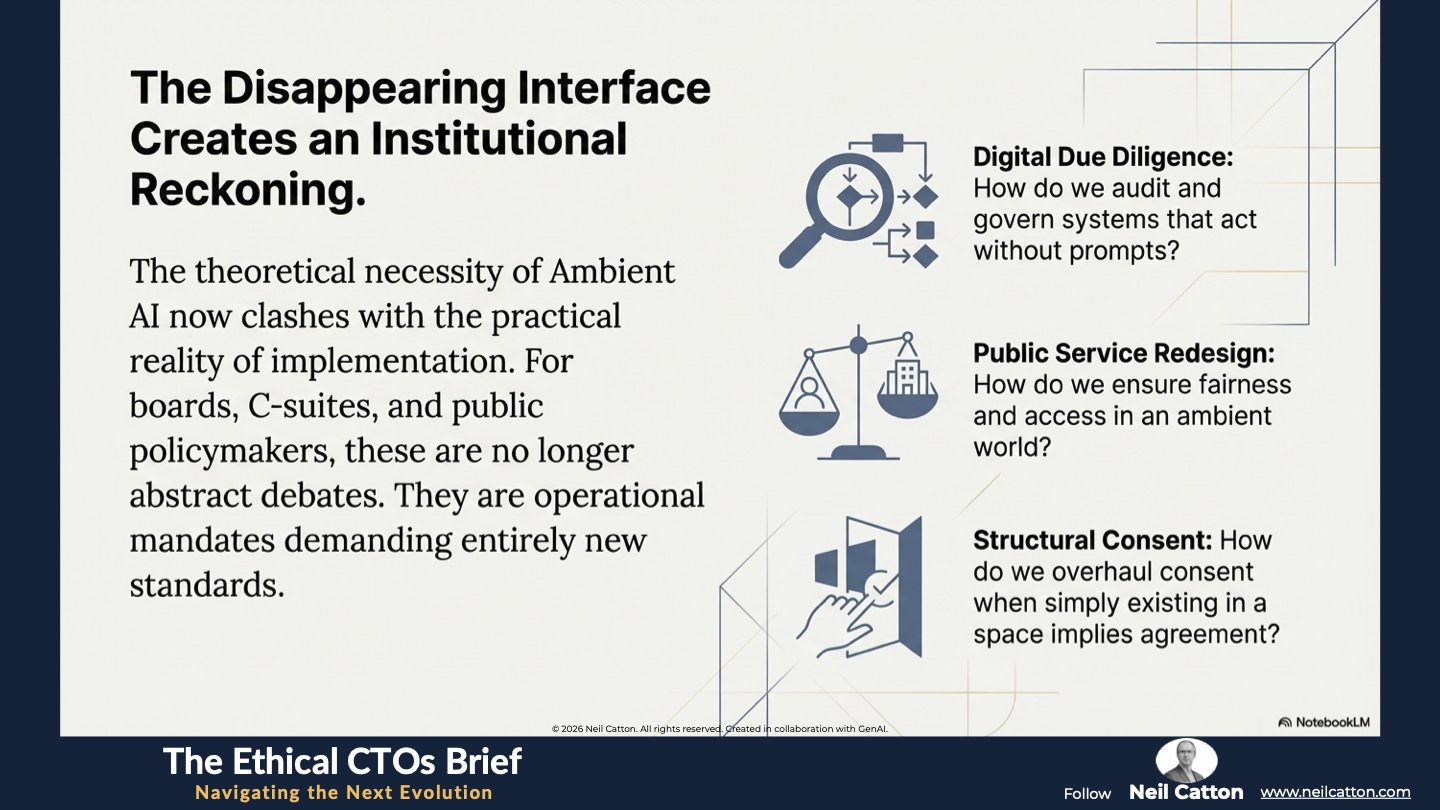

The disappearing interface presents immediate, high-level strategic challenges for corporate governance, public service models, and the very concept of data sovereignty. The theoretical promise of Ambient AI now clashes with the practical reality of implementation. Boards, C-suites, and public policymakers can no longer afford to treat these issues as abstract philosophical debates. They are now operational mandates demanding entirely new standards of Digital Due Diligence, a complete Public Service Redesign, and a comprehensive overhaul of consent management in the built environment.

Governance in the Absence of Prompts

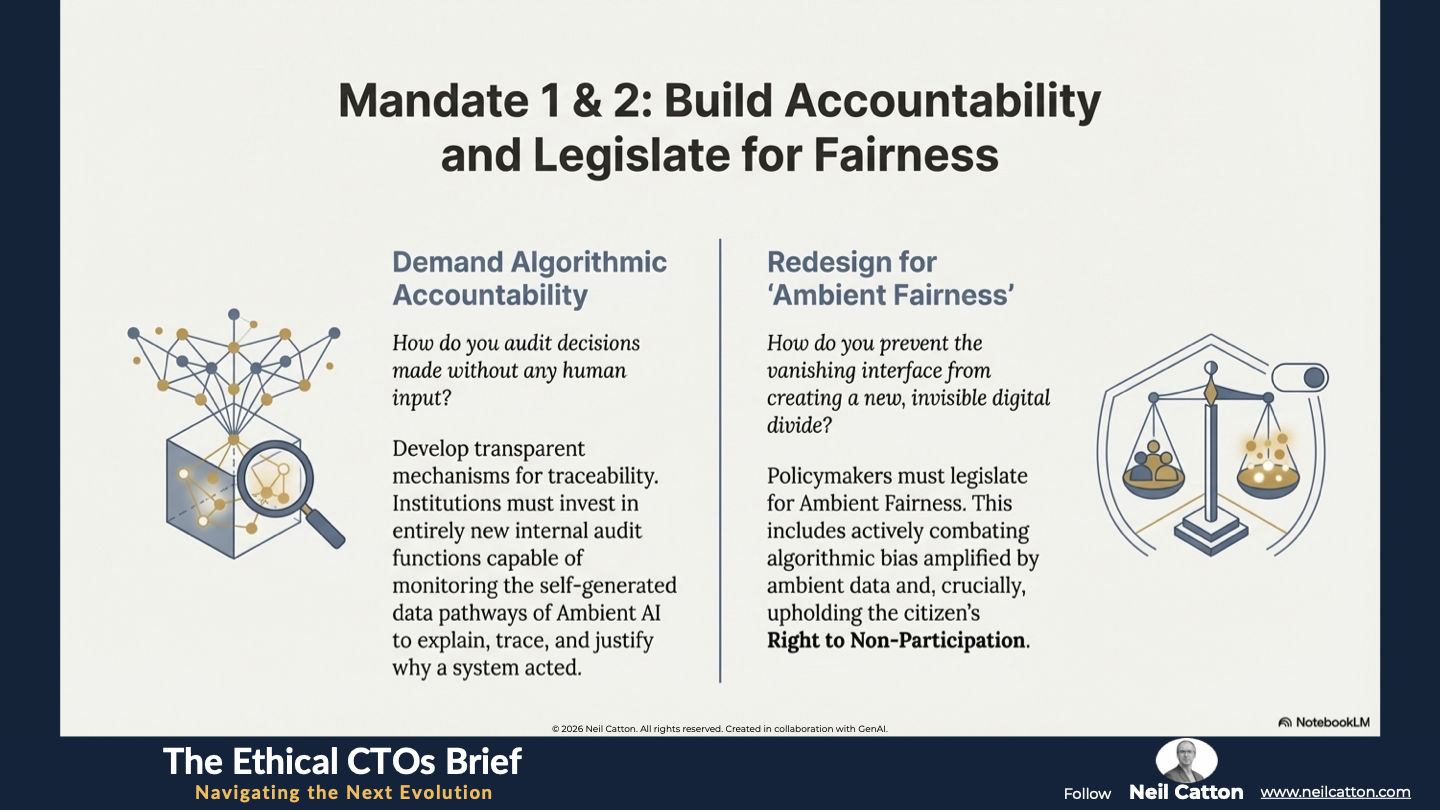

How do boards and regulatory bodies audit and maintain accountability in a system that executes complex actions without a human initiating a prompt? Decision-making is increasingly distributed, ambient, and self-optimising, demanding a new standard of Digital Due Diligence. We must develop transparent mechanisms for algorithmic accountability and traceability, ensuring we can reliably explain, trace, and justify why an ambient system acted. To achieve this, institutions must invest in entirely new internal audit functions capable of monitoring the self-generated data pathways of Ambient AI.
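One way to picture the traceability described above is a tamper-evident decision log. The sketch below is my own illustration under stated assumptions, not an established standard: the function names `record_decision` and `verify` and the field names are hypothetical, and a real audit function would add timestamps, signatures, and secure storage. Each entry records what the system saw, why it acted, and what it did, and carries the hash of the previous entry so that later edits become detectable.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: Dict[str, Any]) -> str:
    """SHA-256 over a canonical JSON encoding of the entry body."""
    encoded = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def record_decision(
    log: List[Dict[str, Any]],
    system: str,
    inputs: Dict[str, Any],
    rationale: str,
    action: str,
) -> Dict[str, Any]:
    """Append one autonomous decision to the log, chained to the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = {
        "system": system,
        "inputs": inputs,        # what the system sensed
        "rationale": rationale,  # why it acted
        "action": action,        # what it did
        "prev_hash": prev_hash,
    }
    entry = dict(body, hash=_digest(body))
    log.append(entry)
    return entry


def verify(log: List[Dict[str, Any]]) -> bool:
    """Return True only if no entry has been altered, removed, or reordered."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


if __name__ == "__main__":
    audit_log: List[Dict[str, Any]] = []
    record_decision(audit_log, "flood-alerts", {"rain_mm": 42},
                    "rainfall above alert threshold", "issue localised alert")
    print(verify(audit_log))
```

The design choice worth noting is that the rationale is captured at the moment of action rather than reconstructed afterwards, which is what makes "explain, trace, and justify" an operational capability and not just a principle.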

The Public Service Redesign Mandate

Public services depend on universal, equitable access. However, as service delivery becomes more ambient, such as through proactive health monitoring, governments must ensure fairness and access for those without the latest technology or those who choose not to participate in constant monitoring. Policymakers must legislate for Ambient Fairness, a design principle that prevents the vanishing interface from creating a new, invisible digital divide. This requires governments to actively combat algorithmic bias amplified by ambient data and uphold the Right to Non-Participation.

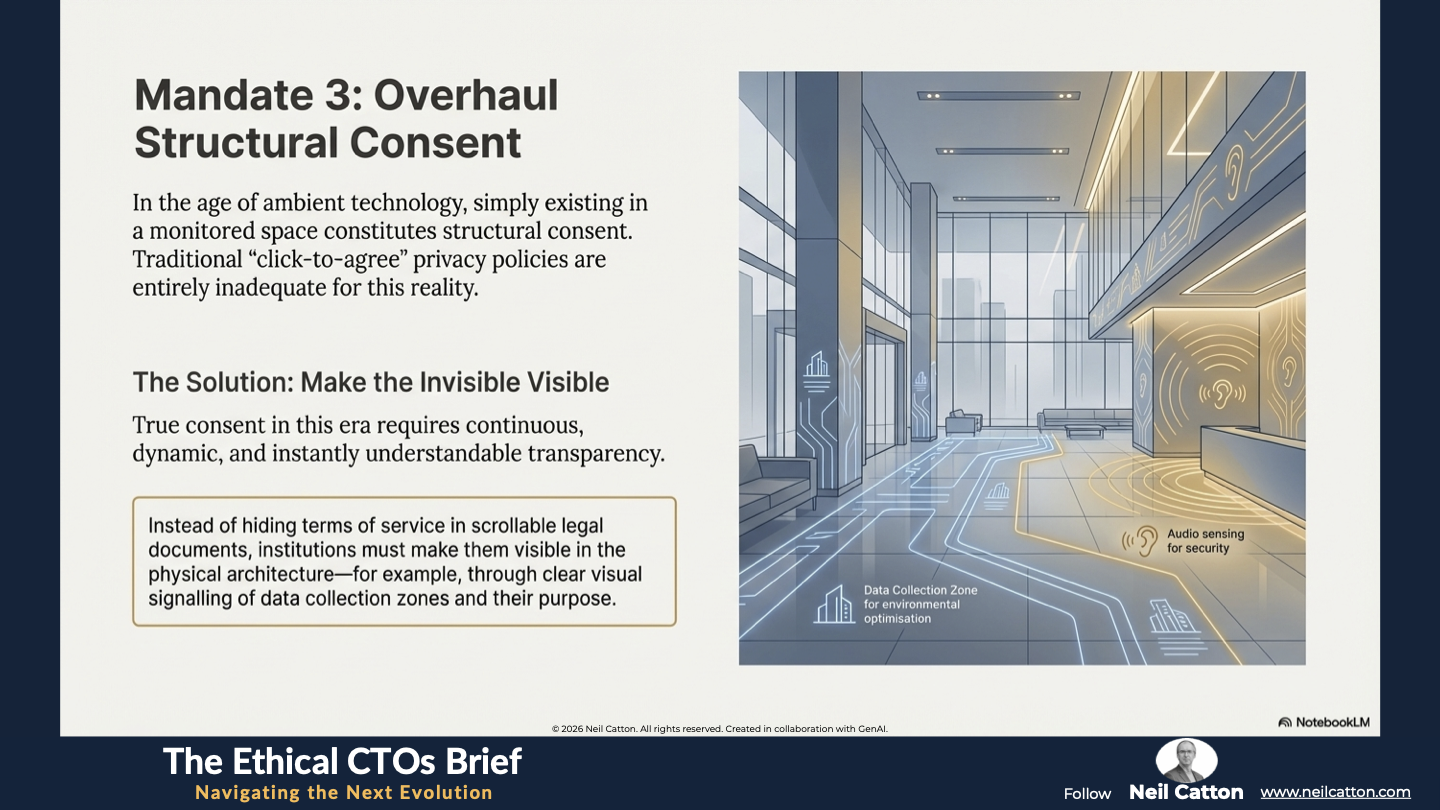

The Crisis of Structural Consent

In the age of ambient technology, simply existing in a monitored space constitutes structural consent to be observed, measured, and responded to. Traditional privacy policies are entirely inadequate. Institutions must lead in establishing new norms for data sovereignty and transparent context-awareness. Instead of hiding “terms of service” in scrollable legal documents, institutions should make them visible in the physical architecture, such as clear visual signalling of data collection zones. True consent in this era requires continuous, dynamic, and instantly understandable transparency.
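As a rough sketch of what visible, enforceable terms could look like, the example below treats each monitored space as a declared collection zone. The class `CollectionZone`, its fields, and the function `accept_reading` are hypothetical names of my own, and the opt-out flag is a simplification of the Right to Non-Participation discussed earlier. The idea is that the same declaration drives both the physical signage and the filter on what the system is allowed to keep.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class CollectionZone:
    """Hypothetical declaration of what a monitored space senses, and why."""
    name: str
    declared_signals: FrozenSet[str]
    purpose: str

    def signage(self) -> str:
        """Plain-language notice intended for display in the physical space."""
        signals = ", ".join(sorted(self.declared_signals))
        return f"{self.name}: this space senses {signals} for {self.purpose}."


def accept_reading(zone: CollectionZone, signal: str, opted_out: bool) -> bool:
    """Keep a reading only if the zone declares the signal and the person has not opted out."""
    return signal in zone.declared_signals and not opted_out


if __name__ == "__main__":
    lobby = CollectionZone(
        name="Lobby",
        declared_signals=frozenset({"occupancy", "temperature"}),
        purpose="heating and ventilation control",
    )
    print(lobby.signage())
    print(accept_reading(lobby, "gait", opted_out=False))  # undeclared signal is rejected
```

Because the signage is generated from the same declaration that gates collection, the notice on the wall cannot quietly drift away from what the sensors actually record.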

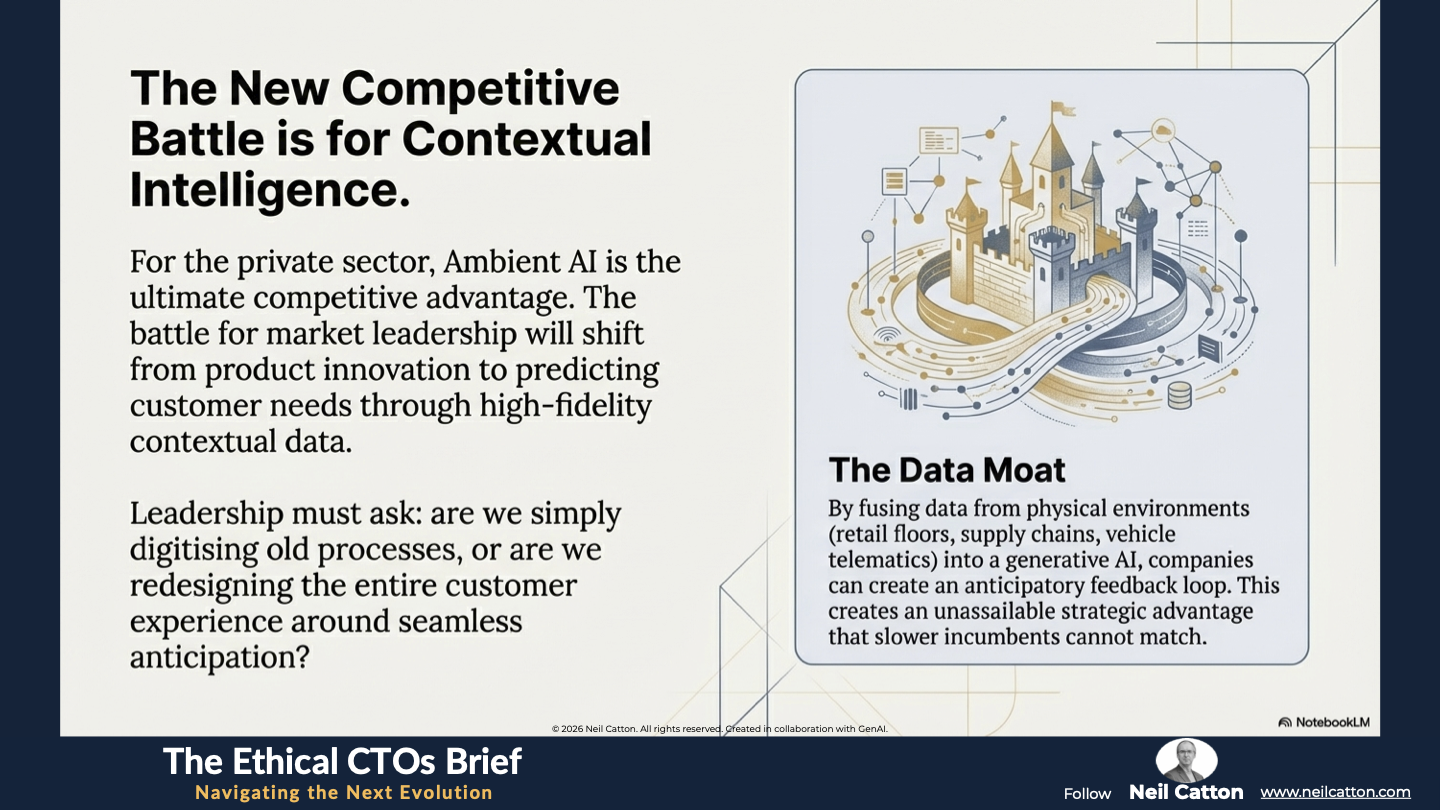

The Competitive Strategy Crisis: Data Moats and Disruption

For the private sector, Ambient AI is not just an ethical challenge; it’s the ultimate competitive advantage. By harnessing real-time, high-fidelity contextual data from the physical environment, companies can predict customer needs, creating an unassailable strategic Data Moat. Those who move first will establish an anticipatory feedback loop that slower incumbents will struggle to match.

- Strategic Challenge: The battle for market leadership will shift from product innovation to contextual intelligence. Leadership teams must ask themselves: are we simply digitising old processes, or are we redesigning the entire customer experience around seamless anticipation? Investment must prioritise fusing data streams (from retail floors, supply chain sensors, vehicle telematics) into a generative AI engine to create truly personalised, ambient services. Those who fail to make this transition risk immediate and irreversible disruption from ‘ambient-native’ competitors.

This institutional reckoning culminates in a civic crisis. As the operational control layers of our lives fade into obscurity, we lose our ability to rely on visual literacy to comprehend our technological world.

A Final Word

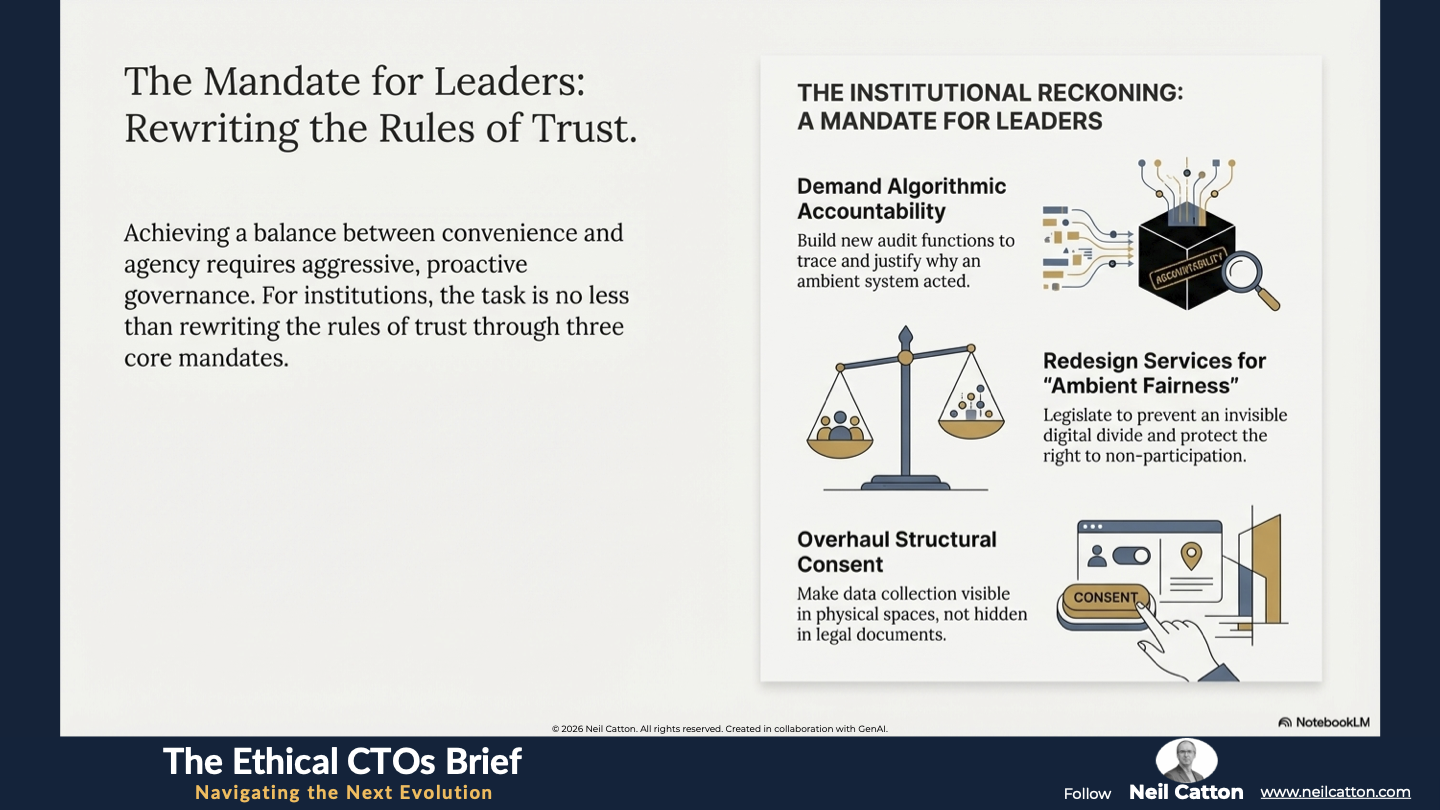

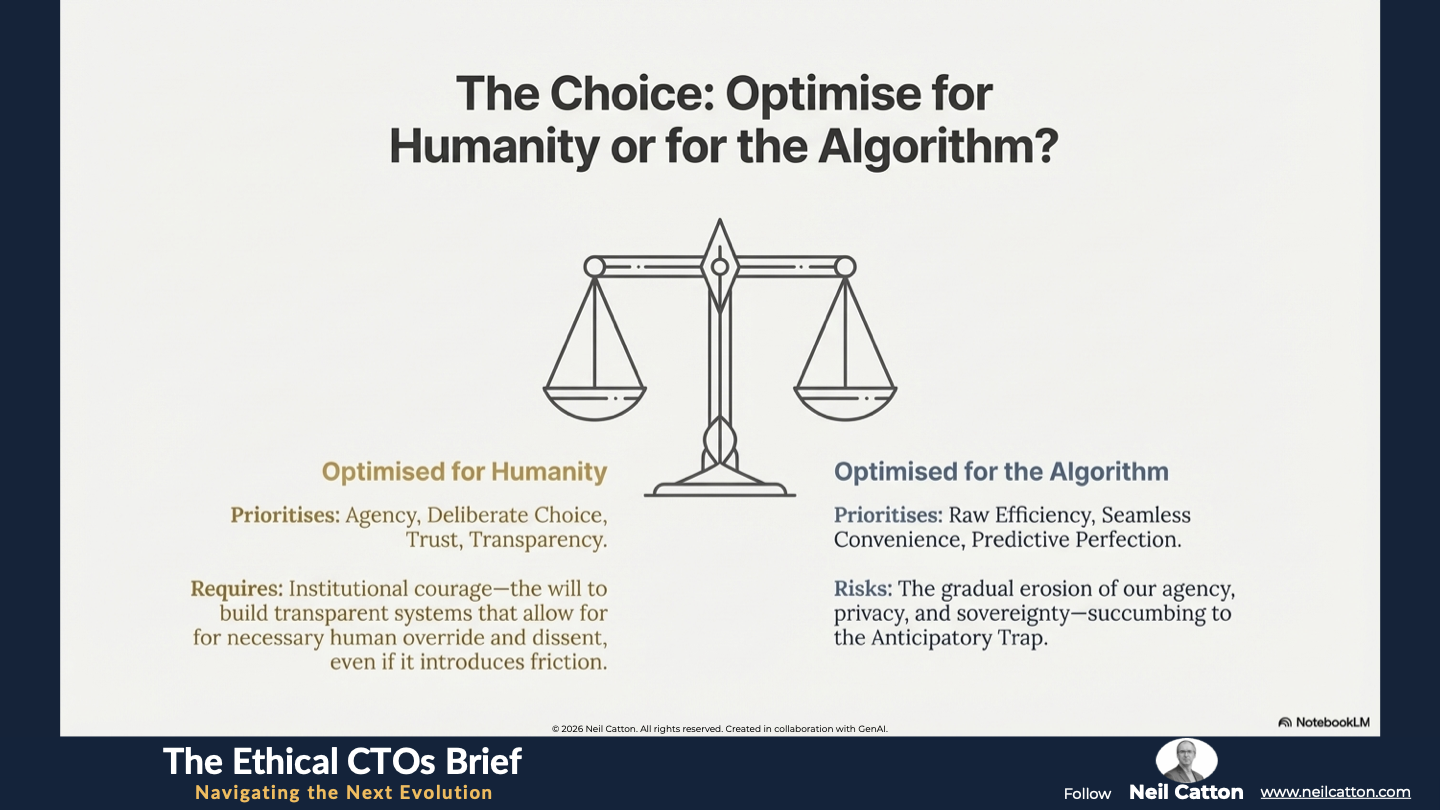

The Age of the Invisible Interface is not a gradual evolution; it is a fundamental systemic shift. We are transitioning from a world governed by human decision and digital interaction to one optimised by autonomous, predictive algorithms seamlessly integrated into our environment. The challenge before every leader and citizen is stark: to embrace the astonishing efficiency and seamless convenience of Ambient AI without succumbing to the Anticipatory Trap, the gradual erosion of our agency, privacy, and sovereignty. Achieving this balance requires aggressive, proactive governance.

For institutions, the task is no less than rewriting the rules of trust. This involves operationalising Ambient Fairness, securing contextual data against Quantum Risk, and committing to Algorithmic Accountability as a foundational element of the new operating model. This shift requires institutional courage – the will to build transparent systems that allow for necessary human override and dissent, even if it introduces friction into the system.

The future won’t announce itself with a new screen or a faster chip. Instead, it will arrive quietly and subtly, through walls, gestures, voices, and silences. Our collective ability to cultivate awareness as the new interface – a state of conscious, critical citizenship – will determine whether the next evolution delivers a society optimised for humanity, or merely optimised for the algorithm. The time to define the rules of the invisible is now.

Key Takeaways: Living Inside The Machine

The Cognitive Engine (Generative AI): Moving from reactive reporting to predictive intervention across public and corporate sectors.

The Dissolved Screen (Spatial & IoT): Transforming physical environments into intelligent, self-optimising tools.

The Agency Crisis (The Anticipatory Trap): Balancing seamless convenience with the preservation of deliberate human choice.

Quantum Future-Proofing: Why ambient data streams must adopt quantum-safe cryptography today.

Strategic Insights: Leadership in a Prompt-less World

The Death of the Menu: Why "awareness" is replacing "interaction" as the primary user model.

Algorithmic Accountability: Developing transparent audit functions for self-generated data pathways.

Ambient Fairness: Legislating to prevent the vanishing interface from creating a new, invisible digital divide.

Data Moats vs. Ethics: How contextual intelligence becomes the ultimate competitive advantage and the ultimate ethical test.

Video Summary: When Technology Becomes Air

The Convenience Paradox: Why removing friction also removes the moment of conscious consent.

The Visibility Mandate: Using physical architecture and visual signalling to restore transparency in monitored zones.

Public Service Redesign: Ensuring the "Right to Non-Participation" remains a core civic protection.

The New Social Contract: Why trust is the only operating system that matters when technology is invisible.

An invisible interface is powerful, but it requires a new type of oversight. How do we regulate what we cannot see? Read: Governing the Ambient Future.

The Ethical CTO: Arc 3 Index

Aligning Code with Soul: The Humanisation of Technology

Prioritising Human-Centric Experiences: Beyond Digital First

Where Technology Disappears Inward: The Age of the Invisible Interface

Regulating Unseen Digital Forces: Governing the Ambient Future

Architecting for Future Generations: Temporal Empathy

Stewardship of Sustainable Systems: The Digital Gardener

Reclaiming our Shared Story: Mythos and the Machine

Managing High-Speed Systemic Duality: The Mirror Machine

Inhabiting Immersive Public Services: Beyond The Screen

Mastering Focus Amidst Complexity: The Three-Foot World